By Tanya Tang, Andrew Mehrmann

At Netflix, the importance of ML observability cannot be overstated. ML observability refers to the ability to monitor, understand, and gain insights into the performance and behavior of machine learning models in production. It involves tracking key metrics, detecting anomalies, diagnosing issues, and ensuring models are operating reliably and as intended. ML observability helps teams identify data drift, model degradation, and operational problems, enabling faster troubleshooting and continuous improvement of ML systems.

One specific area where ML observability plays a crucial role is payment processing. At Netflix, we strive to ensure that technical or process-related payment issues never become a barrier for someone wanting to sign up or continue using our service. By leveraging ML to optimize payment processing, and using ML observability to monitor and explain these decisions, we can reduce payment friction. This ensures that new members can subscribe seamlessly and existing members can renew without hassle, allowing everyone to enjoy Netflix without interruption.

ML observability is a set of practices and tools that help ML practitioners and stakeholders alike gain a deeper, end-to-end understanding of their ML systems across all stages of their lifecycle, from development to deployment to ongoing operations. An effective ML observability framework not only facilitates automatic detection and surfacing of issues but also provides detailed root cause analysis, acting as a guardrail to ensure ML systems perform reliably over time. This enables teams to iterate on and improve their models rapidly and to reduce time to detection for incidents, while also increasing the buy-in and trust of their stakeholders by providing rich context about the system’s behaviors and impact.

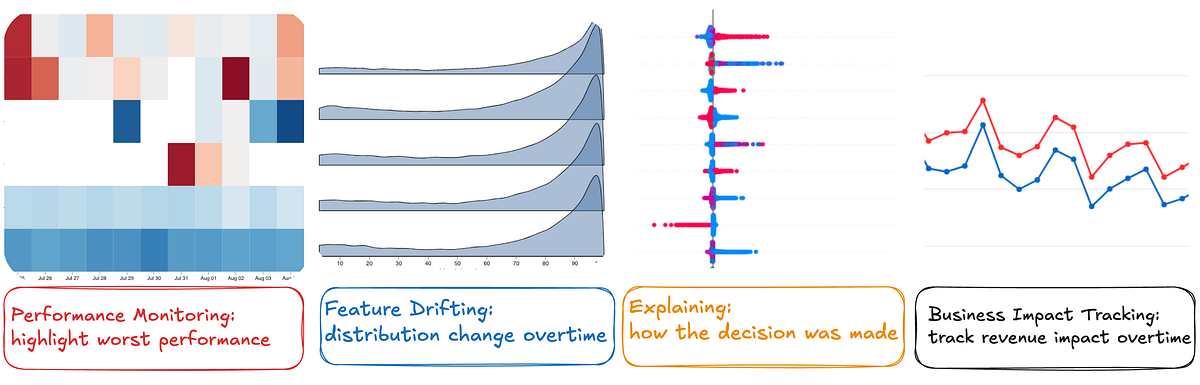

Some examples of these tools include long-term production performance monitoring and analysis; feature, target, and prediction drift monitoring; automated data quality checks; and model explainability. For example, an observability system would detect aberrations in input data, the feature pipeline, predictions, and outcomes, as well as provide insight into the likely causes of model decisions and/or performance.
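As a rough illustration of what a drift check can look like, the sketch below computes a Population Stability Index (PSI) between a reference sample and a recent sample of one numeric feature or prediction score. PSI is a commonly used drift statistic and is chosen here only as an example; the function name, the equal-width binning, and the `eps` smoothing are assumptions of this sketch, not a description of the tooling used at Netflix.

```python
import math
from typing import List, Sequence


def population_stability_index(
    reference: Sequence[float],
    current: Sequence[float],
    n_bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Compare two samples of one numeric column using PSI.

    Bins are equal-width over the reference range; values outside that
    range are clipped into the first or last bin. `eps` avoids log(0).
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / n_bins or 1.0  # guard against a constant column

    def bin_shares(values: Sequence[float]) -> List[float]:
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), n_bins - 1)] += 1
        return [c / len(values) for c in counts]

    ref_shares = bin_shares(reference)
    cur_shares = bin_shares(current)
    # Each term is non-negative: (c - r) and log(c / r) share a sign.
    return sum(
        (c - r) * math.log((c + eps) / (r + eps))
        for r, c in zip(ref_shares, cur_shares)
    )
```

Identical samples give a PSI of exactly zero, and the value grows as the two distributions move apart. Any alerting threshold would need to be tuned per feature.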

As an ML portfolio grows, ad-hoc monitoring becomes increasingly challenging. Greater complexity also raises the likelihood of interactions between different model components, making it unrealistic to treat each model as an isolated black box. At this stage, investing strategically in observability is essential, not only to support the current portfolio but also to prepare for future growth.

In order to reliably and responsibly evolve our payments processing system to be increasingly ML-driven, we invested heavily up-front in ML observability solutions. To provide confidence to our business stakeholders through this evolution, we looked beyond technical metrics such as precision and recall and placed greater emphasis on real-world outcomes like “how much traffic did we send down this route” and “where are the areas in which ML is underperforming.”

Using this as a guidepost, we designed a collection of interconnected modules for machine learning observability: logging, monitoring, and explaining.

In order to support the monitoring and explaining we wanted to do, we first needed to log the appropriate data. This seems obvious and trivial, but as usual the devil is in the details: what fields exactly do we need to log and when? How does this work for simple models vs. more complex ones? What about models that are actually made of multiple models?

Consider the following, relatively straightforward model. It takes some input data, creates features, passes these to a model which produces a score between 0 and 1, and then that score is translated into a decision (say, whether to process a card as Debit or Credit).

There are several elements you may wish to log: a unique identifier for each record that is trained and scored, the raw data, the final features that fed the model, a unique identifier for the model, the feature importances for that model, the raw model score, the cutoffs used to map a score to a decision, timestamps for the decision as well as the model, etc.
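To make the list above concrete, here is a minimal sketch of what a single logged decision could look like for the Debit/Credit example. Every field name, the `DecisionLogRecord` class, and the direction of the cutoff in `decide` are hypothetical choices made for illustration; this is not the schema the article refers to. Feature importances are left out on the assumption that they would be logged once per model rather than once per record.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Any, Dict


@dataclass
class DecisionLogRecord:
    """One scored record; every field name here is a hypothetical choice."""

    record_id: str              # unique id for the scored record
    model_id: str               # unique id for the model version
    raw_inputs: Dict[str, Any]  # data as received, before feature creation
    features: Dict[str, float]  # final features that fed the model
    score: float                # raw model score in [0, 1]
    cutoff: float               # threshold used to map score to decision
    decision: str               # e.g. "DEBIT" or "CREDIT"
    model_trained_at: str       # ISO timestamp for the model
    decided_at: str = field(    # ISO timestamp for the decision
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)


def decide(score: float, cutoff: float) -> str:
    """Map a score to a decision; the Debit/Credit direction is assumed."""
    return "DEBIT" if score >= cutoff else "CREDIT"


record = DecisionLogRecord(
    record_id="txn-001",
    model_id="card-type-v3",
    raw_inputs={"card_bin": "411111"},
    features={"bin_debit_rate": 0.82},
    score=0.74,
    cutoff=0.5,
    decision=decide(0.74, 0.5),
    model_trained_at="2024-01-01T00:00:00+00:00",
)
print(record.to_json())
```

Logging the cutoff next to the score is what lets a later analysis tell "the model changed its mind" apart from "the threshold moved".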

To address this, we drafted an initial data schema that would enable our various ML observability initiatives. We identified the following logical entities as necessary for the observability initiatives we were pursuing:

The goal of monitoring is twofold: 1) enable self-serve analytics and 2) provide opinionated views into key model insights. It can be helpful to think of this as “business analytics on your models,” as a lot of the key concepts (online analytical processing cubes, visualizations, metric definitions, etc.) carry over. Following this analogy, we can craft key metrics that help us understand our models. There are several considerations when defining metrics, including whether you need to understand real-world model behavior or offline model metrics, and whether your audience is ML practitioners or model stakeholders.

Due to our particular needs, our bias for metrics is toward online, stakeholder-focused metrics. Online metrics tell us what actually happened in the real world, rather than in an idealized counterfactual universe that might have its own biases. Additionally, our stakeholders’ focus is on business outcomes, so our metrics tend to be outcome-focused rather than abstract and technical model metrics.

We focused on simple, easy-to-explain metrics:

These metrics begin to suggest reasons for changing trends in the model’s behavior over time, as well as more generally how the model is performing. This gives us an overall view of model health and an intuitive approximation of what we think the model should have done. For example, if payment processor A starts receiving more traffic in a certain market compared to payment processor B, you might ask:

- Has the model seen something to make it prefer processor A?

- Have more transactions become eligible to go to processor A?
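The two questions above can be partly separated with simple online aggregates. The sketch below assumes each logged decision carries the chosen processor and the set of processors that were eligible for that transaction; the `Event` shape and the metric names are assumptions made for this example.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple

# Each event: (period, chosen_processor, eligible_processors). Hypothetical shape.
Event = Tuple[str, str, Tuple[str, ...]]


def traffic_and_eligibility_share(
    events: Iterable[Event], processor: str
) -> Dict[str, Dict[str, float]]:
    """Per period: share of traffic sent to `processor`, share of
    transactions for which it was eligible, and how often it was
    selected when it was eligible."""
    total, chosen, eligible = Counter(), Counter(), Counter()
    for period, chosen_processor, eligible_processors in events:
        total[period] += 1
        if processor in eligible_processors:
            eligible[period] += 1
        if chosen_processor == processor:
            chosen[period] += 1
    return {
        period: {
            "traffic_share": chosen[period] / n,
            "eligibility_share": eligible[period] / n,
            "selection_rate_when_eligible": (
                chosen[period] / eligible[period] if eligible[period] else 0.0
            ),
        }
        for period, n in total.items()
    }
```

If `traffic_share` rises while `selection_rate_when_eligible` stays flat, the shift is more likely explained by eligibility than by a change in the model's preference.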

However, to truly explain specific decisions made by the model, especially which features are responsible for current trends, we need to use more advanced explainability tools, which are discussed in the next section.

Explainability means understanding the “why” behind ML decisions. This can mean the “why” in aggregate (e.g. why are so many of our transactions suddenly going down one particular route) or the “why” for a single instance (e.g. what factors led to this particular transaction being routed a particular way). This gives us the ability to approximate the previous status quo, where we could inspect our static rules for insights about route volume.

One of the most effective tools we can leverage for ML explainability is SHAP (SHapley Additive exPlanations, Lundberg & Lee 2017). At a high level, SHAP values are derived from cooperative game theory, specifically the concept of Shapley values. The core idea is to fairly distribute the “payout” (in our case, the model’s prediction) among the “players” (the input features) by considering their contribution to the prediction.
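To show the "fair payout" idea on a very small scale, the sketch below computes exact Shapley values by enumerating every coalition of features. The toy value function and its numbers are made up, and treating a missing feature as contributing nothing is a simplifying assumption. The `shap` library uses faster model-specific or sampling-based estimators rather than this brute-force enumeration, which is exponential in the number of features.

```python
from itertools import combinations
from math import factorial
from typing import Callable, Dict, FrozenSet, List


def shapley_values(
    players: List[str], value: Callable[[FrozenSet[str]], float]
) -> Dict[str, float]:
    """Exact Shapley values: the weighted average marginal contribution of
    each player over all coalitions of the other players."""
    n = len(players)
    result = {}
    for p in players:
        others = [q for q in players if q != p]
        phi = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for coalition in combinations(others, size):
                s = frozenset(coalition)
                phi += weight * (value(s | {p}) - value(s))
        result[p] = phi
    return result


def toy_value(known: FrozenSet[str]) -> float:
    """Toy 'model': approval score given which features are known."""
    score = 0.50                      # baseline prediction
    if "route" in known:
        score += 0.10
    if "day_of_week" in known:
        score -= 0.04
    if "route" in known and "day_of_week" in known:
        score -= 0.06                 # interaction between the two features
    if "amount" in known:
        score += 0.02
    return score


features = ["route", "day_of_week", "amount"]
phi = shapley_values(features, toy_value)
print(phi)
```

The interaction term is split evenly between `route` and `day_of_week`, and the three values sum to the difference between the full prediction and the baseline.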

Key Benefits of SHAP:

- Model-Agnostic: SHAP can be applied to any ML model, making it a versatile tool for explainability.

- Consistent and Local Explanations: SHAP provides consistent explanations for individual predictions, helping us understand the contribution of each feature to a specific decision.

- Global Interpretability: By aggregating SHAP values across many predictions, we can gain insights into the overall behavior of the model and the importance of different features.

- Mathematical properties: SHAP satisfies important mathematical axioms such as efficiency, symmetry, dummy, and additivity. These properties allow us to compute explanations at the individual level and aggregate them for any ad-hoc groups that stakeholders are interested in, such as country, issuing bank, processor, or any combination thereof.
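The aggregation described in the last bullet can be sketched in a few lines, assuming per-prediction SHAP values have already been computed and stored alongside the attributes stakeholders want to slice by. The row shape, the attribute names, and the choice of a plain mean are all assumptions of this example.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# One explained prediction: (group attributes, per-feature SHAP values).
Explained = Tuple[Dict[str, str], Dict[str, float]]


def mean_shap_by_group(
    rows: Iterable[Explained], group_keys: List[str]
) -> Dict[Tuple[str, ...], Dict[str, float]]:
    """Average per-feature SHAP values within each ad-hoc group."""
    sums = defaultdict(lambda: defaultdict(float))
    counts = defaultdict(int)
    for attrs, shap_row in rows:
        key = tuple(attrs[k] for k in group_keys)
        counts[key] += 1
        for feature, value in shap_row.items():
            sums[key][feature] += value
    return {
        key: {f: total / counts[key] for f, total in feats.items()}
        for key, feats in sums.items()
    }


rows = [
    ({"country": "BR", "processor": "W"}, {"route": 0.10, "day_of_week": -0.02}),
    ({"country": "BR", "processor": "W"}, {"route": 0.06, "day_of_week": -0.06}),
    ({"country": "US", "processor": "W"}, {"route": -0.01, "day_of_week": 0.03}),
]
by_country = mean_shap_by_group(rows, ["country"])
by_country_and_processor = mean_shap_by_group(rows, ["country", "processor"])
```

Because each row's SHAP values sum to that row's prediction minus the baseline, the group means sum to the group's mean prediction minus the baseline, so the same stored values can be re-sliced by any grouping without recomputing explanations.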

Because of the above advantages, we leverage SHAP as one core algorithm to unpack a variety of models and open the black box for stakeholders. Its well-documented Python interface makes it easy to integrate into our workflows.

Explaining Complex ML Systems

For ML systems that score single events and use the output scores directly for business decisions, explainability is relatively straightforward, as the production decision is directly tied to the model’s output. However, in the case of a bandit algorithm, explainability can be more complex because the bandit policy may involve multiple layers, meaning the model’s output may not be the final decision used in production. For example, we may have a classifier model to predict the likelihood of transaction approval for each route, but we might want to penalize certain routes due to higher processing fees.

Here is an example of a plot we built to visualize these layers. The traffic that the model would have chosen on its own is on the left, and different penalty or guardrail layers affect the final volume as you move from left to right. For example, the model initially allocated 22% of traffic to Processor W with Configuration A; however, for cost and contractual considerations, that traffic was reduced to 19%, with the remaining 3% allocated to Processor W with Configuration B and Processor Nc with Configuration B.
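The numbers behind such a plot can be derived by replaying each transaction through the policy one layer at a time and recording which route would win at each stage. The sketch below is a simplified illustration: it picks the highest-scoring route greedily rather than modeling a real bandit's exploration, and the `fee_penalty` layer, the route names, and the penalty amount are invented for the example.

```python
from collections import Counter
from typing import Callable, Dict, List

Scores = Dict[str, float]           # route -> score for one transaction
Layer = Callable[[Scores], Scores]  # a layer adjusts the scores


def fee_penalty(scores: Scores) -> Scores:
    """Hypothetical penalty layer: subtract a made-up per-route fee cost."""
    penalties = {"W/A": 0.05}
    return {r: s - penalties.get(r, 0.0) for r, s in scores.items()}


def share_by_layer(
    batch: List[Scores], layers: List[Layer]
) -> List[Dict[str, float]]:
    """Traffic share each route would get after 0, 1, ... layers are applied."""
    stages: List[Counter] = [Counter() for _ in range(len(layers) + 1)]
    for scores in batch:
        current = dict(scores)
        stages[0][max(current, key=current.get)] += 1  # model's own choice
        for i, layer in enumerate(layers, start=1):
            current = layer(current)
            stages[i][max(current, key=current.get)] += 1
    return [
        {route: n / len(batch) for route, n in stage.items()} for stage in stages
    ]


batch = [
    {"W/A": 0.90, "W/B": 0.88},
    {"W/A": 0.95, "W/B": 0.80},
    {"W/A": 0.70, "W/B": 0.85},
    {"W/A": 0.92, "W/B": 0.60},
]
shares = share_by_layer(batch, [fee_penalty])
```

Here `shares[0]` is the allocation the model alone would have produced and `shares[-1]` is the allocation after all layers, so the difference between adjacent stages attributes a volume change to a specific layer.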

While individual instance analysis is important in some domains, such as fraud detection, where false positives need to be scrutinized, stakeholders in payment processing are more interested in explaining model decisions at a group level (e.g., decisions for one issuing bank from a country). This is important for business conversations with external parties. SHAP’s mathematical properties allow for flexible aggregation at the group level while maintaining consistency and accuracy.

Additionally, due to the multi-candidate structure, when stakeholders inquire about why a particular candidate was chosen, they are often interested in the differential perspective: specifically, why another similar candidate was not chosen. We leverage SHAP to segment populations into cohorts that share the same candidates and identify the features that make subtle but critical differences. For example, while Feature A might be globally important, if we compare two candidates that both have the same value for Feature A, the local differences become crucial. This facilitates stakeholder discussions and helps us understand subtle differences among different routes or payment partners.
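One simple way to express the differential perspective is to subtract the per-feature SHAP values of the alternative candidate from those of the chosen candidate and rank features by the size of the gap. This is a sketch under the assumption that both candidates were scored by the same model against the same baseline, so their SHAP values are directly comparable; the function and feature names are hypothetical.

```python
from typing import Dict, List, Tuple


def differential_explanation(
    chosen: Dict[str, float], alternative: Dict[str, float], top_k: int = 3
) -> List[Tuple[str, float]]:
    """Rank features by how much more they favored `chosen` than
    `alternative` (difference of per-feature SHAP values)."""
    features = set(chosen) | set(alternative)
    diffs = {
        f: chosen.get(f, 0.0) - alternative.get(f, 0.0) for f in features
    }
    ranked = sorted(diffs.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return ranked[:top_k]


chosen_route = {"feature_a": 0.30, "fee_tier": 0.05, "issuer_affinity": 0.08}
similar_route = {"feature_a": 0.30, "fee_tier": -0.04, "issuer_affinity": 0.02}
ranked = differential_explanation(chosen_route, similar_route)
```

In this made-up example `feature_a` has the largest SHAP value for both candidates but a difference of zero, so it drops to the bottom of the ranking, while `fee_tier` surfaces as the feature that separated the two routes.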

Earlier, we were alerted that our ML model consistently reduced traffic to a particular route every Tuesday. By leveraging our explanation system, we identified that two features, route and day of the week, were contributing negatively to the predictions on Tuesdays. Further analysis revealed that this route had experienced an outage on a previous Tuesday, which the model had learned and encoded into the route and day of the week features. This raises an important question: should outage data be included in model training? This discovery opens up discussions with stakeholders and provides opportunities to further improve our ML system.

The explanation system not only demystifies our machine learning models but also fosters transparency and trust among our stakeholders, enabling more informed and confident decision-making.

At Netflix, we face the challenge of routing thousands of payment transactions per minute in our mission to entertain the world. To help meet this challenge, we introduced an observability framework and a set of tools that allow us to open the ML black box and understand the intricacies of how we route billions of dollars of transactions in hundreds of countries every year. This has led to a massive reduction in operational complexity in addition to an improved transaction approval rate, while also allowing us to focus on innovation rather than operations.

Looking ahead, we are generalizing our solution with a standardized data schema. This will simplify applying our advanced ML observability tools to other models across various domains. By creating a versatile and scalable framework, we aim to empower ML developers to quickly deploy and improve models, bring transparency to stakeholders, and accelerate innovation.

We also thank Karthik Chandrashekar, Zainab Mahar Mir, Josh Karoly and Josh Korn for their helpful suggestions.