Authors: Adrian Taruc and James Dalton

This is the first entry of a multi-part blog series describing how we built a Real-Time Distributed Graph (RDG). In Part 1, we will discuss the motivation for creating the RDG and the architecture of the data processing pipeline that populates it.

Introduction

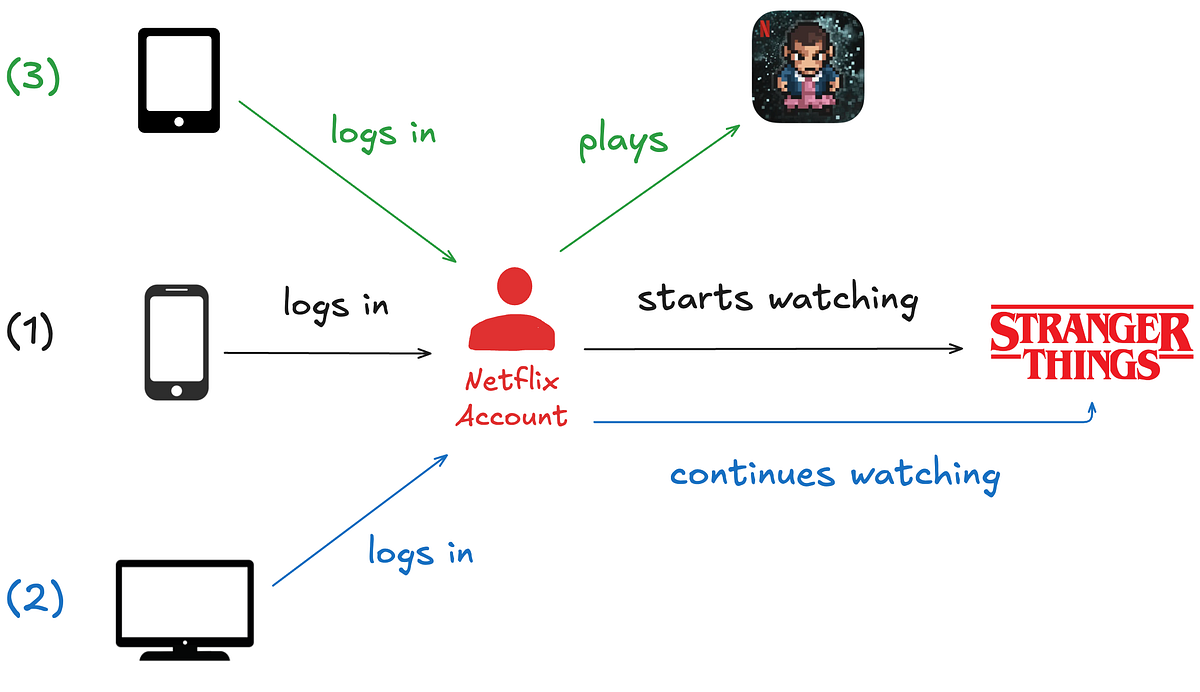

The Netflix product experience historically consisted of a single core offering: streaming video on demand. Our members logged into the app, browsed, and watched titles such as Stranger Things, Squid Game, and Bridgerton. Although this is still the core of our product, our business has changed significantly over the last few years. For example, we introduced ad-supported plans, live programming events (e.g., Jake Paul vs. Mike Tyson and NFL Christmas Day Games), and mobile games as part of a Netflix subscription. This evolution of our business has created a new class of problems where we have to analyze member interactions with the app across different business verticals. Let’s walk through a simple example scenario:

- Imagine a Netflix member logging into the app on their smartphone and beginning to watch an episode of Stranger Things.

- Eventually, they decide to watch on a bigger screen, so they log into the app on a smart TV in their home and continue watching the same episode.

- Finally, after completing the episode, they log into the app on their tablet and play the game “Stranger Things: 1984”.

We want to know that these three activities belong to the same member, despite occurring at different times and across various devices. In a traditional data warehouse, these events would land in at least two different tables and may be processed at different cadences. But in a graph system, they become connected almost instantly. Ultimately, analyzing member interactions in the app across domains empowers Netflix to create more personalized and engaging experiences.

In the early days of our business expansion, discovering these relationships and contextual insights was extremely difficult. Netflix is famous for adopting a microservices architecture: hundreds of microservices developed and maintained by hundreds of individual teams. Some notable benefits of microservices are:

- Service Decomposition: The overall platform is separated into smaller services, each responsible for a specific business capability. This modularity allows for independent service development, deployment, and scaling.

- Data Isolation: Each service manages its own data, reducing interdependencies. This allows teams to choose the most suitable data schemas and storage technologies for their services.

However, these benefits also led to drawbacks for our data science and engineering partners. In practice, the separation of business concerns and service development ultimately resulted in a separation of data. Manually stitching data together from our data warehouse and siloed databases was an onerous task for our partners. Our data engineering team recognized we needed a solution to process and store our enormous swath of interconnected data while enabling fast querying to discover insights. Although we could have structured the data in various ways, we ultimately settled on a graph representation. We believe a graph offers key advantages, specifically:

- Relationship-Centric Queries: Graphs enable fast “hops” across multiple nodes and edges without the expensive joins or manual denormalization that would be required in table-based data models.

- Flexibility as Relationships Grow: As new connections and entities emerge, graphs can quickly adapt without significant schema changes or re-architecture.

- Pattern and Anomaly Detection: Our stakeholders’ use cases often require identifying hidden relationships, cycles, or groupings in the data. These capabilities are much more naturally expressed and efficiently executed using graph traversals than siloed point lookups.

This is why we set out to build a Real-Time Distributed Graph, or “RDG” for short.
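To make the idea of relationship-centric “hops” concrete, here is a minimal sketch in plain Python that models the smartphone, TV, and tablet scenario from earlier as a tiny in-memory graph. It is only an illustration, not how the RDG is implemented: the node identifiers, edge labels, and the `build_adjacency` and `within_hops` helpers are all hypothetical.

```python
from collections import defaultdict, deque

# Hypothetical node identifiers and edge labels, chosen only for illustration.
EDGES = [
    ("member:1", "logged_in_on", "device:phone"),
    ("member:1", "logged_in_on", "device:tv"),
    ("member:1", "logged_in_on", "device:tablet"),
    ("member:1", "watched", "title:stranger-things-episode"),
    ("member:1", "played", "game:stranger-things-1984"),
    ("member:2", "watched", "title:stranger-things-episode"),
]


def build_adjacency(edges):
    """Index edges in both directions so a traversal can hop either way."""
    adjacency = defaultdict(set)
    for source, _label, target in edges:
        adjacency[source].add(target)
        adjacency[target].add(source)
    return adjacency


def within_hops(adjacency, start, max_hops):
    """Breadth-first search: map each reachable node to its hop distance."""
    distances = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if distances[node] == max_hops:
            continue  # do not expand past the hop limit
        for neighbor in adjacency[node]:
            if neighbor not in distances:
                distances[neighbor] = distances[node] + 1
                queue.append(neighbor)
    distances.pop(start)  # the start node is not its own neighbor
    return distances


adjacency = build_adjacency(EDGES)
# Everything the member touched is a single hop away.
one_hop = within_hops(adjacency, "member:1", 1)
# Starting from the game, two hops reach the member and the episode they watched.
two_hops = within_hops(adjacency, "game:stranger-things-1984", 2)
```

In a table-based model, the second query would typically require joining game-play events to viewing events through the member. Here it is a bounded traversal from one node.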

Ingestion and Processing

Three main layers in the system power the RDG:

- Ingestion and Processing: receive events from disparate upstream data sources and use them to generate graph nodes and edges.

- Storage: write nodes and edges to persistent data stores.

- Serving: expose ways for internal clients to query graph nodes and edges.

The rest of this post will focus on the first layer, while subsequent posts in this blog series will cover the other layers. The diagram below depicts a high-level overview of the ingestion and processing pipeline:

Building and updating the RDG in real-time requires continuously processing vast volumes of incoming data. Batch processing systems and traditional data warehouses cannot offer the low latency needed to maintain an up-to-date graph that supports real-time applications. We opted for a stream processing architecture, enabling us to update the graph’s data as events happen, thus minimizing delay and ensuring the system reflects the latest member actions in the Netflix app.

Kafka as the Ingestion Backbone

Member actions in the Netflix app are published to our API Gateway, which then writes them as records to Apache Kafka topics. Kafka is the mechanism through which internal data applications can consume these events. It provides durable, replayable streams that downstream processors, such as Apache Flink jobs, can consume in real-time.

Our team’s applications consume several different Kafka topics, each producing up to roughly 1 million messages per second. Topic records are encoded in the Apache Avro format, and Avro schemas are persisted in an internal centralized schema registry. To strike a balance between maintaining data availability and managing the cost of storage infrastructure, we tailor retention policies for each topic according to its throughput and record size. We also persist topic records to Apache Iceberg data warehouse tables, which allows us to backfill data in scenarios where older data is no longer available in the Kafka topics.
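The retention tradeoff above comes down to simple arithmetic: the data a topic retains grows with throughput, record size, and retention time. The sketch below is a rough back-of-the-envelope estimate, not the sizing method Netflix uses. The 1 KB average record size, 6-hour retention, and replication factor of 3 are assumed values for illustration, and the estimate ignores compression and index overhead.

```python
SECONDS_PER_HOUR = 3600


def retained_bytes(messages_per_second, avg_record_bytes, retention_hours,
                   replication_factor=3):
    """Estimate the bytes a topic holds at steady state (uncompressed)."""
    return (messages_per_second * avg_record_bytes
            * retention_hours * SECONDS_PER_HOUR * replication_factor)


def max_retention_hours(storage_budget_bytes, messages_per_second,
                        avg_record_bytes, replication_factor=3):
    """Invert the estimate: how long can we retain data within a budget?"""
    bytes_per_hour = (messages_per_second * avg_record_bytes
                      * SECONDS_PER_HOUR * replication_factor)
    return storage_budget_bytes / bytes_per_hour


# Assumed inputs: 1 million messages/s (the upper bound quoted above),
# 1,000-byte records, 6 hours of retention, replication factor 3.
estimate = retained_bytes(1_000_000, 1_000, 6)
print(f"{estimate / 1e12:.1f} TB retained")  # 64.8 TB retained

# The same budget spent on a topic with records twice as large
# only buys half the retention.
hours = max_retention_hours(estimate, 1_000_000, 2_000)
print(f"{hours:.1f} hours")  # 3.0 hours
```

Under these assumptions, doubling either the throughput or the record size halves the retention a fixed storage budget can afford, which is why a single retention policy rarely fits every topic.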

Processing Data with Apache Flink

The event records in the Kafka streams are ingested by Flink jobs. We chose Flink because of its strong capabilities around near-real-time event processing. There is also robust internal platform support for Flink within Netflix, which allows jobs to integrate with Kafka and various storage backends seamlessly. At a high level, the anatomy of an RDG Flink job looks like this:

For the sake of simplicity, the diagram above depicts a basic flow in which a member logs into their Netflix account and begins watching an episode of Stranger Things. Reading the diagram from left to right:

- The actions of logging into the app and watching the Stranger Things episode are ultimately written as events to Kafka topics.

- The Flink job consumes event records from the upstream Kafka topics.

- Next, we have a series of Flink processor functions that:

  - Apply filtering and projections to remove noise based on the individual fields that are present (or, in some cases, not present) in the events.

  - Enrich events with additional metadata, which is stored and accessed by the processor functions via side inputs.

  - Transform events into graph primitives: nodes representing entities (e.g., member accounts and show/movie titles), and edges representing relationships or interactions between them. In this example, the diagram only shows a few nodes and an edge to keep things simple. In reality, we create and update up to a few dozen different nodes and edges, depending on the member actions that occurred within the Netflix app.

  - Buffer, detect, and deduplicate overlapping updates that occur to the same nodes and edges within a small, configurable time window. This step reduces the data throughput we publish downstream. It is implemented using stateful process functions and timers.

- Publish node and edge records to Data Mesh, an abstraction layer that connects data applications and storage systems. We write a total (nodes + edges) of more than 5 million records per second to Data Mesh, which handles persisting the records to various data stores that other internal services can query.
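The processing steps above can be sketched in plain Python to show how they fit together. This is a simplified stand-in, not Flink code and not the actual RDG job: the event fields, the `TITLE_METADATA` dictionary (standing in for a side input), and every function and class name are hypothetical. In particular, the buffer here checks its window only when the next record arrives, whereas a stateful Flink process function would rely on timers.

```python
from dataclasses import dataclass

# Hypothetical event fields; the real events are Avro records.
REQUIRED_FIELDS = ("member_id", "title_id", "timestamp_ms")
# Stands in for metadata that processor functions read via side inputs.
TITLE_METADATA = {"t-100": {"name": "Stranger Things", "kind": "show"}}


@dataclass(frozen=True)
class GraphRecord:
    kind: str          # "node" or "edge"
    key: str           # identity used for deduplication
    properties: tuple  # sorted (name, value) pairs


def keep(event):
    """Filter: drop events that lack any field we need."""
    return all(event.get(name) is not None for name in REQUIRED_FIELDS)


def project(event):
    """Projection: keep only the required fields."""
    return {name: event[name] for name in REQUIRED_FIELDS}


def enrich(event, title_metadata):
    """Enrichment: attach title metadata looked up by title_id."""
    return {**event, "title": title_metadata.get(event["title_id"], {})}


def to_graph_records(event):
    """Transform one event into two nodes and the edge between them."""
    member_key = f"member:{event['member_id']}"
    title_key = f"title:{event['title_id']}"
    return [
        GraphRecord("node", member_key, ()),
        GraphRecord("node", title_key, tuple(sorted(event["title"].items()))),
        GraphRecord("edge", f"{member_key}->watched->{title_key}",
                    (("last_seen_ms", event["timestamp_ms"]),)),
    ]


class DedupBuffer:
    """Keep the latest update per key; emit the batch once the window passes."""

    def __init__(self, window_ms):
        self.window_ms = window_ms
        self.window_start_ms = None
        self.pending = {}

    def add(self, record, now_ms):
        flushed = []
        expired = (self.window_start_ms is not None
                   and now_ms - self.window_start_ms >= self.window_ms)
        if expired:
            flushed = self.flush()
        if self.window_start_ms is None:
            self.window_start_ms = now_ms
        self.pending[record.key] = record  # a later update replaces an earlier one
        return flushed

    def flush(self):
        flushed = list(self.pending.values())
        self.pending.clear()
        self.window_start_ms = None
        return flushed


events = [
    {"member_id": "m-1", "title_id": "t-100", "timestamp_ms": 1_000, "ui_noise": "x"},
    {"member_id": "m-1", "title_id": "t-100", "timestamp_ms": 1_500},
    {"member_id": None, "title_id": "t-100", "timestamp_ms": 1_600},  # filtered out
]
buffer = DedupBuffer(window_ms=5_000)
published = []
for event in events:
    if not keep(event):
        continue
    for record in to_graph_records(enrich(project(event), TITLE_METADATA)):
        published.extend(buffer.add(record, now_ms=event["timestamp_ms"]))
published.extend(buffer.flush())  # drain whatever is still buffered
```

Without the buffer, the two valid events would produce six records. With it, the overlapping updates collapse into three (two nodes and one edge), and the edge carries the most recent timestamp.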

From One Job to Many: Scaling Flink the Hard Way

Initially, we tried having just one Flink job that consumed all the Kafka source topics. However, this quickly became a big operational headache, since different topics can have different data volumes and throughputs at different times during the day. Consequently, tuning the monolithic Flink job became extremely difficult: we struggled to find CPU, memory, job parallelism, and checkpointing interval configurations that ensured job stability.

Instead, we pivoted to a 1:1 mapping from each Kafka source topic to its consuming Flink job. Although this added operational overhead because there are more jobs to develop and deploy, each job has been much simpler to maintain, analyze, and tune.

Similarly, each node and edge type is written to a separate Kafka topic. This means we have significantly more Kafka topics to manage. However, we decided the tradeoff of having bespoke tuning and scaling per topic was worth it. We also designed the graph data model to be as generic and flexible as possible, so adding new types of nodes and edges would be an infrequent operation.
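One way to picture the 1:1 layout is as a small piece of configuration, where each source topic owns its tuning knobs and each node or edge type maps to its own output topic. The sketch below is purely illustrative: the topic names, the parallelism and checkpoint values, and the `rdg-` and `rdg.<kind>.<type>` naming schemes are assumptions, not the real Netflix setup.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JobConfig:
    source_topic: str
    parallelism: int
    checkpoint_interval_seconds: int


# Hypothetical topics and tuning values; each source topic gets exactly one job,
# so a high-volume topic can be tuned without affecting a quieter one.
JOBS = [
    JobConfig("playback-events", parallelism=256, checkpoint_interval_seconds=60),
    JobConfig("login-events", parallelism=32, checkpoint_interval_seconds=120),
]


def job_name(config):
    """Derive a job name from its single source topic (assumed naming scheme)."""
    return f"rdg-{config.source_topic}"


def output_topic(kind, type_name):
    """Route each node or edge type to its own topic (assumed naming scheme)."""
    if kind not in ("node", "edge"):
        raise ValueError(f"unknown record kind: {kind}")
    return f"rdg.{kind}.{type_name}"


def validate_one_to_one(jobs):
    """Reject configurations where two jobs consume the same source topic."""
    topics = [job.source_topic for job in jobs]
    duplicates = sorted({topic for topic in topics if topics.count(topic) > 1})
    if duplicates:
        raise ValueError(f"topics consumed by more than one job: {duplicates}")
    return {job.source_topic: job_name(job) for job in jobs}


topic_to_job = validate_one_to_one(JOBS)
watched_topic = output_topic("edge", "watched")
```

Keeping the mapping explicit like this makes the cost visible (one entry per topic) while letting each entry be tuned on its own.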

Acknowledgements

We would be remiss if we didn’t give a special shout-out to our stunning colleagues who work on the internal Netflix data platform. Building the RDG was a multi-year effort that required us to design novel solutions, and the investments and foundations from our platform teams were critical to its successful creation. You make the lives of Netflix data engineers much easier, and the RDG wouldn’t exist without your diligent collaboration!

—

Thanks for reading the first season of the RDG blog series; stay tuned for Season 2, where we will go over the storage layer containing the graph’s various nodes and edges.