By Alex Hutter and Bartosz Balukiewicz

Our previous blog posts (part 1, part 2, part 3) detailed how Netflix’s Graph Search platform addresses the challenges of searching across federated data sets within Netflix’s enterprise ecosystem. Although highly scalable and easy to configure, it still relies on a structured query language for input. Natural language based search has been possible for some time, but the level of effort required was high. The emergence of readily available AI, specifically Large Language Models (LLMs), has created new opportunities to integrate AI search features, with a smaller investment and improved accuracy.

While Text-to-Query and Text-to-SQL are established problems, the complexity of distributed Graph Search data in the GraphQL ecosystem necessitates innovative solutions. This is the first in a three-part series where we will detail our journey: how we implemented these solutions, evaluated their performance, and ultimately evolved them into a self-managed platform.

The Need for Intuitive Search: Addressing Business and Product Demands

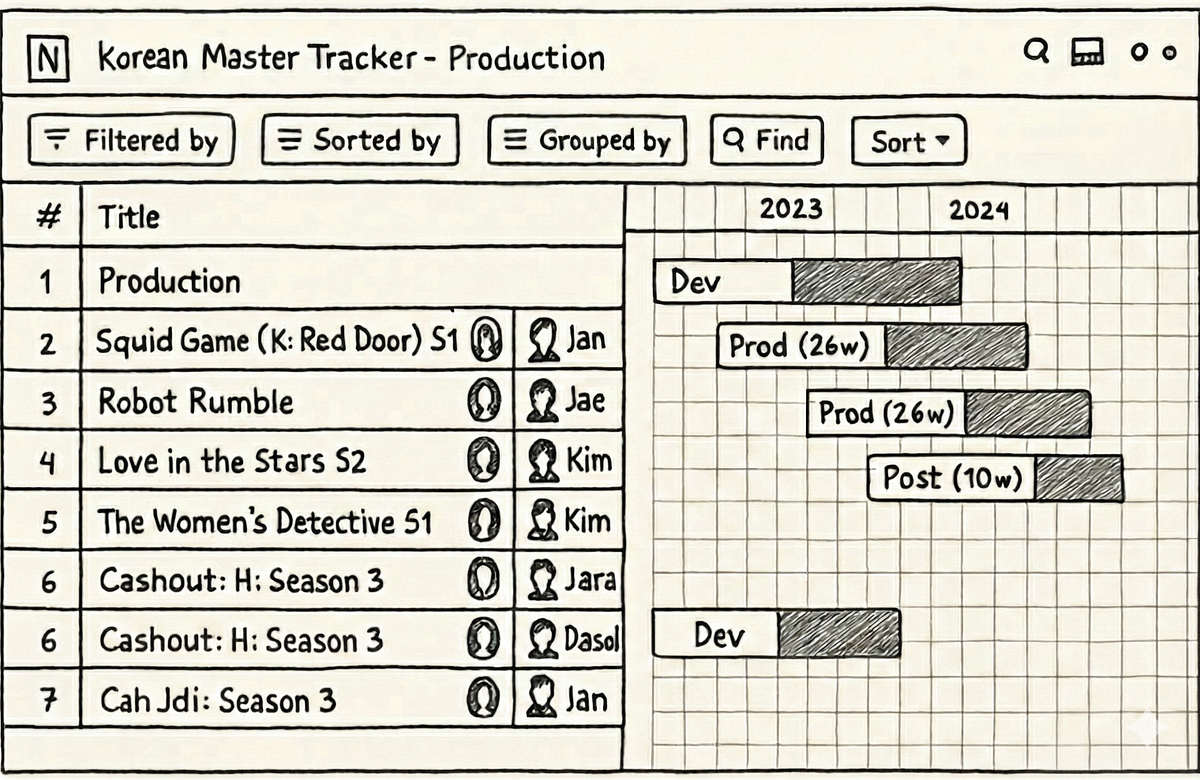

Natural language search is the ability to use everyday language to retrieve information, as opposed to complex, structured query languages like the Graph Search Filter Domain Specific Language (DSL). When users interact with hundreds of different UIs across the suite of Content and Business Products applications, a frequent task is filtering a data table like the one below:

Ideally, a user simply wants to satisfy a query like “I want to see all movies from the 90s about robots from the US.” Because the underlying platform operates on the Graph Search Filter DSL, the application acts as an intermediary. Users input their requirements through UI elements (toggling facets or using query builders) and the system programmatically converts these interactions into a valid DSL query to filter the data.

This process presents several issues.

Today, many applications have bespoke components for collecting user input; the experience varies across them and they have inconsistent support for the DSL. Users need to “learn” how to use each application to achieve their goals.

Additionally, some domains have hundreds of fields in an index that could be faceted or filtered by. A subject matter expert (SME) may know exactly what they want to accomplish, but be bottlenecked by the slow pace of filling out a large UI form and translating their questions into the representation Graph Search needs.

Most importantly, users think and operate using natural language, not technical constructs like query builders, components, or DSLs. By requiring them to switch contexts, we introduce friction that slows them down or even prevents their progress.

With readily available AI components, our users can now interact with our systems through natural language. The challenge now is to make sure our offering, searching Netflix’s complex enterprise state with natural language, is an intuitive and trustworthy experience.

We decided to pursue generating Graph Search Filter statements from natural language to meet this need. Our intention is to augment, not replace, existing applications with retrieval augmented generation (RAG), providing tooling and capabilities so that applications in our ecosystem have newly accessible means of processing and presenting their data in their distinct domain flavours. It should be noted that all the work here has direct application to building a RAG system on top of Graph Search in the future.

Under the Hood: Our Approach to Text-to-Query

The core function of the text-to-query process is converting a user’s (often ambiguous) natural language question into a structured query. We primarily achieve this through the use of an LLM.

Before we dive deeper, let’s quickly revisit the structure of the Graph Search Filter DSL. Each Graph Search index is defined by a GraphQL query, made up of a collection of fields. Each field has a type (e.g. boolean, string), and some have their permitted values governed by controlled vocabularies: standardized and governed lists of values (like an enumeration, or a foreign key). The names of those fields can be used to construct expressions using comparison operators (e.g. > or ==) or inclusion/exclusion operators (e.g. IN). In turn, these expressions can be combined using logical operators (e.g. AND) to construct complex statements.

With that understanding, we can now define the conversion process more rigorously. We need the LLM to generate a Graph Search Filter DSL statement that is syntactically, semantically, and pragmatically correct.

Syntactic correctness is easy: does it parse? To be syntactically correct, the generated statement must be well formed, i.e. follow the grammar of the Graph Search Filter DSL.

Semantic correctness adds some additional complexity as it requires more knowledge of the index itself. To be semantically correct:

- it must respect the field types, i.e. only use comparisons that make sense given the underlying type;

- it must only use fields that are actually present in the index, i.e. it does not hallucinate;

- when the values of a field are constrained to a controlled vocabulary, any comparison must only use values from that controlled vocabulary.

Pragmatic correctness is much more difficult. It asks the question: does the generated filter actually capture the intent of the user’s query?

The following sections detail how we pre-process the user’s question to create appropriate context for the instructions that we provide to the LLM (both of which are fundamental to LLM interaction), as well as the post-processing we perform on the generated statement to validate it and to help users understand and trust the results they receive.

At a high level that process looks like this:

Context Engineering

Preparation for the filter generation task is predominantly engineering the appropriate context. The LLM needs access to the fields of an index and their metadata in order to construct semantically correct filters. As the indices are defined by GraphQL queries, we can use the type information from the GraphQL schema to derive much of the required information. For some fields, there is additional information we can provide beyond what’s available in the schema, in particular permissible values that pull from controlled vocabularies.

Each field in the index is associated with metadata as seen below, and that metadata is provided as part of the context.

- The field is derived from the document path as characterized by the GraphQL query.

- The description is the comment from the GraphQL schema for the field.

- The type is derived from the GraphQL schema for the field, e.g. Boolean, String, enum. We also support an additional controlled vocabulary type, which we’ll discuss shortly.

- The valid values are derived from enum values for the enum type or from a controlled vocabulary, as we’ll now discuss.

A controlled vocabulary is a specific field type that consists of a finite set of allowed values, which are defined by SMEs or domain owners. Index fields can be associated with a particular controlled vocabulary, e.g. countries with members such as Spain and Thailand, and any usage of that field within a generated statement must refer to values from that vocabulary.
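As a rough illustration of the per-field metadata described above, the sketch below models one field as a small record and renders a list of them as plain text for a prompt. The class name, the attribute names, the example fields (`releaseYear`, `origin.country`) and the text layout are all assumptions made for illustration, not the platform's actual schema or prompt format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldMetadata:
    """Metadata for one index field, as it might be supplied in the LLM context."""
    name: str                           # document path in the GraphQL query
    description: str                    # comment from the GraphQL schema
    type: str                           # e.g. "Boolean", "String", "enum", "controlled_vocabulary"
    valid_values: tuple[str, ...] = ()  # enum members or controlled vocabulary values


def render_field_context(fields: list[FieldMetadata]) -> str:
    """Render field metadata as one text line per field for inclusion in a prompt."""
    lines = []
    for f in fields:
        line = f"- {f.name} ({f.type}): {f.description}"
        if f.valid_values:
            line += f" Valid values: {', '.join(f.valid_values)}."
        lines.append(line)
    return "\n".join(lines)


# Hypothetical example fields.
fields = [
    FieldMetadata("releaseYear", "Year the title was first released.", "Int"),
    FieldMetadata("origin.country", "Country of origin.", "controlled_vocabulary",
                  ("Spain", "Thailand")),
]
print(render_field_context(fields))
```

Keeping the valid values next to the field they constrain is one simple way to give the model something concrete to ground on.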

Naively providing all the metadata as context to the LLM worked for simple cases but did not scale. Some indices have hundreds of fields and some controlled vocabularies have thousands of valid values. Providing all of those, especially the controlled vocabulary values and their accompanying metadata, expands the context; this proportionally increases latency and decreases the correctness of generated filter statements. Not providing the values wasn’t an option, as we needed to ground the LLM’s generated statements; without them, the LLM would frequently hallucinate values that did not exist.

Curating the context to an appropriate subset was a problem we addressed using the well-known RAG pattern.

Field RAG

As mentioned previously, some indices have hundreds of fields; however, most users’ questions typically refer to only a handful of them. If there were no cost to including them all, we would, but as mentioned earlier, there is a cost in terms of the latency of query generation as well as the correctness of the generated query (e.g. the needle-in-the-haystack problem) and non-deterministic results.

To determine which subset of fields to include in the context, we “match” them against the intent of the user’s question.

- Embeddings are created for index fields and their metadata (name, description, type) and are indexed in a vector store.

- At filter generation time, the user’s question is chunked with an overlapping strategy. For each chunk, we perform a vector search to identify the top K most relevant values and the fields to which they belong.

- Deduplication: The top K fields from each chunk are consolidated and deduplicated before being provided as context to the system instructions.
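The three steps above can be sketched in a few lines. This is only a toy: a bag-of-words count stands in for a real embedding model, an in-memory dictionary stands in for the vector store, and the field names, descriptions, window size, overlap and score threshold are all made up for illustration.

```python
import math
import re
from collections import Counter

# Tiny stop-word list so that filler words do not dominate the toy similarity.
STOPWORDS = {"a", "about", "all", "e", "from", "g", "in", "of", "or", "the"}


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words term count."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return Counter(w for w in words if w not in STOPWORDS)


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values()) * sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def chunk(question: str, size: int = 4, overlap: int = 2) -> list[str]:
    """Split a question into overlapping windows of `size` words."""
    words = question.split()
    starts = range(0, max(len(words) - overlap, 1), size - overlap)
    return [" ".join(words[i:i + size]) for i in starts]


def select_fields(question, field_index, k=2, min_score=0.1):
    """Return deduplicated field names matched by any chunk, in first-seen order."""
    selected = []
    for piece in chunk(question):
        query = embed(piece)
        scored = sorted(((cosine(query, vec), name) for name, vec in field_index.items()),
                        reverse=True)
        for score, name in scored[:k]:          # top K per chunk
            if score >= min_score and name not in selected:
                selected.append(name)           # consolidate and deduplicate
    return selected


# Hypothetical index fields and descriptions.
FIELDS = {
    "releaseYear": "release year of the title, e.g. 1995 or the 90s",
    "originCountry": "country where the title was produced, e.g. US",
    "synopsisKeywords": "keywords about the plot, e.g. robots",
    "runtimeMinutes": "length of the title in minutes",
}
field_index = {name: embed(f"{name} {desc}") for name, desc in FIELDS.items()}
print(select_fields("all movies from the 90s about robots from the US", field_index))
```

In this toy run the unrelated `runtimeMinutes` field is left out of the context, which is the point of the step: only fields that some chunk of the question plausibly refers to are passed on.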

Controlled Vocabularies RAG

Index fields of the controlled vocabulary type are associated with a particular controlled vocabulary; again, countries are one example. Given a user’s question, we can infer whether or not it refers to values of a particular controlled vocabulary. In turn, by knowing which controlled vocabulary values are present, we can identify additional, related index fields that should be included in the context and that may not have been identified by the field RAG step.

Each controlled vocabulary value has:

- a unique identifier within its type;

- a human readable display name;

- a description of the value;

- also-known-as values or AKA display names, e.g. “romcom” for “Romantic Comedy”.

To determine which subset of values to include in the context for controlled vocabulary fields (and also possibly infer additional fields), we “match” them against the user’s question.

- Embeddings are created for controlled vocabulary values and their metadata, and these are indexed in a vector store. The controlled vocabularies are available via GraphQL and are regularly fetched and reindexed so this system stays up to date with any changes in the domain.

- At filter generation time, the user’s question is chunked. For each chunk, we perform a vector search to identify the top K most relevant values (but only for the controlled vocabularies that are associated with fields in the index).

- The top K values from each chunk are deduplicated by their controlled vocabulary type. The matching field definition is then injected into the context together with the matched values.
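A minimal sketch of the value-matching steps above, under heavy simplification: word overlap with a value's display name or AKA names stands in for vector similarity, and the vocabulary values, the `field_by_vocab` mapping and all parameter defaults are hypothetical.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class VocabValue:
    vocab: str                  # controlled vocabulary type, e.g. "Genre"
    id: str                     # unique identifier within its type
    display_name: str           # human readable name
    description: str = ""
    aka: tuple[str, ...] = ()   # also-known-as names


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def similarity(chunk: str, value: VocabValue) -> float:
    """Toy stand-in for vector similarity: share of a name's words found in the chunk."""
    chunk_tokens = tokens(chunk)
    scores = [0.0]
    for name in (value.display_name, *value.aka):
        name_tokens = tokens(name)
        if name_tokens:
            scores.append(len(chunk_tokens & name_tokens) / len(name_tokens))
    return max(scores)


def match_values(question, values, field_by_vocab, k=3, size=4, overlap=2):
    """Return {field name: [matched display names]} for vocabularies used by the index."""
    words = question.split()
    starts = range(0, max(len(words) - overlap, 1), size - overlap)
    # Only search vocabularies that are associated with a field in this index.
    candidates = [v for v in values if v.vocab in field_by_vocab]
    matched: dict[str, list[str]] = {}
    for start in starts:
        chunk = " ".join(words[start:start + size])
        scored = sorted(((similarity(chunk, v), v) for v in candidates),
                        key=lambda pair: pair[0], reverse=True)
        for score, value in scored[:k]:
            if score == 0:
                continue
            names = matched.setdefault(field_by_vocab[value.vocab], [])
            if value.display_name not in names:   # deduplicate per vocabulary field
                names.append(value.display_name)
    return matched


# Hypothetical vocabularies; "Language" is not used by this index and is ignored.
values = [
    VocabValue("Genre", "g1", "Romantic Comedy", aka=("romcom",)),
    VocabValue("Genre", "g2", "Science Fiction", aka=("sci-fi",)),
    VocabValue("Country", "c1", "Spain"),
    VocabValue("Country", "c2", "Thailand"),
    VocabValue("Language", "l1", "Spanish"),
]
field_by_vocab = {"Genre": "genre", "Country": "originCountry"}
print(match_values("show me every romcom produced in Spain", values, field_by_vocab))
```

Note how the AKA name “romcom” pulls in the `genre` field even though the question never mentions genres, which mirrors the idea of inferring additional fields from matched values.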

Combining both approaches, the RAG of fields and of controlled vocabularies, we end up with a solution in which each input question resolves into the available, matched fields and values:

The quality of results generated by the RAG tool can be significantly enhanced by tuning its various parameters, or “levers.” These include strategies for reranking, chunking, and the selection of different embedding generation models. The careful and systematic evaluation of these factors will be the focus of the subsequent parts of this series.

The Instructions

Once the context is constructed, it is provided to the LLM with a set of instructions and the user’s question. The instructions can be summarised as follows: “Given a natural language question, generate a syntactically, semantically, and pragmatically correct filter statement given the availability of the following index fields and their metadata.”

- In order to generate a syntactically correct filter statement, the instructions include the syntax rules of the DSL.

- In order to generate a semantically correct filter statement, the instructions tell the LLM to ground the generated statement in the provided context.

- In order to generate a pragmatically correct filter statement, so far we focus on better context engineering to ensure that only the most relevant fields and values are provided. We haven’t identified any instructions that make the LLM just “do better” at this aspect of the task.
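To make the shape of such instructions concrete, here is a hedged sketch of assembling the prompt. The summary sentence comes from the text above; everything else is an assumption: the syntax rules are placeholders rather than the real Graph Search Filter DSL grammar, and the system/user message layout is just the common chat-style convention, not a confirmed detail of the implementation.

```python
# Placeholder syntax rules; the real DSL grammar is not reproduced here.
SYNTAX_RULES = """\
- Comparison operators: ==, !=, >, >=, <, <=
- Inclusion operators: IN, NOT IN
- Logical operators: AND, OR; use parentheses for grouping
- String literals are single-quoted"""


def build_messages(question: str, field_context: str) -> list[dict[str, str]]:
    """Combine instructions, curated context and the user's question."""
    system = (
        "Given a natural language question, generate a syntactically, semantically, "
        "and pragmatically correct filter statement given the availability of the "
        "following index fields and their metadata.\n\n"
        f"Syntax rules:\n{SYNTAX_RULES}\n\n"
        # Grounding instruction: keep the model inside the provided context.
        "Only use fields and values that appear in the context below.\n\n"
        f"Index fields:\n{field_context}"
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": question},
    ]


messages = build_messages(
    "all movies from the 90s about robots from the US",
    "- releaseYear (Int): Year the title was first released.",
)
print(messages[0]["content"])
```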

After the filter statement is generated by the LLM, we deterministically validate it prior to returning the values to the user.

Validation

Syntactic Correctness

Syntactic correctness ensures the LLM output is a parsable filter statement. We use an Abstract Syntax Tree (AST) parser built for our custom DSL. If the generated string fails to parse into a valid AST, we know immediately that the query is malformed and that there is a fundamental issue with the generation.

Another approach to solving this problem could be using the structured output modes provided by some LLMs. However, our initial evaluation yielded mixed results, as the custom DSL is not natively supported and requires further work.
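The parse-or-reject check can be illustrated with a small recursive-descent parser. The grammar below is a toy subset invented for this sketch (comparisons, `CONTAINS`, `LIKE`, `AND`, `OR`, parentheses, single-quoted strings and integers); it is not the actual Graph Search Filter DSL grammar or its parser, and `IN` lists are omitted to keep it short.

```python
import re
from collections import namedtuple

TOKEN = re.compile(r"""\s*(?:
    (?P<op>==|!=|>=|<=|>|<)
  | (?P<paren>[()])
  | (?P<string>'[^']*')
  | (?P<number>\d+)
  | (?P<word>[A-Za-z_][\w.]*)
)""", re.VERBOSE)
WORD_OPS = {"CONTAINS", "LIKE"}                          # operators spelled as words
Comparison = namedtuple("Comparison", "field op value")  # leaf: field <op> literal
Logical = namedtuple("Logical", "op left right")         # op is "AND" or "OR"


class FilterSyntaxError(ValueError):
    """Raised when a statement does not follow the toy grammar."""


def tokenize(text):
    text, pos, tokens = text.rstrip(), 0, []
    while pos < len(text):
        match = TOKEN.match(text, pos)
        if match is None:
            raise FilterSyntaxError(f"unexpected input at position {pos}")
        tokens.append((match.lastgroup, match.group(match.lastgroup)))
        pos = match.end()
    return tokens


class Parser:
    def __init__(self, tokens):
        self.tokens, self.i = tokens, 0

    def peek(self):
        return self.tokens[self.i] if self.i < len(self.tokens) else (None, None)

    def take(self):
        token = self.peek()
        if token[0] is None:
            raise FilterSyntaxError("unexpected end of input")
        self.i += 1
        return token

    def parse_or(self):  # or_expr := and_expr ("OR" and_expr)*
        node = self.parse_and()
        while self.peek() == ("word", "OR"):
            self.take()
            node = Logical("OR", node, self.parse_and())
        return node

    def parse_and(self):  # and_expr := atom ("AND" atom)*
        node = self.parse_atom()
        while self.peek() == ("word", "AND"):
            self.take()
            node = Logical("AND", node, self.parse_atom())
        return node

    def parse_atom(self):  # atom := "(" or_expr ")" | field op literal
        kind, text = self.take()
        if (kind, text) == ("paren", "("):
            node = self.parse_or()
            if self.take() != ("paren", ")"):
                raise FilterSyntaxError("expected ')'")
            return node
        if kind != "word" or text in WORD_OPS | {"AND", "OR"}:
            raise FilterSyntaxError(f"expected a field name, got {text!r}")
        op_kind, op = self.take()
        if op_kind != "op" and op not in WORD_OPS:
            raise FilterSyntaxError(f"expected an operator, got {op!r}")
        value_kind, value = self.take()
        if value_kind == "string":
            return Comparison(text, op, value[1:-1])   # strip the quotes
        if value_kind == "number":
            return Comparison(text, op, int(value))
        raise FilterSyntaxError(f"expected a literal, got {value!r}")


def parse_filter(text):
    """Return the AST for `text`, or raise FilterSyntaxError if it is malformed."""
    parser = Parser(tokenize(text))
    node = parser.parse_or()
    if parser.peek()[0] is not None:
        raise FilterSyntaxError(f"unexpected token {parser.peek()[1]!r}")
    return node


print(parse_filter("origin.country == 'Germany' AND releaseYear >= 1990"))
```

A statement such as `origin.country == AND` raises `FilterSyntaxError`, which is the signal that the generation itself went wrong.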

Semantic Correctness

Despite careful context engineering using the RAG pattern, the LLM sometimes hallucinates both fields and available values in the generated filter statement. The most straightforward way of preventing this phenomenon is validating the generated filters against available index metadata. This approach does not impact the overall latency of the system, as we are already working with an AST of the filter statement, and the metadata is freely available from the context engineering stage.
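A sketch of what validating an AST against index metadata might look like, covering the three semantic rules listed earlier (known fields, type-appropriate operators, vocabulary-constrained values). The AST node classes, the `FieldInfo` record, the operator table and the example index are assumptions for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Comparison:
    field: str
    op: str
    value: object


@dataclass(frozen=True)
class Logical:
    op: str
    left: object
    right: object


@dataclass(frozen=True)
class FieldInfo:
    type: str                               # "Int", "String", "Boolean", ...
    valid_values: frozenset = frozenset()   # non-empty for enums / controlled vocabularies


# Hypothetical mapping from field type to the operators that make sense for it.
ALLOWED_OPS = {
    "Int": {"==", "!=", ">", ">=", "<", "<="},
    "String": {"==", "!=", "LIKE", "CONTAINS"},
    "Boolean": {"==", "!="},
}


def comparisons(node):
    """Yield every Comparison leaf in the AST."""
    if isinstance(node, Comparison):
        yield node
    else:
        yield from comparisons(node.left)
        yield from comparisons(node.right)


def validate(ast, index):
    """Return a list of human-readable problems; an empty list means the filter is grounded."""
    errors = []
    for c in comparisons(ast):
        info = index.get(c.field)
        if info is None:
            errors.append(f"unknown field: {c.field}")
            continue
        if c.op not in ALLOWED_OPS.get(info.type, set()):
            errors.append(f"operator {c.op} is not valid for {c.field} ({info.type})")
        if info.valid_values and c.value not in info.valid_values:
            errors.append(f"value {c.value!r} is not in the vocabulary of {c.field}")
    return errors


index = {
    "releaseYear": FieldInfo("Int"),
    "origin.country": FieldInfo("String", frozenset({"Germany", "Spain"})),
}
ast = Logical("AND",
              Comparison("origin.country", "==", "Atlantis"),   # hallucinated value
              Comparison("directorMood", ">", 3))               # hallucinated field
print(validate(ast, index))
```

Returning messages rather than a boolean keeps the same result usable both as a user-facing error and as feedback for another generation attempt.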

If a hallucination is detected, it can be returned as an error to the user, indicating the need to refine the query, or it can be provided back to the LLM in the form of a feedback loop for self-correction.

The feedback loop increases the filter generation time, so it should be used cautiously, with a limited number of retries.
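The bounded feedback loop can be sketched as follows. The `generate` and `validate` callables are stand-ins: `fake_llm` simply pretends to correct itself once it receives feedback, and the retry limit of 2 is an arbitrary choice for the example.

```python
from typing import Callable


def generate_with_retries(
    question: str,
    generate: Callable[[str, list[str]], str],
    validate: Callable[[str], list[str]],
    max_retries: int = 2,
) -> str:
    """Regenerate with validation feedback, giving up after `max_retries` retries."""
    feedback: list[str] = []
    for _ in range(max_retries + 1):        # one initial attempt plus the retries
        statement = generate(question, feedback)
        errors = validate(statement)
        if not errors:
            return statement
        feedback = errors                   # hand the problems back for self-correction
    raise ValueError("could not generate a valid filter: " + "; ".join(feedback))


def fake_llm(question: str, feedback: list[str]) -> str:
    # Stand-in for a real LLM call: hallucinates a field until it sees feedback.
    return "origin.country == 'Germany'" if feedback else "origin.nation == 'Germany'"


def fake_validate(statement: str) -> list[str]:
    # Stand-in for the deterministic syntactic and semantic checks.
    return [] if statement.startswith("origin.country") else ["unknown field: origin.nation"]


print(generate_with_retries("German titles", fake_llm, fake_validate))
```

With `max_retries=0` the same call raises `ValueError`, which corresponds to returning the error to the user instead of retrying.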

Building Confidence

You probably noticed we are not validating the generated filter for pragmatic correctness. That task is the hardest challenge: the filter parses (syntactic) and uses real fields (semantic), but is it what the user meant? When a user searches for “Dark”, do they mean the specific German sci-fi series Dark, or are they browsing for the mood category “dark TV shows”?

The gap between what a user intended and the generated filter statement is often caused by ambiguity. Ambiguity stems from the compression of natural language. A user says “German time-travel mystery with the missing boy and the cave”, but the index contains discrete metadata fields like releaseYear, genreTags, and synopsisKeywords.

How do we ensure users aren’t inadvertently led to wrong answers or to answers for questions they didn’t ask?

Showing Our Work

One way we handle ambiguity is by showing our work. We visualise the generated filters in the UI in a user-friendly way, allowing users to see very clearly whether the answer we’re returning is what they were looking for, so they can trust the results.

We cannot show a raw DSL string (e.g., origin.country == ‘Germany’ AND genre.tags CONTAINS ‘Time Travel’ AND synopsisKeywords LIKE ‘*cave*’) to a non-technical user. Instead, we reflect its underlying AST into UI components.

After the LLM generates a filter statement, we parse it into an AST, and then map that AST to the existing “Chips” and “Facets” in our UI (see below). If the LLM generates a filter for origin.country == ‘Germany’, the user sees the “Country” dropdown pre-selected to “Germany.” This gives users immediate visual feedback and the ability to easily fine-tune the query using standard UI controls when the results need improvement or further experimentation.
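As an illustration of reflecting an AST into UI components, the sketch below flattens a JSON-like AST into a list of chip descriptions. The AST shape, the `FIELD_LABELS` mapping and the chip dictionary layout are invented for this example; a real UI would also need a strategy for `OR` groups, which this sketch deliberately rejects.

```python
# Hypothetical mapping from index field to the label shown in the UI.
FIELD_LABELS = {
    "origin.country": "Country",
    "genre.tags": "Genre",
    "synopsisKeywords": "Synopsis keywords",
}


def to_chips(node: dict) -> list[dict]:
    """Flatten a conjunction of comparisons into one chip per comparison."""
    if "args" in node:                      # logical node: {"op": ..., "args": [...]}
        if node["op"] != "AND":
            raise NotImplementedError("this sketch only flattens AND")
        return [chip for arg in node["args"] for chip in to_chips(arg)]
    # comparison node: {"field": ..., "op": ..., "value": ...}
    return [{
        "label": FIELD_LABELS.get(node["field"], node["field"]),
        "operator": node["op"],
        "value": node["value"],
    }]


ast = {
    "op": "AND",
    "args": [
        {"field": "origin.country", "op": "==", "value": "Germany"},
        {"field": "genre.tags", "op": "CONTAINS", "value": "Time Travel"},
    ],
}
for chip in to_chips(ast):
    print(chip)
```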

Explicit Entity Selection

Another strategy we’ve developed to remove ambiguity happens at query time. We give users the ability to constrain their input to refer to known entities using “@mentions”. Similar to Slack, typing @ lets them search for entities directly from our specialized UI Graph Search component, giving them easy access to multiple controlled vocabularies (plus other identifying metadata like release year) so they can feel confident they’re choosing the entity they intend.

If a user types, “When was @dark produced”, we explicitly know they are referring to the Series controlled vocabulary, allowing us to bypass the RAG inference step and hard-code that context, significantly increasing pragmatic correctness (and building user trust in the process).
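A small sketch of how mentions could be pulled out of the question before the RAG step. The regular expression, the `KNOWN_ENTITIES` lookup and the entity record (including the `series:dark` identifier) are hypothetical; in practice the mention picker in the UI would already have resolved the entity.

```python
import re

MENTION = re.compile(r"@(\w+)")

# Hypothetical lookup of entities the mention picker has already resolved.
KNOWN_ENTITIES = {
    "dark": {"vocab": "Series", "id": "series:dark", "display_name": "Dark"},
}


def resolve_mentions(question: str) -> tuple[str, list[dict]]:
    """Replace known @mentions with display names and return the pinned entities."""
    pinned = []

    def replace(match: re.Match) -> str:
        entity = KNOWN_ENTITIES.get(match.group(1).lower())
        if entity is None:
            return match.group(0)           # leave unknown mentions untouched
        pinned.append(entity)
        return entity["display_name"]

    return MENTION.sub(replace, question), pinned


text, pinned = resolve_mentions("When was @dark produced")
print(text)      # the question with the mention replaced by its display name
print(pinned)    # entities whose vocabulary context can be added directly
```

The pinned entities can then be added to the context as-is, so the similarity search only has to handle the parts of the question that were not explicitly selected.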

End-to-end architecture

As mentioned previously, the solution architecture is divided into pre-processing, filter statement generation, and post-processing stages. The pre-processing stage handles context building and involves a RAG pattern for similarity search, while the post-processing validation stage checks the correctness of the LLM-generated filter statements and provides visibility into the results for end users. This design strategically balances LLM involvement with more deterministic strategies.

The end-to-end process is as follows:

- A user’s natural language question (with optional `@mentions` statements) is provided as input, along with the Graph Search index context

- The context is scoped by using the RAG pattern on both fields and possible values

- The pre-processed context and the question are fed into the LLM with an instruction asking for a syntactically and semantically correct filter statement

- The generated filter statement DSL is verified and checked for hallucinations

- The final response contains the related AST in order to build “Chips” and “Facets”

Summary

By combining our existing Graph Search infrastructure with the power and flexibility of LLMs, we’ve bridged the gap between complex filter statements and user intent. We moved from requiring users to speak our language (DSL) to our systems understanding theirs.

The initial challenge for our users was successfully addressed. However, our next steps involve transforming this system into a comprehensive and expandable platform, rigorously evaluating its performance in a live production environment, and expanding its capabilities to support GraphQL-first user interfaces. These topics, and others, will be the focus of the subsequent installments in this series. Be sure to follow along!

You may have noticed that we have a lot more to do on this project, including named entity recognition and extraction, intent detection so we can route questions to the appropriate indices, and query rewriting, among others. If this kind of work interests you, reach out! We’re hiring in our Warsaw office; check for open roles here.

Credits

Special thanks to Alejandro Quesada, Yevgeniya Li, Dmytro Kyrii, Razvan-Gabriel Gatea, Orif Milod, Michal Krol, Jeff Balis, Charles Zhao, Shilpa Motukuri, Shervine Amidi, Alex Borysov, Mike Azar, Bernardo Gomez Palacio, Haoyuan He, Eduardo Ramirez, Cynthia Xie.