Avneesh Saluja, Santiago Castro, Bowei Yan, Ashish Rastogi

Introduction

Netflix’s core mission is to connect millions of members around the world with stories they’ll love. This requires not just an incredible catalog, but also a deep, machine-level understanding of every piece of content in that catalog, from the biggest blockbusters to the most niche documentaries. As we onboard new types of content such as live events and podcasts, the need to scalably understand these nuances becomes even more critical to our productions and member-facing experiences.

Many of these media-related tasks require sophisticated long-form video understanding, e.g., identifying subtle narrative dependencies and emotional arcs that span entire episodes or films. Previous work has found that to truly grasp the content’s essence, our models must leverage the full multimodal signal. For example, the audio soundtrack is a crucial, non-visual modality that can help more precisely identify clip-level tones or when a new scene starts. Can we use our collection of shows and movies to learn how to a) fuse modalities like audio, video, and subtitle text together and b) develop robust representations that leverage the narrative structure that’s present in long-form entertainment? Consisting of tens of millions of individual shots across multiple titles, our diverse yet entertainment-specific dataset provides the perfect foundation to train multimodal media understanding models that enable many capabilities across the company such as ads relevancy, clip popularity prediction, and clip tagging.

For these reasons, we developed the Netflix Media Foundational Model (MediaFM), our new, in-house, multimodal content embedding model. MediaFM is the first tri-modal (audio, video, text) model pretrained on portions of the Netflix catalog. Its core is a multimodal, Transformer-based encoder designed to generate rich, contextual embeddings¹ for shots from our catalog by learning the temporal relationships between them through integrating visual, audio, and textual information. The resulting shot-level embeddings are powerful representations designed to create a deeper, more nuanced, and machine-readable understanding of our content, providing the critical backbone for effective cold start of newly launching titles in recommendations, optimized promotional assets (like art and trailers), and internal content analysis tools.

Input Representation & Preprocessing

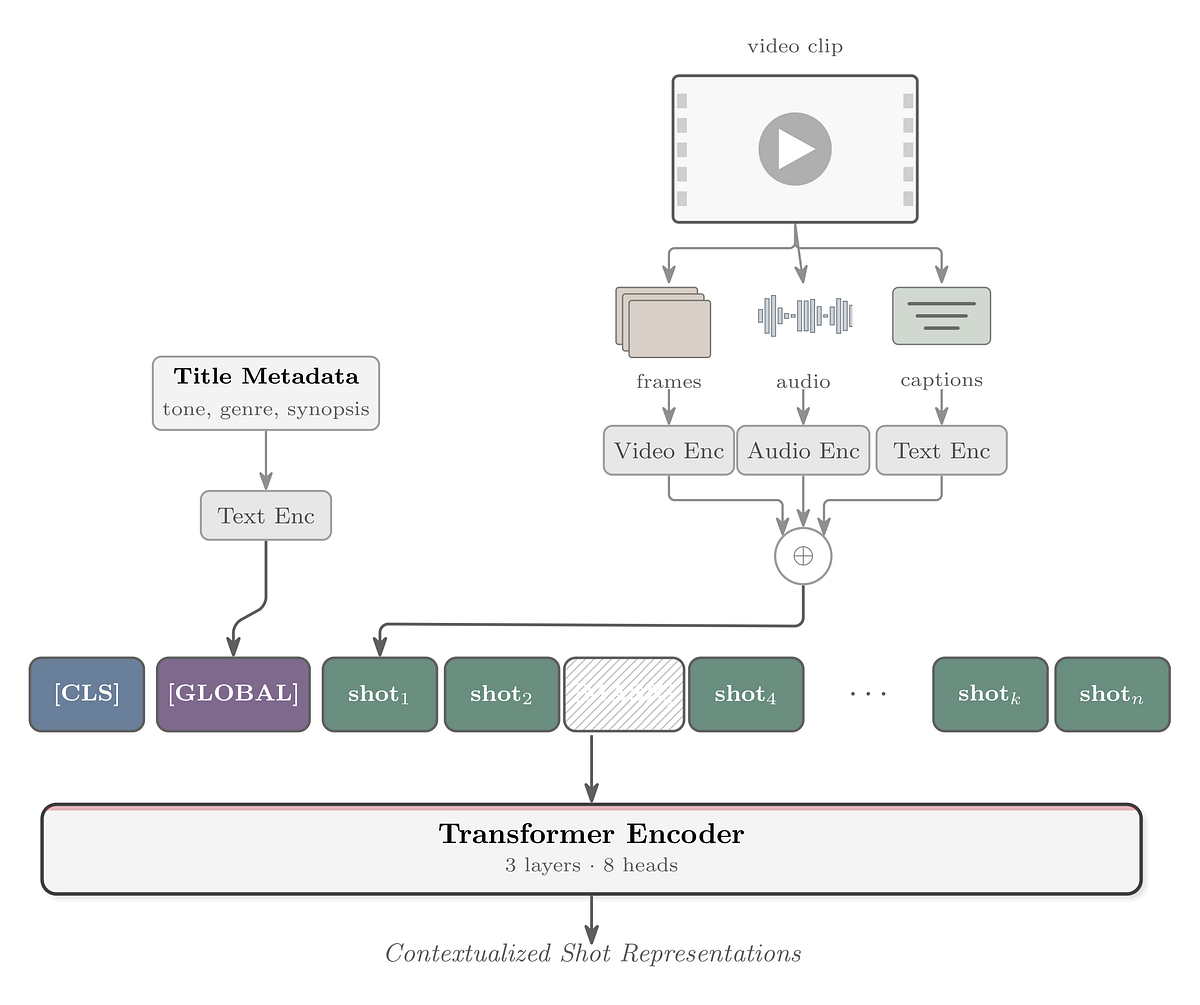

The model’s fundamental unit of input is a shot, derived by segmenting a movie or episode (collectively referred to as “title”) using a shot boundary detection algorithm. For each shot, we generate three distinct embeddings from its core modalities:

- Video: an internal model called SeqCLIP (a CLIP-style model fine-tuned on video retrieval datasets) is used to embed frames sampled at uniform intervals from segmented shots

- Audio: the audio samples from the same shots are embedded using Meta FAIR’s wav2vec2

- Timed Text: OpenAI’s text-embedding-3-large model is used to encode the corresponding timed text (e.g., closed captions, audio descriptions, or subtitles) for each shot

For each shot, the three embeddings² are concatenated and unit-normed to form a single 2304-dimensional fused embedding vector. The transformer encoder is trained on sequences of shots, so each example in our dataset is a temporally-ordered sequence of these fused embeddings from the same movie or episode (up to 512 shots per sequence). We also have access to title-level metadata which is used to provide global context for each sequence (via the [GLOBAL] token). The title-level embedding is computed by passing title-level metadata (such as synopses and tags) through the text-embedding-3-large model.
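The fusion step can be illustrated with a minimal sketch. The per-modality sizes below (768 each) are an assumption chosen only so that they sum to the stated 2304; the post does not give the individual dimensions. Splitting a long title into non-overlapping chunks of at most 512 shots is likewise one plausible reading, not a confirmed detail.

```python
import math

# Hypothetical per-modality sizes; only the fused size (2304) is stated in the post.
VIDEO_DIM = AUDIO_DIM = TEXT_DIM = 768
FUSED_DIM = VIDEO_DIM + AUDIO_DIM + TEXT_DIM  # 2304
MAX_SHOTS = 512  # maximum shots per sequence, as stated in the post


def fuse_shot(video, audio, text=None):
    """Concatenate the three modality embeddings and scale to unit L2 norm.

    A missing timed-text embedding is replaced by zeros (see footnote 2).
    """
    if text is None:
        text = [0.0] * TEXT_DIM
    fused = list(video) + list(audio) + list(text)
    if len(fused) != FUSED_DIM:
        raise ValueError(f"expected {FUSED_DIM} values, got {len(fused)}")
    norm = math.sqrt(sum(x * x for x in fused))
    # Guard against an all-zero vector so we never divide by zero.
    return [x / norm for x in fused] if norm > 0 else fused


def chunk_title(fused_shots, max_shots=MAX_SHOTS):
    """Split temporally ordered shots into sequences of at most max_shots.

    Non-overlapping chunking is an assumption made for this sketch.
    """
    return [fused_shots[i:i + max_shots]
            for i in range(0, len(fused_shots), max_shots)]
```

Normalizing after concatenation, as the text describes, means the three modalities share a single unit-length budget, so a zero-padded text slot leaves the audio and video components carrying the whole norm.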

Model Architecture and Training Objective

The core of our model is a transformer encoder, architecturally similar to BERT. A sequence of preprocessed shot embeddings is passed through the following stages:

- Input Projection: The fused shot embeddings are first projected down to the model’s hidden dimension via a linear layer.

- Sequence Construction & Special Tokens: Before entering the Transformer, two special embeddings are prepended to the sequence:

• a learnable [CLS] embedding is added at the very beginning.

• the title-level embedding is projected to the model’s hidden dimension and inserted after the [CLS] token as the [GLOBAL] token, providing title-level context to every shot in the sequence and participating in the self-attention process.

- Contextualization: The sequence is enhanced with positional embeddings and fed through the Transformer stack to provide shot representations based on their surrounding context.

- Output Projection: The contextualized hidden states from the Transformer are passed through a final linear layer, projecting them from the hidden dimension back up to the 2304-dimensional fused embedding space for prediction.
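The stages above can be sketched in plain Python with toy dimensions. Everything here is illustrative: the sizes, the initialization, and the use of learned positional embeddings are assumptions, and the Transformer stack itself is omitted. The sketch only shows how the sequence is laid out as [CLS], [GLOBAL], then the projected shots, and how hidden states are projected back to the fused space.

```python
import random

# Toy sizes for illustration; the real fused size is 2304 and the hidden
# size is not stated in the post.
FUSED_DIM, TITLE_DIM, HIDDEN_DIM, MAX_SHOTS = 12, 8, 4, 16


def init_linear(in_dim, out_dim, rng):
    """Return (weight, bias) with weight stored as a list of output rows."""
    weight = [[rng.gauss(0.0, 0.02) for _ in range(in_dim)]
              for _ in range(out_dim)]
    return weight, [0.0] * out_dim


def linear(x, layer):
    """Apply y = W x + b."""
    weight, bias = layer
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weight, bias)]


def init_params(rng):
    return {
        "input_proj": init_linear(FUSED_DIM, HIDDEN_DIM, rng),
        "title_proj": init_linear(TITLE_DIM, HIDDEN_DIM, rng),
        "output_proj": init_linear(HIDDEN_DIM, FUSED_DIM, rng),
        "cls": [rng.gauss(0.0, 0.02) for _ in range(HIDDEN_DIM)],
        # One position per token: [CLS], [GLOBAL], then up to MAX_SHOTS shots.
        "pos": [[rng.gauss(0.0, 0.02) for _ in range(HIDDEN_DIM)]
                for _ in range(MAX_SHOTS + 2)],
    }


def build_encoder_input(shots, title_emb, params):
    """Return [CLS], [GLOBAL], shot_1..shot_n with positional embeddings added."""
    if len(shots) > MAX_SHOTS:
        raise ValueError("sequence exceeds MAX_SHOTS")
    global_tok = linear(title_emb, params["title_proj"])
    shot_toks = [linear(s, params["input_proj"]) for s in shots]
    seq = [params["cls"], global_tok] + shot_toks
    return [[h + p for h, p in zip(tok, pos)]
            for tok, pos in zip(seq, params["pos"])]


def project_outputs(hidden_states, params):
    """Map each hidden state back to the fused embedding space."""
    return [linear(h, params["output_proj"]) for h in hidden_states]
```

In this layout the shot at index `i` of the input sits at index `i + 2` of the encoder sequence, which matters when reading out predictions for specific shots.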

We train the model using a Masked Shot Modeling (MSM) objective. In this self-supervised task, we randomly mask 20% of the input shot embeddings in each sequence by replacing them with a learnable [MASK] embedding. The model’s objective is to predict the original, unmasked fused embedding for these masked positions. The model is optimized by minimizing the cosine distance between its predicted embedding and the ground-truth embedding for each masked shot.
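A minimal sketch of the MSM objective follows. It assumes that a fixed count of roughly 20% of positions is sampled per sequence and that the loss is the mean cosine distance over masked positions; the post specifies the masking rate and the distance, but not these details. The function names are hypothetical.

```python
import math
import random

MASK_RATIO = 0.2  # fraction of shots masked, as stated in the post


def choose_masked_positions(num_shots, rng, ratio=MASK_RATIO):
    """Pick a random subset of shot indices to mask (at least one)."""
    k = max(1, round(num_shots * ratio))
    return sorted(rng.sample(range(num_shots), k))


def apply_mask(shots, positions, mask_embedding):
    """Replace the chosen shots with the (learnable) [MASK] embedding."""
    masked = set(positions)
    return [mask_embedding if i in masked else s
            for i, s in enumerate(shots)]


def cosine_distance(a, b, eps=1e-12):
    """Return 1 - cosine similarity, guarding against zero-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / max(norm_a * norm_b, eps)


def msm_loss(predictions, targets, positions):
    """Mean cosine distance between predictions and targets at masked positions."""
    return sum(cosine_distance(predictions[i], targets[i])
               for i in positions) / len(positions)
```

Only the masked positions contribute to the loss, so the model is never rewarded for simply copying inputs it can already see.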

We optimized the hidden parameters with Muon and the remaining parameters with AdamW. It’s worth noting that the switch to Muon resulted in noticeable improvements.

Evaluation

To evaluate the learned embeddings, we learn task-specific linear layers on top of frozen representations (i.e., linear probes). Most of the tasks are clip-level, i.e., each example is a short clip, ranging from a few seconds to a minute, of the kind often presented to our members when recommending a title to them. When embedding these clips, we find that “embedding in context”, namely extracting the embeddings from within a larger sequence (e.g., the episode containing the clip), naturally does much better than embedding only the shots from a clip.
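The difference between the two embedding strategies can be made concrete with a small sketch. The `toy_encoder` below is a stand-in that averages each shot with its neighbours; it is not MediaFM. Mean pooling over the clip's shots is also an assumption, since the post does not say how shot embeddings are aggregated into a clip embedding.

```python
def mean_pool(vectors):
    """Average a list of equal-length vectors element-wise."""
    dim = len(vectors[0])
    return [sum(v[d] for v in vectors) / len(vectors) for d in range(dim)]


def toy_encoder(shots):
    """Stand-in contextual encoder: each output averages a shot with its neighbours."""
    return [mean_pool(shots[max(0, i - 1): i + 2]) for i in range(len(shots))]


def embed_clip_in_context(encoder, title_shots, start, end):
    """Encode the whole sequence, then keep only the clip's shots [start, end)."""
    contextual = encoder(title_shots)
    return mean_pool(contextual[start:end])


def embed_clip_in_isolation(encoder, title_shots, start, end):
    """Encode only the clip's own shots, so surrounding shots are invisible."""
    return mean_pool(encoder(title_shots[start:end]))
```

With any encoder that mixes information across positions, the two functions return different vectors for the same clip, because the in-context version lets shots outside the clip influence the clip's representations.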

Tasks

Our embeddings are foundational and we find that they bring value to applications across Netflix. Here are a few:

- Ad Relevancy: A multi-label classification task to categorize Netflix clips for relevant ad placement, measured by Average Precision. In this task, these representations operate at the retrieval stage, where they help in identifying the candidate set and in turn are fed into the ad serving system for relevance optimization.

- Clip Popularity Ranking: A ranking task to predict the performance (in click-through rate, CTR) of a media clip relative to other clips from that show or movie, measured by Kendall’s tau correlation coefficient under ten-fold cross-validation.

- Clip Tone: A multi-label classification of hook clips into 100 tone categories (e.g., creepy, scary, humorous) from our internal Metadata & Rankings team, measured by micro Average Precision (averaged across tone categories).

- Clip Genre: A multi-label classification of clips into eleven core genres (Action, Anime, Comedy, Documentary, Drama, Fantasy, Horror, Kids, Romance, Sci-fi, Thriller) derived from the genre of the parent title, measured by macro Average Precision (averaged across genres).

- Clip Retrieval: A binary classification of clips from movies or episodes into “clip-worthy” (i.e., a good clip to showcase the title) or not, as determined by human annotators, and as measured by Average Precision. The positive to negative clip ratio is 1:3, and for each title we select 6–10 positive clips and the corresponding number of negatives.
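For readers unfamiliar with the metrics named in the task list, here is a small reference sketch in plain Python. It is not the evaluation code used for the reported results. The Kendall's tau shown is the tau-a variant, which is an assumption since the post does not say which variant is used, and ties in scores are broken by input order in the Average Precision function.

```python
def average_precision(labels, scores):
    """AP for one binary label set: mean of precision at each positive's rank."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits, total = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label:
            hits += 1
            total += hits / rank
    return total / hits if hits else 0.0


def macro_average_precision(label_matrix, score_matrix):
    """Average the per-class AP; rows are examples, columns are classes."""
    num_classes = len(label_matrix[0])
    aps = [
        average_precision([row[c] for row in label_matrix],
                          [row[c] for row in score_matrix])
        for c in range(num_classes)
    ]
    return sum(aps) / num_classes


def kendall_tau(x, y):
    """Kendall's tau-a: (concordant - discordant) pairs over all pairs."""
    n = len(x)
    balance = 0
    for i in range(n):
        for j in range(i + 1, n):
            prod = (x[i] - x[j]) * (y[i] - y[j])
            balance += (prod > 0) - (prod < 0)  # tied pairs contribute 0
    return balance / (n * (n - 1) / 2)
```

For the clip popularity task, `x` would be the predicted scores and `y` the observed CTRs for clips from the same title, so a tau of 1.0 means the predicted ordering matches the observed ordering exactly.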

It’s worth noting that for the tasks above (as well as other tasks that use our model), the model outputs are utilized as information that the relevant teams use when driving to a decision rather than being used in a completely end-to-end fashion. Many of the improvements are also in various stages of deployment.

Results

Figure 2³ compares MediaFM to several strong baselines:

On all tasks, MediaFM is better than the baselines. Improvements appear to be larger for tasks that require more detailed narrative understanding, e.g., predicting the most relevant ads for an ad break given the surrounding context. We look further into this next.

Ablations

MediaFM’s primary improvements over previous Netflix work stem from two key areas: combining multiple modalities and learning to contextualize shot representations. To determine the contribution of each factor across different tasks, we compared MediaFM to a baseline. This baseline concatenates the three input embeddings, essentially providing the same full, shot-level input as MediaFM but without the contextualization step. This comparison allows us to isolate which tasks benefit most from the contextualization aspect.

Additional modalities help significantly for tone, but the main improvement comes from contextualization.

Oddly, multiple uncontextualized modalities hurt the clip popularity ranking model, but adding contextualization significantly improves performance.

For clip retrieval, we see a natural progression of around 15% for each improvement.

Next Steps

MediaFM presents a way to learn how to fuse and/or contextualize shot-level information by leveraging Netflix’s catalog in a self-supervised manner. With this perspective, we’re actively investigating how pretrained multimodal (audio, video/image, text) LLMs like Qwen3-Omni, where the modality fusion has already been learned, can provide an even stronger starting point for subsequent model generations.

Next in this series of blog posts, we will present our method to embed title-level metadata and adapt it to our needs. Stay tuned!

Footnotes

1. We chose embeddings over generative text outputs to prioritize modular design. This provides a tighter, cleaner abstraction layer: we generate the representation once, and it’s consumed across our entire suite of services. This avoids the architectural fragility of fine-tuning, allowing us to enhance our existing embedding-based workflows with new modalities more flexibly.

2. All of our data has audio and video; we zero-pad for missing timed text data, which is relatively likely to occur (e.g., in shots without dialogue).

3. The title-level tasks couldn’t be evaluated with the VertexAI MM and Marengo embedding models because the videos exceed the length limit set by the APIs.

Acknowledgements

We would like to thank Matt Thanabalan and Chaitanya Ekanadham for their contributions to this work.