By: Kris Vary, Ankush Gulati, Jim Isaacs, Jennifer Shin, Jeremy Kelly, Jason Tu

This is part 3 in a series called “Behind the Streams”. Check out part 1 and part 2 to learn more.

Picture this: it’s seconds before the biggest fight night in Netflix history. Sixty-five million fans are waiting, devices in hand, hearts pounding. The countdown hits zero. What does it take to get everyone to the action on time, every time? At Netflix, we’re used to on-demand viewing, where everyone chooses their own moment. But with live events, millions are eager to join at once. Our job: make sure our members never miss a beat.

When Live events break streaming records ¹ ² ³, our infrastructure faces the ultimate stress test. Here’s how we engineered a discovery experience for a global audience excited to see a knockout.

Why are Live Events Different?

Unlike Video on Demand (VOD), live events are something members want to catch as they happen. There’s something uniquely exciting about being part of the moment. That means we have only a brief window to recommend a Live event at just the right time. Too early, and the excitement fades; too late, and the moment is missed. Every second counts.

To capture that excitement, we enhanced our recommendation delivery systems to serve real-time suggestions, giving members richer and more compelling signals to hit play in the moment when it matters most. The challenge? Sending dynamic, timely updates concurrently to over 100 million devices worldwide without creating a thundering herd effect that could overwhelm our cloud services. Simply scaling up linearly is neither efficient nor reliable, and for popular events it could also divert resources from other critical services. We needed a smarter, more scalable solution than just adding more resources.

Orchestrating the Moment: Real-time Recommendations

With millions of devices online and live event schedules that can shift in real time, the challenge was to keep everyone perfectly in sync. We set out to solve this by building a system that doesn’t just react, but adapts by dynamically updating recommendations as the event unfolds. We identified the need to balance three constraints:

- Time: the duration required to coordinate an update.

- Request throughput: the capacity of our cloud services to handle requests.

- Compute cardinality: the variety of requests necessary to serve a unique update.

We solved this constraint optimization problem by splitting real-time recommendations into two phases: prefetching and real-time broadcasting. First, we prefetch the necessary data ahead of time, distributing the load over a longer period to avoid traffic spikes. When the Live event starts or ends, we broadcast a low-cardinality message to all connected devices, prompting them to use the prefetched data locally. The timing of the broadcast also adapts when event times shift, so that it stays accurate to the actual production of the Live event. By combining these two phases, we keep our members’ devices in sync and solve the thundering herd problem. To maximize device reach, especially for devices on unstable networks, we use “at least once” broadcasts: every device gets the latest updates and can catch up on any previously missed broadcasts as soon as it is back online.

The first phase optimizes request throughput and compute cardinality by prefetching materialized recommendations, displayed title metadata, and artwork for a Live event. As members naturally browse on their devices before the event, this data is prepopulated and stored locally in the device cache, where it waits for the notification trigger to serve the recommendations instantly. Because these requests are distributed naturally over time ahead of the event, we eliminate the related traffic spikes and avoid the need for large-scale, real-time system scaling.
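To make the first phase concrete, here is a minimal Python sketch of a device-side cache that holds prefetched Live-event data keyed by stage. The class names, the stage keys (`UPCOMING`, `LIVE`, `ENDED`), and the payload fields are hypothetical. The sketch only illustrates the idea that each prefetched response already contains what the device needs for every stage, so a later stage change becomes a local lookup.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class PrefetchedEvent:
    """Hypothetical prefetched response: one pre-materialized payload per stage key."""
    event_id: str
    payload_by_stage: Dict[str, dict]


@dataclass
class DeviceCache:
    """Hypothetical device-local cache, filled while the member browses."""
    events: Dict[str, PrefetchedEvent] = field(default_factory=dict)

    def store(self, prefetched: PrefetchedEvent) -> None:
        # Prefetches happen during ordinary browsing, so this request load is
        # spread over the hours before the event rather than landing at once.
        self.events[prefetched.event_id] = prefetched

    def payload_for(self, event_id: str, stage: str) -> Optional[dict]:
        # Purely local lookup: switching stages needs no server round trip.
        event = self.events.get(event_id)
        if event is None:
            return None
        return event.payload_by_stage.get(stage)


cache = DeviceCache()
cache.store(PrefetchedEvent(
    event_id="fight-night",
    payload_by_stage={
        "UPCOMING": {"row": "Coming Up", "cta": "Remind Me"},
        "LIVE": {"row": "Live Now", "cta": "Play"},
        "ENDED": {"row": "Replays", "cta": "Watch Replay"},
    },
))
print(cache.payload_for("fight-night", "LIVE"))  # {'row': 'Live Now', 'cta': 'Play'}
```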

The second phase optimizes request throughput and time to update devices by broadcasting a low-cardinality, real-time message to all connected devices at critical moments in a Live event’s lifecycle. Each broadcast payload includes a state key and a timestamp. The state key indicates the current stage of the Live event, allowing devices to use their prefetched data to update cached responses locally without additional server requests. The timestamp ensures that if a device misses a broadcast because of network issues, it can catch up by replaying the missed updates when it reconnects. This mechanism ensures devices receive each update at least once, significantly increasing delivery reliability even on unstable networks.
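The at-least-once contract means devices must tolerate duplicate and out-of-date broadcasts. The following Python sketch shows one way a client could use the broadcast timestamp to handle them. It ignores anything that is not newer than the last applied broadcast, and on reconnect it replays whatever it missed in timestamp order. `Broadcast`, `BroadcastHandler`, and the `fetch_since` callback are hypothetical. The sketch also assumes timestamps come from a single monotonic source.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, List


@dataclass(frozen=True)
class Broadcast:
    event_id: str
    state_key: str  # current stage of the Live event, e.g. "LIVE"
    timestamp: int  # assumed monotonic, e.g. epoch milliseconds


class BroadcastHandler:
    """Hypothetical client-side handler for at-least-once broadcasts."""

    def __init__(self) -> None:
        self.last_timestamp = 0
        self.current_stage: Dict[str, str] = {}  # event_id -> state_key
        self.applied: List[Broadcast] = []

    def on_broadcast(self, msg: Broadcast) -> bool:
        # Duplicates and stale messages are expected under at-least-once
        # delivery; comparing timestamps makes applying them idempotent.
        if msg.timestamp <= self.last_timestamp:
            return False
        self.current_stage[msg.event_id] = msg.state_key
        self.last_timestamp = msg.timestamp
        self.applied.append(msg)
        return True

    def on_reconnect(self, fetch_since: Callable[[int], Iterable[Broadcast]]) -> None:
        # Catch up on broadcasts missed while offline, oldest first.
        for msg in sorted(fetch_since(self.last_timestamp), key=lambda m: m.timestamp):
            self.on_broadcast(msg)


handler = BroadcastHandler()
handler.on_broadcast(Broadcast("fight-night", "UPCOMING", 1_000))
handler.on_broadcast(Broadcast("fight-night", "UPCOMING", 1_000))  # duplicate: ignored
# The device drops offline and misses the "LIVE" broadcast sent at t=2000.
server_log = [Broadcast("fight-night", "UPCOMING", 1_000), Broadcast("fight-night", "LIVE", 2_000)]
handler.on_reconnect(lambda since: [m for m in server_log if m.timestamp > since])
print(handler.current_stage["fight-night"])  # LIVE
```

Because the state key describes the current stage rather than a delta, replaying only the newest missed broadcast would also be enough. Replaying all of them in order simply keeps the client's history consistent.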

Moment in Numbers: during peak load, we have successfully delivered updates at multiple stages of our events to over 100 million devices in under a minute.
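For a sense of scale, here is a back-of-envelope reading of that figure. It is our own arithmetic and assumes one message per device per stage update. On that basis, the average fanout rate for a single update works out to:

$$
\text{average delivery rate} > \frac{10^{8}\ \text{devices}}{60\ \text{s}} \approx 1.67 \times 10^{6}\ \text{devices/s}
$$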

Under the Hood: How It Works

With the big picture in mind, let’s examine how these pieces interact in practice.

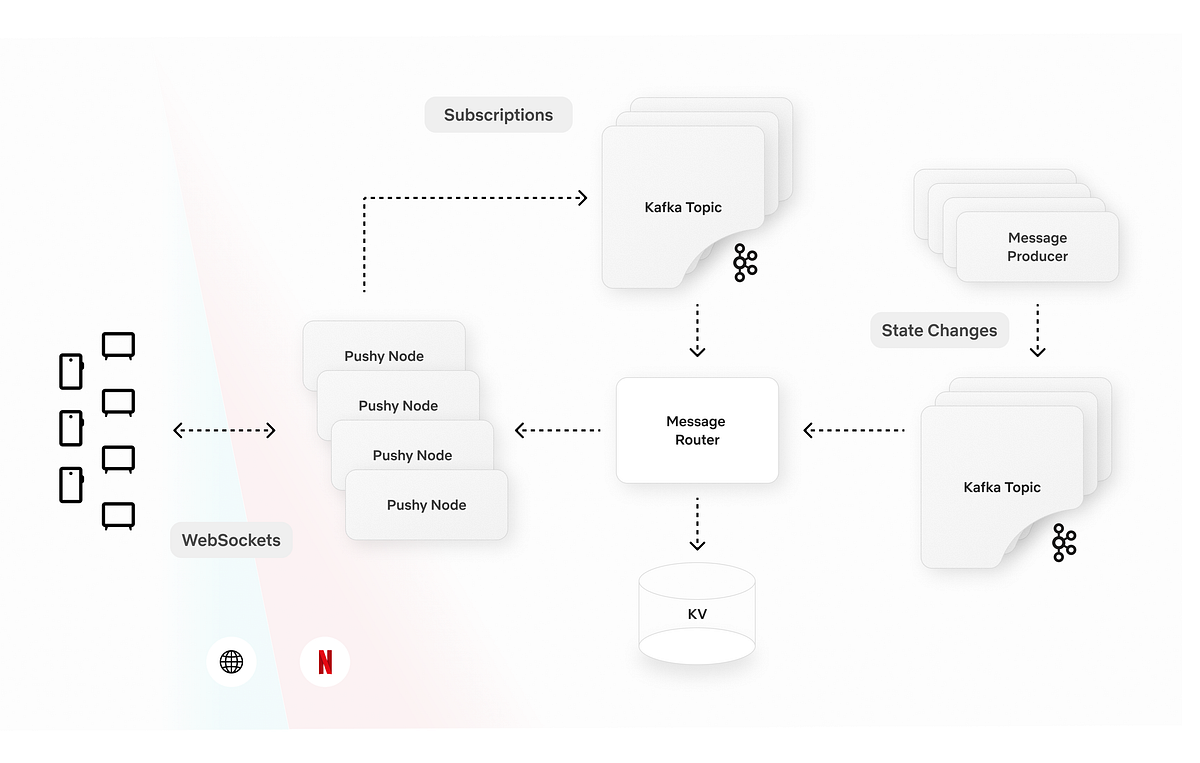

In the diagram above, the Message Producer microservice centralizes all of the business logic. It continuously monitors live events for setup and timing changes. When it detects an update, it schedules broadcasts to be sent at precisely the right moment. The Message Producer also standardizes communication by providing a concise GraphQL schema for both device queries and broadcast payloads.
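Below is a minimal Python sketch of the rescheduling behavior, built around a hypothetical `BroadcastScheduler` that keeps one pending broadcast per (event, stage) pair. When a timing change is detected, the producer simply schedules again. The earlier entry is superseded and skipped when it is popped, using lazy deletion on a heap. This illustrates the idea only and is not Netflix's implementation.

```python
import heapq
import itertools
from typing import Dict, List, Tuple


class BroadcastScheduler:
    """Hypothetical scheduler: the latest schedule() per (event, stage) wins."""

    def __init__(self) -> None:
        self._heap: List[Tuple[float, int, str, str]] = []
        self._latest: Dict[Tuple[str, str], int] = {}
        self._seq = itertools.count()

    def schedule(self, event_id: str, stage: str, fire_at: float) -> None:
        # Calling this again for the same (event, stage) supersedes the old entry.
        seq = next(self._seq)
        self._latest[(event_id, stage)] = seq
        heapq.heappush(self._heap, (fire_at, seq, event_id, stage))

    def due(self, now: float) -> List[Tuple[str, str]]:
        # Pop every entry whose time has come, skipping superseded ones.
        ready: List[Tuple[str, str]] = []
        while self._heap and self._heap[0][0] <= now:
            _, seq, event_id, stage = heapq.heappop(self._heap)
            if self._latest.get((event_id, stage)) != seq:
                continue
            del self._latest[(event_id, stage)]
            ready.append((event_id, stage))
        return ready


sched = BroadcastScheduler()
sched.schedule("fight-night", "LIVE", fire_at=100.0)
# The undercard runs long, so the main event's start moves back.
sched.schedule("fight-night", "LIVE", fire_at=160.0)
print(sched.due(now=120.0))  # []
print(sched.due(now=160.0))  # [('fight-night', 'LIVE')]
```

The unique sequence number in each heap tuple also guarantees that ties on `fire_at` never fall through to comparing strings.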

Rather than sending broadcasts directly to devices over WebSocket, the Message Producer hands them off to the Message Router. The Message Router is part of a robust two-tier pub/sub architecture built on proven technologies such as Pushy (our WebSocket proxy), Apache Kafka, and Netflix’s KV key-value store. The Message Router tracks subscriptions at Pushy-node granularity, while each Pushy node maps those subscriptions to individual connections. The result is a low-latency fanout that minimizes compute and bandwidth requirements.
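The saving in the two-tier design comes from the router knowing only which nodes care about a topic, not which connections. Below is a toy, in-memory Python model of that idea. `MessageRouter` and `PushyNode` here are hypothetical stand-ins, not Pushy's actual API, and the Kafka transport and KV-backed subscription storage are omitted. Publishing one broadcast costs one router-to-node message per interested node, and each node then fans out locally to its own connections.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple


class PushyNode:
    """Toy stand-in for a WebSocket proxy node (not Pushy's real API)."""

    def __init__(self, node_id: str) -> None:
        self.node_id = node_id
        self.connections_by_topic: Dict[str, Set[str]] = defaultdict(set)
        self.delivered: List[Tuple[str, dict]] = []

    def subscribe(self, topic: str, connection_id: str) -> None:
        self.connections_by_topic[topic].add(connection_id)

    def deliver(self, topic: str, payload: dict) -> int:
        # Second tier: the node fans out to its own local connections.
        conns = self.connections_by_topic.get(topic, set())
        for conn in conns:
            self.delivered.append((conn, payload))
        return len(conns)


class MessageRouter:
    """First tier: tracks subscriptions per node, not per connection."""

    def __init__(self) -> None:
        self.nodes: Dict[str, PushyNode] = {}
        self.nodes_by_topic: Dict[str, Set[str]] = defaultdict(set)

    def register(self, node: PushyNode) -> None:
        self.nodes[node.node_id] = node

    def subscribe(self, topic: str, node_id: str, connection_id: str) -> None:
        # The router records only node interest; the node records the connection.
        self.nodes_by_topic[topic].add(node_id)
        self.nodes[node_id].subscribe(topic, connection_id)

    def publish(self, topic: str, payload: dict) -> Tuple[int, int]:
        # Returns (router-to-node messages, connections reached).
        node_messages = 0
        connections_reached = 0
        for node_id in self.nodes_by_topic.get(topic, set()):
            node_messages += 1
            connections_reached += self.nodes[node_id].deliver(topic, payload)
        return node_messages, connections_reached


router = MessageRouter()
for name in ("node-a", "node-b"):
    router.register(PushyNode(name))
for i in range(1000):
    router.subscribe("live:fight-night", "node-a" if i % 2 == 0 else "node-b", f"conn-{i}")
print(router.publish("live:fight-night", {"state": "LIVE"}))  # (2, 1000)
```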

Devices interface with our GraphQL Domain Graph Service (DGS). Its schemas offer multiple query interfaces for prefetching, allowing devices to tailor their requests to the specific experience being presented. Every response adheres to a consistent API that resolves to a map of stage keys, enabling fast lookups and keeping business logic off the device. Our broadcast schema specifies WebSocket connection parameters, the current event stage, and the timestamp of the last broadcast message. When a device receives a broadcast, it injects the payload directly into its cache, triggering an immediate update and re-render of the interface.

Balancing the Moment: Throughput Management

In addition to building new technology to support real-time recommendations, we also evaluated our existing systems for potential traffic hotspots. Using high-watermark traffic projections for live events, we generated synthetic traffic to simulate game-day scenarios and observed how our online services handled these bursts. Several common patterns emerged from this process:

Breaking the Cache Synchrony

Our game-day simulations revealed that while our approach mitigated the immediate thundering herd risks driven by member traffic during the events, live events introduced unexpected mini thundering herds in our systems hours before and after the actual events. The surge of members joining just in time for these events led to concentrated cache expirations and recomputations, which created traffic spikes well outside the event window that we hadn’t anticipated. This was not a problem for VOD content, because VOD member traffic patterns are much smoother. We found that fixed TTLs caused cache expirations, and the resulting refresh traffic, to happen all at once. To address this, we added jitter to server and client cache expirations to spread out refreshes and smooth out traffic spikes.
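Below is a minimal Python sketch of TTL jitter, with a small simulation of the synchronized-expiry problem. The ±20% jitter fraction, the one-hour base TTL, and the helper names are illustrative assumptions, not Netflix's production values.

```python
import random
from collections import Counter
from typing import Iterable, Optional


def jittered_ttl(base_ttl_s: float, jitter_fraction: float = 0.2,
                 rng: Optional[random.Random] = None) -> float:
    """Spread a TTL uniformly over [base * (1 - f), base * (1 + f)]."""
    if not 0 <= jitter_fraction < 1:
        raise ValueError("jitter_fraction must be in [0, 1)")
    uniform = rng.uniform if rng is not None else random.uniform
    return base_ttl_s * (1 + uniform(-jitter_fraction, jitter_fraction))


def peak_expirations_per_minute(expiry_times_s: Iterable[float]) -> int:
    # Largest number of cache entries expiring in any one-minute bucket.
    return max(Counter(int(t // 60) for t in expiry_times_s).values())


rng = random.Random(42)
# 10,000 members fill their caches within the same minute before an event.
fill_times = [rng.uniform(0, 60) for _ in range(10_000)]
fixed = [t + 3600 for t in fill_times]
jittered = [t + jittered_ttl(3600, 0.2, rng) for t in fill_times]
print(peak_expirations_per_minute(fixed))     # every entry expires in the same minute
print(peak_expirations_per_minute(jittered))  # a few hundred per minute at most
```

With ±20% on a one-hour TTL, the refreshes that would have hit in a single minute are spread over roughly 24 minutes. A wider window flattens the load further, at the cost of less predictable freshness.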

Adaptive Traffic Prioritization

While our services already use traffic prioritization and partitioning based on factors such as request type and device type, live events introduced a distinct challenge. They generated brief, intensely spiky traffic bursts that placed significant strain on our systems. Through simulations, we recognized the need for an additional, event-driven layer of traffic management.

To tackle this, we improved our traffic sharding strategies by using event-based signals. This let us route live event traffic to dedicated clusters with more aggressive scaling policies. We also added a dynamic traffic prioritization ruleset that activates whenever we see high requests per second (RPS), so our systems can absorb the surge smoothly. During these peaks, we aggressively deprioritize non-critical server-driven updates so that our systems can devote resources to the most time-sensitive computations. This approach keeps performance smooth and reliable when demand is at its highest.
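Below is a minimal Python sketch of the two mechanisms. It rests on a few assumptions: each request carries an optional live-event identifier as its event-based signal, there are only two priority tiers, and the surge ruleset is a single RPS threshold. `TrafficPolicy`, the cluster names, and the threshold value are hypothetical. "Deprioritize" is modeled here as rejecting non-critical requests outright, whereas a real system might queue or delay them instead.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class Priority(IntEnum):
    CRITICAL = 0    # e.g. live-event recommendations, playback start
    DEGRADABLE = 1  # e.g. non-critical server-driven UI updates


@dataclass(frozen=True)
class Request:
    path: str
    live_event_id: Optional[str]  # event-based signal attached to the request
    priority: Priority


@dataclass(frozen=True)
class TrafficPolicy:
    high_rps_threshold: float  # hypothetical trigger for the surge ruleset

    def cluster_for(self, req: Request) -> str:
        # Event-based sharding: Live-event traffic goes to a dedicated cluster
        # that can carry a more aggressive autoscaling policy.
        return "live-events" if req.live_event_id else "default"

    def admit(self, req: Request, current_rps: float) -> bool:
        # Below the threshold everything is served; above it, only critical
        # requests are, and degradable ones are shed (or could be deferred).
        if current_rps < self.high_rps_threshold:
            return True
        return req.priority is Priority.CRITICAL


policy = TrafficPolicy(high_rps_threshold=50_000)
live = Request("/recommendations", "fight-night", Priority.CRITICAL)
refresh = Request("/ui/refresh", None, Priority.DEGRADABLE)
print(policy.cluster_for(live), policy.cluster_for(refresh))  # live-events default
print(policy.admit(refresh, current_rps=10_000))  # True
print(policy.admit(refresh, current_rps=80_000))  # False
print(policy.admit(live, current_rps=80_000))     # True
```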

Looking Forward

When we set out to build a seamlessly scalable scheduled viewing experience, our goal was to create a dynamic, richer member experience for live content. Popular live events like the Crawford v. Canelo fight and the NFL Christmas games truly put our systems to the test. Along the way, we also uncovered valuable lessons that continue to shape our work. Our attempts to deprioritize traffic to other non-critical services caused unexpected call patterns and traffic spikes elsewhere. Similarly, in hindsight, we realized that the high traffic volume from popular events caused excessive non-essential logging, which put unnecessary pressure on our ingestion pipelines.

None of this work would have been possible without our amazing colleagues at Netflix who collaborated across multiple functions to architect, build, and test these approaches, ensuring members can easily access events at the right moment: UI Engineering, Cloud Gateway, Data Science & Engineering, Search and Discovery, Proof Engineering, Member Experience Foundations, Content Promotion and Distribution, Operations and Reliability, System Playback, Experience and Design, and Product Management.

As Netflix’s content offering expands to include new formats like live titles, free-to-air linear content, and games, we’re excited to build on what we’ve achieved and look forward to even more possibilities. Our roadmap includes extending the capabilities we developed for scheduled live viewing to these emerging formats. We’re also focused on improving our engineering tooling for greater visibility into operations, message delivery, and error handling, to help us keep delivering the best possible experience for our members.

Join Us for What’s Next

We’re just scratching the surface of what’s possible as we bring new live experiences to members around the world. If you’re looking to solve interesting technical challenges in a unique culture, apply for a role that captures your curiosity.