Baolin Li, Lingyi Liu, Binh Tang, Shaojing Li

Introduction

Pre-training gives Large Language Models (LLMs) broad linguistic ability and general world knowledge, but post-training is the phase that actually aligns them to concrete intents, domain constraints, and the reliability requirements of production environments. At Netflix, we’re exploring how LLMs can enable new member experiences across recommendation, personalization, and search, which requires adapting generic foundation models so they better reflect our catalog and the nuances of member interaction histories. At Netflix scale, post-training quickly becomes an engineering problem as much as a modeling one: building and operating complex data pipelines, coordinating distributed state across multi-node GPU clusters, and orchestrating workflows that interleave training and inference. This blog describes the architecture and engineering philosophy of our internal Post-Training Framework, built by the AI Platform team to hide infrastructure complexity so researchers and model developers can focus on model innovation rather than distributed systems plumbing.

A Model Developer’s Post-Training Journey

Post-training often starts deceptively simply: curate proprietary domain data, load an open-weight model from Hugging Face, and iterate batches through it. At experimentation scale, that’s a few lines of code. But when fine-tuning production-grade LLMs at scale, the gap between “running a script” and “robust post-training” becomes an abyss of engineering edge cases.

Getting the data right

On paper, post-training is straightforward: choose a tokenizer, preprocess the dataset, and build a dataloader. In practice, data preparation is where things break. High-quality post-training (instruction following, multi-turn dialogue, Chain-of-Thought) depends on precisely controlling which tokens contribute to the loss. Hugging Face chat templates serialize conversations, but they don’t specify what to train on versus what to ignore. The pipeline must apply explicit loss masking so that only assistant tokens are optimized; otherwise the model learns from prompts and other non-target text, which degrades quality.
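A minimal sketch of assistant-only loss masking follows. It assumes each conversation turn has already been tokenized separately; the `turns` structure and both helper names are illustrative and are not the framework’s API. Non-assistant positions get the label `-100`, the default `ignore_index` of PyTorch’s `torch.nn.functional.cross_entropy`, so they never contribute to the loss:

```python
from typing import List, Tuple

# -100 is the default ignore_index of torch.nn.functional.cross_entropy.
IGNORE_INDEX = -100


def build_inputs_and_labels(
    turns: List[Tuple[str, List[int]]],
) -> Tuple[List[int], List[int]]:
    """Concatenate pre-tokenized turns; keep labels only for assistant tokens."""
    input_ids: List[int] = []
    labels: List[int] = []
    for role, token_ids in turns:
        input_ids.extend(token_ids)
        if role == "assistant":
            labels.extend(token_ids)  # supervised: contributes to the loss
        else:
            labels.extend([IGNORE_INDEX] * len(token_ids))  # prompt/system text is ignored
    return input_ids, labels


def masked_mean_loss(token_losses: List[float], labels: List[int]) -> float:
    """Average per-token losses over supervised positions only."""
    kept = [loss for loss, label in zip(token_losses, labels) if label != IGNORE_INDEX]
    return sum(kept) / len(kept) if kept else 0.0


turns = [("system", [1, 2]), ("user", [3, 4, 5]), ("assistant", [6, 7])]
ids, labels = build_inputs_and_labels(turns)
print(ids)     # [1, 2, 3, 4, 5, 6, 7]
print(labels)  # [-100, -100, -100, -100, -100, 6, 7]
```

In a causal LM, the labels are also shifted by one position so that position t predicts token t+1. Hugging Face causal-LM models perform that shift internally when given `labels`.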

Variable sequence length is another pitfall. Padding within a batch can waste compute, and uneven shapes across FSDP workers can cause GPU synchronization overhead. A more GPU-efficient approach is to pack multiple samples into fixed-length sequences and use a “document mask” to prevent attention across sample boundaries. This reduces padding and keeps shapes consistent.
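The sketch below illustrates packing plus a document mask under a few simple assumptions. Samples are already tokenized. Packing is greedy and follows arrival order, so it is not an optimal bin-packing solver. `PAD_ID` is a placeholder. Each position records which sample it came from, and `may_attend` expresses the causal document mask as a predicate over query and key positions:

```python
from typing import List, Tuple

PAD_ID = 0    # placeholder padding token id (assumption)
PAD_DOC = -1  # document id assigned to padding positions


def _pad_row(ids: List[int], docs: List[int], seq_len: int) -> Tuple[List[int], List[int]]:
    pad = seq_len - len(ids)
    return ids + [PAD_ID] * pad, docs + [PAD_DOC] * pad


def pack_sequences(samples: List[List[int]], seq_len: int) -> List[Tuple[List[int], List[int]]]:
    """Greedily pack samples, in order, into rows of exactly seq_len tokens.

    Returns (token_ids, doc_ids) pairs; doc_ids[i] is the index, within its row,
    of the sample that position i came from (PAD_DOC for padding).
    """
    packs: List[Tuple[List[int], List[int]]] = []
    ids: List[int] = []
    docs: List[int] = []
    for sample in samples:
        sample = sample[:seq_len]  # truncate samples longer than one row
        if ids and len(ids) + len(sample) > seq_len:
            packs.append(_pad_row(ids, docs, seq_len))  # row is full: pad and emit it
            ids, docs = [], []
        doc_id = docs[-1] + 1 if docs else 0
        ids.extend(sample)
        docs.extend([doc_id] * len(sample))
    if ids:
        packs.append(_pad_row(ids, docs, seq_len))
    return packs


def may_attend(doc_ids: List[int], q: int, k: int) -> bool:
    """Causal document mask: query q sees key k only within the same (non-padding) sample."""
    return k <= q and doc_ids[q] == doc_ids[k] and doc_ids[q] != PAD_DOC


packs = pack_sequences([[1, 2, 3], [4, 5], [6, 7, 8, 9]], seq_len=6)
print(packs[0])  # ([1, 2, 3, 4, 5, 0], [0, 0, 0, 1, 1, -1])
print(packs[1])  # ([6, 7, 8, 9, 0, 0], [0, 0, 0, 0, -1, -1])
```

In PyTorch, a predicate of this shape is what FlexAttention’s `mask_mod` callback expresses. Combined with a block mask, it lets the kernel skip fully masked blocks instead of materializing a dense mask.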

Setting up the model

Loading an open-source checkpoint sounds simple until the model no longer fits on one GPU. At that point you need a sharding strategy (e.g., FSDP, TP). You also must load partial weights directly onto the device mesh so the full model is never materialized on a single device.
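The sketch below illustrates the idea under three assumptions. Each rank owns a contiguous block of rows of a weight tensor. Rows are split the way `torch.chunk` splits dimension 0. `read_rows` is a hypothetical lazy reader over the checkpoint. Under these assumptions, each rank requests only its own slice. In practice the reader might be backed by safetensors’ `safe_open(...).get_slice(name)`. The model skeleton would typically be built on PyTorch’s `meta` device so that no full-size tensor is ever allocated:

```python
from typing import Callable, List, Tuple


def shard_bounds(dim_size: int, world_size: int, rank: int) -> Tuple[int, int]:
    """Row range [start, end) owned by `rank`, using torch.chunk-style splitting."""
    chunk = -(-dim_size // world_size)  # ceiling division
    start = min(rank * chunk, dim_size)
    end = min(start + chunk, dim_size)
    return start, end


def load_local_shard(
    read_rows: Callable[[int, int], List[List[float]]],
    dim_size: int,
    world_size: int,
    rank: int,
) -> List[List[float]]:
    """Read only this rank's rows; the full tensor is never requested."""
    start, end = shard_bounds(dim_size, world_size, rank)
    return read_rows(start, end)


# Hypothetical lazy reader that records which rows were requested.
requests: List[Tuple[int, int]] = []


def fake_reader(start: int, end: int) -> List[List[float]]:
    requests.append((start, end))
    return [[float(r)] for r in range(start, end)]


shard = load_local_shard(fake_reader, dim_size=10, world_size=4, rank=2)
print(shard)     # [[6.0], [7.0], [8.0]]
print(requests)  # [(6, 9)]
```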

After loading, you still need to make the model trainable. That means choosing full fine-tuning or LoRA, and applying optimizations like activation checkpointing, compilation, and correct precision settings. Precision is often subtle for RL, where rollout and policy precision must align. Large vocabularies (>128k) add an extra memory trap: logits have shape [batch, seq_len, vocab] and can spike peak memory. Common mitigations include dropping ignored tokens before the output projection and computing logits/loss in chunks along the sequence dimension.
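Here is a forward-only, pure-Python sketch of both mitigations. It assumes a toy output projection where each row of `weight` is one vocabulary entry. Ignored positions are dropped before projecting, and logits exist for at most `chunk_size` positions at a time. Peak logit memory therefore scales with `chunk_size x vocab` rather than `seq_len x vocab`. A real PyTorch version must also avoid keeping every chunk’s logits alive for the backward pass, for example by recomputing them per chunk. This sketch does not model that:

```python
import math
from typing import List, Sequence, Tuple

IGNORE_INDEX = -100  # positions excluded from the loss


def project(hidden: Sequence[float], weight: Sequence[Sequence[float]]) -> List[float]:
    """Toy output projection: one logit per vocabulary row of `weight`."""
    return [sum(h * w for h, w in zip(hidden, row)) for row in weight]


def token_cross_entropy(logits: List[float], target: int) -> float:
    """-log softmax(logits)[target], computed with a stable log-sum-exp."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_sum_exp - logits[target]


def chunked_cross_entropy(
    hidden_states: List[List[float]],
    labels: List[int],
    weight: List[List[float]],
    chunk_size: int,
) -> float:
    """Mean cross-entropy over supervised positions, projecting chunk_size positions at a time."""
    # 1) Drop ignored positions *before* the expensive projection to the vocabulary.
    kept: List[Tuple[List[float], int]] = [
        (h, y) for h, y in zip(hidden_states, labels) if y != IGNORE_INDEX
    ]
    if not kept:
        return 0.0
    total = 0.0
    for start in range(0, len(kept), chunk_size):
        chunk = kept[start:start + chunk_size]
        # 2) At most chunk_size x vocab logits exist at any moment.
        chunk_logits = [project(h, weight) for h, _ in chunk]
        total += sum(token_cross_entropy(lg, y) for lg, (_, y) in zip(chunk_logits, chunk))
    return total / len(kept)
```

The loss is a sum over positions, so any `chunk_size` yields the same mean loss as projecting everything at once, up to floating-point summation order.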

Starting the training

Even with data and models ready, production training is not a simple “for loop”. The system must support everything from SFT’s forward/backward pass to on-policy RL workflows that interleave rollout generation, reward/reference inference, and policy updates.

At Netflix scale, training runs as a distributed job. We use Ray to orchestrate workflows via actors, decoupling modeling logic from hardware. Robust runs also require experiment tracking, covering both model quality metrics like loss and efficiency metrics like MFU. They also need fault tolerance through standardized checkpoints, so a run can resume cleanly after failures.
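A minimal sketch of checkpoint-and-resume follows. It uses a JSON file as a stand-in for real checkpoint storage (model and optimizer tensors would use a tensor format instead) and a toy “training step”. Two details matter. First, the write is atomic (temp file plus `os.replace`), so a crash mid-save cannot leave a torn checkpoint. Second, the dataloader cursor is saved alongside the step counter, so a resumed run sees exactly the batches it would have seen. All names are illustrative:

```python
import json
import os
import tempfile
from typing import Any, Dict, List


def save_checkpoint(path: str, state: Dict[str, Any]) -> None:
    """Write to a temp file, then atomically replace the old checkpoint."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w") as f:
        json.dump(state, f)
        f.flush()
        os.fsync(f.fileno())
    os.replace(tmp_path, path)


def load_checkpoint(path: str) -> Dict[str, Any]:
    if not os.path.exists(path):
        return {"step": 0, "data_cursor": 0, "loss_sum": 0.0}
    with open(path) as f:
        return json.load(f)


def train(path: str, data: List[float], total_steps: int, fail_at_step: int = -1) -> Dict[str, Any]:
    state = load_checkpoint(path)  # resume from the last completed step, if any
    while state["step"] < total_steps:
        if state["step"] == fail_at_step:
            raise RuntimeError("simulated node failure")
        batch = data[state["data_cursor"] % len(data)]
        state["loss_sum"] += batch  # stand-in for forward/backward/optimizer step
        state["step"] += 1
        state["data_cursor"] += 1  # dataloader position is checkpointed too
        save_checkpoint(path, state)
    return state


with tempfile.TemporaryDirectory() as work_dir:
    ckpt = os.path.join(work_dir, "state.json")
    try:
        train(ckpt, [1.0, 2.0, 3.0], total_steps=5, fail_at_step=3)
    except RuntimeError:
        pass  # the "node" died after completing 3 steps
    resumed = train(ckpt, [1.0, 2.0, 3.0], total_steps=5)
print(resumed)  # {'step': 5, 'data_cursor': 5, 'loss_sum': 9.0}
```

The resumed run ends in the same state that an uninterrupted five-step run would reach.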

These challenges motivate a post-training framework that lets developers focus on modeling rather than on distributed systems and operational details.

The Netflix Post-Training Framework

We built Netflix’s LLM post-training framework so Netflix model developers can turn ideas like those in Figure 1 into scalable, robust training jobs. It addresses the engineering hurdles described above, as well as constraints specific to the Netflix ecosystem. Existing tools (e.g., Thinking Machines’ Tinker) work well for standard chat and instruction tuning, but their structure can limit deeper experimentation. Our internal use cases often go further. They may require architectural variation, for example customizing output projection heads for task-specific objectives. They may need expanded or nonstandard vocabularies driven by semantic IDs or special tokens. Some even involve transformer models pre-trained from scratch on domain-specific, non-natural-language sequences. Supporting this range requires a framework that prioritizes flexibility and extensibility over a fixed fine-tuning paradigm.

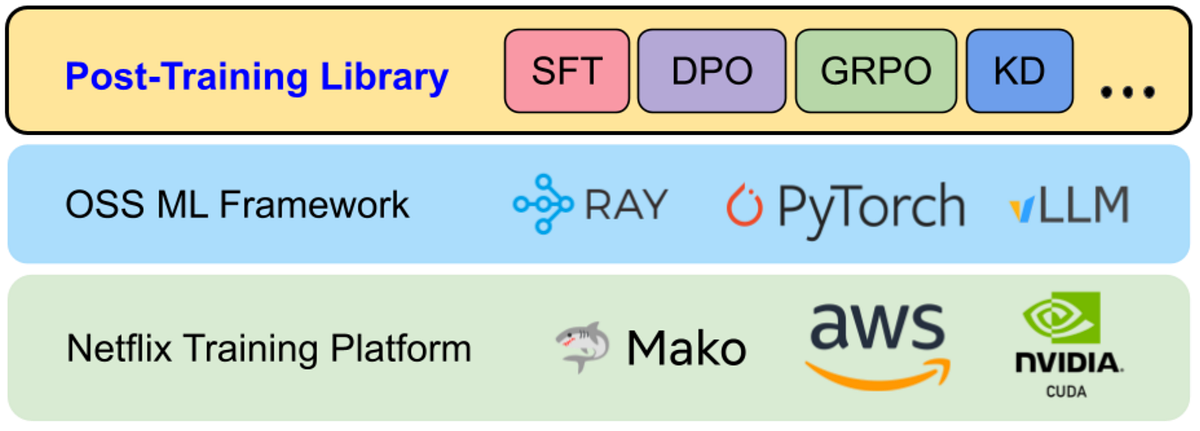

Figure 2 shows the end-to-end stack from infrastructure to trained models. At the base is Mako, Netflix’s internal ML compute platform, which provisions GPUs on AWS. On top of Mako, we run robust open-source components (PyTorch, Ray, and vLLM) largely out of the box. Our post-training framework sits above these foundations as a library. It provides reusable utilities and standardized training recipes for common workflows such as Supervised Fine-Tuning (SFT), Direct Preference Optimization (DPO), Reinforcement Learning (RL), and Knowledge Distillation. Users typically express jobs as configuration files that select a recipe and plug in task-specific components.
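The post does not specify the configuration format. The sketch below therefore assumes JSON and a hypothetical in-process registry: recipes register under a name, and a config selects one and supplies its parameters. Recipe names, fields, and return values are illustrative only:

```python
import json
from typing import Any, Callable, Dict

Config = Dict[str, Any]
Recipe = Callable[[Config], Config]
RECIPES: Dict[str, Recipe] = {}


def register_recipe(name: str) -> Callable[[Recipe], Recipe]:
    """Decorator that makes a recipe selectable by name from a config file."""
    def decorator(fn: Recipe) -> Recipe:
        if name in RECIPES:
            raise ValueError(f"duplicate recipe name: {name}")
        RECIPES[name] = fn
        return fn
    return decorator


@register_recipe("sft")
def sft_recipe(cfg: Config) -> Config:
    # A real recipe would build the dataset, model, and trainer here.
    return {"algorithm": "sft", "model": cfg["model"], "dataset": cfg["dataset"]}


@register_recipe("dpo")
def dpo_recipe(cfg: Config) -> Config:
    return {"algorithm": "dpo", "model": cfg["model"], "beta": cfg.get("beta", 0.1)}


def run_job(config_text: str) -> Config:
    """Single entry point: parse the config, look up the recipe, run it."""
    cfg = json.loads(config_text)
    recipe = RECIPES.get(cfg.get("recipe", ""))
    if recipe is None:
        raise ValueError(f"unknown recipe: {cfg.get('recipe')!r}")
    return recipe(cfg)


job = run_job('{"recipe": "sft", "model": "my-base-model", "dataset": "my-chat-data"}')
print(job)  # {'algorithm': 'sft', 'model': 'my-base-model', 'dataset': 'my-chat-data'}
```

A registry like this keeps job submission uniform. Adding a workflow means registering one more recipe, without changing the entry point.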

Figure 3 summarizes the modular components we built to reduce complexity across four dimensions. As with most ML systems, training success hinges on three pillars: Data, Model, and Compute. The rise of RL fine-tuning adds a fourth, Workflow, which supports multi-stage execution patterns that don’t fit a simple training loop. Below, we detail the specific abstractions and features the framework provides for each dimension:

- Data: Dataset abstractions for SFT, reward modeling, and RL; high-throughput streaming from cloud and disk for datasets that exceed local storage; and asynchronous, on-the-fly sequence packing that overlaps CPU-heavy packing with GPU execution to reduce idle time.

- Model: Support for modern architectures (e.g., Qwen3, Gemma3) and Mixture-of-Experts variants (e.g., Qwen3 MoE, GPT-OSS); LoRA integrated into model definitions; and high-level sharding APIs so developers can distribute large models across device meshes without writing low-level distributed code.

- Compute: A unified job submission interface that scales from a single node to hundreds of GPUs; MFU (Model FLOPS Utilization) tracking that stays accurate under custom architectures and LoRA; and comprehensive checkpointing (trained parameters, optimizer, dataloader, data mixer, etc.) to enable exact resumption after interruptions.

- Workflow: Support for training paradigms beyond SFT, including complex online RL. Specifically, we extend Single Program, Multiple Data (SPMD) style SFT workloads to run online RL with a hybrid single-controller + SPMD execution model, which we describe next.
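To make the MFU bullet concrete: MFU is the model FLOPs actually achieved per second divided by the hardware’s peak FLOPs per second. A widely used approximation for dense transformer training is about 6N FLOPs per token for N parameters. That breaks down as 2N for the forward pass, 2N for activation gradients, and 2N for weight gradients, ignoring attention-score FLOPs. The sketch assumes that approximation and shows one reason LoRA needs care. Frozen base weights skip their weight-gradient work, so applying 6N unchanged would overstate MFU. This is an illustration, not the framework’s actual accounting:

```python
def train_flops_per_token(n_params: float, trainable_fraction: float = 1.0) -> float:
    """Approximate dense-transformer training FLOPs per token (attention scores ignored)."""
    if not 0.0 <= trainable_fraction <= 1.0:
        raise ValueError("trainable_fraction must be in [0, 1]")
    forward = 2 * n_params
    backward_activations = 2 * n_params  # always needed to propagate gradients
    backward_weights = 2 * n_params * trainable_fraction  # skipped for frozen weights
    return forward + backward_activations + backward_weights


def mfu(
    n_params: float,
    tokens_per_second: float,
    num_gpus: int,
    peak_flops_per_gpu: float,
    trainable_fraction: float = 1.0,
) -> float:
    """Achieved model FLOPs/s divided by aggregate peak FLOPs/s."""
    achieved = train_flops_per_token(n_params, trainable_fraction) * tokens_per_second
    return achieved / (num_gpus * peak_flops_per_gpu)


# Illustrative numbers only; peak_flops_per_gpu would come from the hardware datasheet.
full_ft = mfu(1e9, 1e5, num_gpus=8, peak_flops_per_gpu=1e15)
frozen_base = mfu(1e9, 1e5, num_gpus=8, peak_flops_per_gpu=1e15, trainable_fraction=0.0)
```

With these numbers, full fine-tuning corresponds to about 7.5% MFU. The same throughput with frozen base weights (LoRA adapter FLOPs neglected) corresponds to about 5%.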

Today, this framework supports research use cases ranging from post-training large-scale foundation models to fine-tuning specialized expert models. By standardizing these workflows, we’ve lowered the barrier for teams to experiment with advanced techniques and iterate more quickly.

Learnings from Building the Post-Training Framework

Building a system of this scope wasn’t a linear implementation exercise. It meant tracking a fast-moving open-source ecosystem, chasing down failure modes that only appear under distributed load, and repeatedly revisiting architectural decisions as the post-training frontier shifted. Below are three engineering learnings and best practices that shaped the framework.

Scaling from SFT to RL

We initially designed the library around Supervised Fine-Tuning (SFT): a relatively static data flow, a single training loop, and a Single Program, Multiple Data (SPMD) execution model. That assumption stopped holding in 2025. With DeepSeek-R1 and the broader adoption of efficient on-policy RL methods like GRPO, SFT became table stakes rather than the finish line. Staying close to the frontier required infrastructure that could move from an “offline training loop” to “multi-stage, on-policy orchestration.”
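The core of GRPO fits in a few lines. For each prompt, sample a group of G completions and score each one. Then use the group-normalized reward A_i = (r_i - mean(r)) / std(r) as the advantage, which removes the need for a learned value model. The sketch assumes population standard deviation and a small `eps`. Implementations differ on these details, and some drop the std normalization entirely:

```python
import statistics
from typing import List


def group_relative_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """GRPO-style advantages for the G completions sampled from one prompt.

    Each completion is scored relative to its own group, so no critic is needed.
    """
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)  # population std; some implementations use sample std
    return [(r - mean) / (std + eps) for r in rewards]


# Four completions for one prompt: two succeeded (reward 1), two failed (reward 0).
advantages = group_relative_advantages([1.0, 0.0, 0.0, 1.0])
```

When every completion in a group receives the same reward, all advantages are zero and the group contributes no learning signal.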

SFT’s learning signal is dense and immediate: for each token position we compute logits over the full vocabulary and backpropagate a differentiable loss. Infrastructure-wise, this looks a lot like pre-training and maps cleanly to SPMD. Every GPU worker runs the same step function over a different shard of data and synchronizes via PyTorch distributed primitives.
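This is a toy, single-process illustration of the SPMD pattern, assuming a 1-D linear model and equal-sized shards. Every worker runs the same step function on its own shard. An averaging function stands in for a collective such as `torch.distributed.all_reduce`. All workers apply the same averaged gradient, so their parameters stay identical:

```python
from typing import List, Tuple

Example = Tuple[float, float]  # (x, y) pairs for a toy model y ≈ w * x


def local_gradient(w: float, shard: List[Example]) -> float:
    """d/dw of mean squared error on this worker's shard."""
    return sum(2 * (w * x - y) * x for x, y in shard) / len(shard)


def all_reduce_mean(values: List[float]) -> List[float]:
    """Stand-in for a collective: every worker receives the same average."""
    avg = sum(values) / len(values)
    return [avg] * len(values)


def spmd_step(weights: List[float], shards: List[List[Example]], lr: float) -> List[float]:
    # Same program on every worker, different data shard.
    grads = [local_gradient(w, shard) for w, shard in zip(weights, shards)]
    synced = all_reduce_mean(grads)  # the synchronization point
    return [w - lr * g for w, g in zip(weights, synced)]


data = [(x, 3.0 * x) for x in (1.0, 2.0, 3.0, 4.0)]
shards = [data[0::2], data[1::2]]  # two workers, equal-sized shards
weights = [0.0, 0.0]
for _ in range(50):
    weights = spmd_step(weights, shards, lr=0.05)
print(weights)  # both workers hold the same value, close to 3.0
```

With equal-sized shards, the average of the per-shard mean gradients equals the full-batch gradient.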

On-policy RL changes the shape of the system. The learning signal is often sparse and delayed (e.g., a scalar reward at the end of an episode), and the training step depends on data generated by the current policy. Individual sub-stages (policy updates, rollout generation, reference model inference, reward model scoring) can each be implemented as SPMD workloads. The end-to-end algorithm, however, needs explicit coordination. Artifacts such as prompts, sampled trajectories, rewards, and advantages are constantly handed off across stages, and their lifecycle must be synchronized.

In our original SFT architecture, the driver node was intentionally “thin”. It launched N identical Ray actors, each encapsulating the full training loop, and scaling meant launching more identical workers. That model breaks down for RL. RL required us to decompose the system into distinct roles (Policy, Rollout Workers, Reward Model, Reference Model, etc.) and to evolve the driver into an active controller that encodes the control plane. The controller decides when to generate rollouts, how to batch and score them, when to trigger optimization, and how to manage cluster resources across stages.
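The sketch below is a deliberately simplified, in-process picture of the single-controller pattern. The role classes are hypothetical stand-ins: in the real system each role would be a group of Ray actors running SPMD work, and this is not Verl’s API. What it shows is that the controller owns the ordering. It generates with the current policy version, scores the rollouts, computes mean-centered group advantages, and only then triggers an update. A reference-model pass for a KL penalty would slot in between scoring and the update:

```python
from dataclasses import dataclass
from typing import Dict, List

Rollout = Dict[str, object]


@dataclass
class Policy:
    version: int = 0

    def update(self, batch: List[Rollout]) -> None:
        # Stand-in for an SPMD optimizer step over the policy's GPU group.
        self.version += 1


class RolloutWorkers:
    def generate(self, prompts: List[str], policy_version: int, group_size: int) -> List[Rollout]:
        # Stand-in for batched sampling from the current policy weights.
        return [
            {"prompt": p, "completion": f"{p}/sample{i}", "policy_version": policy_version}
            for p in prompts
            for i in range(group_size)
        ]


class RewardModel:
    def score(self, rollouts: List[Rollout]) -> List[float]:
        # Toy reward: only the first sample of each group "succeeds".
        return [1.0 if str(r["completion"]).endswith("sample0") else 0.0 for r in rollouts]


class Controller:
    """Single controller: owns the control plane and hands artifacts between roles."""

    def __init__(self, policy: Policy, rollout: RolloutWorkers, reward: RewardModel, group_size: int):
        self.policy, self.rollout, self.reward = policy, rollout, reward
        self.group_size = group_size
        self.log: List[str] = []

    def step(self, prompts: List[str]) -> List[Rollout]:
        # 1) Generate with the *current* policy version (on-policy).
        batch = self.rollout.generate(prompts, self.policy.version, self.group_size)
        self.log.append(f"generate@v{self.policy.version}")
        # 2) Score the rollouts.
        rewards = self.reward.score(batch)
        self.log.append("score")
        # 3) Mean-centered advantages within each prompt's group.
        for start in range(0, len(batch), self.group_size):
            group = rewards[start:start + self.group_size]
            mean = sum(group) / len(group)
            for offset, r in enumerate(group):
                batch[start + offset]["advantage"] = r - mean
        # 4) Trigger optimization only once the batch is complete.
        self.policy.update(batch)
        self.log.append(f"update->v{self.policy.version}")
        return batch


controller = Controller(Policy(), RolloutWorkers(), RewardModel(), group_size=2)
first = controller.step(["p0", "p1"])
second = controller.step(["p2"])
print(controller.log)
```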

Figure 4 highlights this shift. To add RL support without reinventing distributed orchestration from scratch, we integrated the core infrastructure from the open-source Verl library to manage Ray actor lifecycle and GPU resource allocation. Leveraging Verl’s backend let us focus on the “modeling surface area”, meaning our Data/Model/Compute abstractions and internal optimizations, while keeping orchestration concerns decoupled. The result is a hybrid design with a unified user interface: developers can move between SFT and RL workflows without adopting an entirely different mental model or API set.

Hugging Face-Centric Experience

The Hugging Face Hub has effectively become the default distribution channel for open-weight LLMs, tokenizers, and configs. We designed the framework to stay close to that ecosystem rather than create an isolated internal standard. Even when we use optimized internal model representations for speed, we load and save checkpoints in standard Hugging Face formats. This avoids “walled garden” friction and lets teams pull in new architectures, weights, and tokenizers quickly.

This philosophy also shaped our tokenizer story. Early on, we bound directly to low-level tokenization libraries (e.g., SentencePiece, tiktoken) to maximize control. In practice, that created a costly failure mode: silent training–serving skew. Our inference stack (vLLM) defaults to Hugging Face AutoTokenizer. Tiny differences in normalization, special token handling, or chat templating can yield different token boundaries, exactly the kind of mismatch that later shows up as inexplicable quality regressions. We fixed this by making Hugging Face AutoTokenizer the single source of truth. We then built a thin compatibility layer (BaseHFModelTokenizer) to handle post-training needs: setting padding tokens, injecting generation markers to support loss masking, and managing special tokens and semantic IDs. Throughout, it ensures the byte-level tokenization path matches production.
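One inexpensive guard against this failure mode is a parity check. It runs the same probe strings through the training and serving tokenization paths and reports any difference. The sketch assumes both paths are exposed as `str -> list[int]` callables; in practice these might be a training tokenizer and the Hugging Face `AutoTokenizer` instance the serving stack loads. The toy tokenizers below differ only in normalization, and all names are illustrative:

```python
from typing import Callable, Dict, List, Tuple

Tokenizer = Callable[[str], List[int]]


def find_tokenization_skew(
    train_tokenize: Tokenizer, serve_tokenize: Tokenizer, probes: List[str]
) -> Dict[str, Tuple[List[int], List[int]]]:
    """Return every probe whose token ids differ between the training and serving paths."""
    mismatches: Dict[str, Tuple[List[int], List[int]]] = {}
    for text in probes:
        train_ids, serve_ids = train_tokenize(text), serve_tokenize(text)
        if train_ids != serve_ids:
            mismatches[text] = (train_ids, serve_ids)
    return mismatches


# Toy character-level tokenizers that differ only in normalization (lower-casing).
def train_tok(text: str) -> List[int]:
    return [ord(c) for c in text.lower()]


def serve_tok(text: str) -> List[int]:
    return [ord(c) for c in text]


skew = find_tokenization_skew(train_tok, serve_tok, ["hello", "Hello"])
print(sorted(skew))  # ['Hello']
```

Useful probes include chat-templated conversations, special tokens, semantic IDs, and unusual whitespace or Unicode, because normalization differences tend to surface there.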

We take a different approach for model implementations. Rather than training directly on transformers model classes, we maintain our own optimized, unified model definitions that can still load and save Hugging Face checkpoints. This layer enables framework-level optimizations without re-implementing them for every model family: FlexAttention, memory-efficient chunked cross-entropy, consistent MFU accounting, and uniform LoRA extensibility. A unified module naming convention also makes it feasible to programmatically locate and swap components (Attention, MLP, output heads) across architectures. It also provides a consistent surface for Tensor Parallelism and FSDP wrapping policies.
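The sketch below shows why a unified naming convention helps. If every architecture exposes modules under predictable dotted names, one function can find and wrap targets by suffix. The names (`layers.{i}.attn.q_proj`, and so on), the pure-Python `Linear`, and the `LoRALinear` wrapper are illustrative assumptions. The wrapper follows the standard LoRA formulation y = Wx + (alpha / r) * B(Ax). B is initialized to zero, so the model’s output is unchanged at the moment of the swap:

```python
import random
from typing import Dict, List


class Linear:
    """Toy dense layer: y = W x, with W stored as out_features rows."""

    def __init__(self, in_features: int, out_features: int, rng: random.Random):
        self.weight = [[rng.uniform(-1.0, 1.0) for _ in range(in_features)] for _ in range(out_features)]
        self.trainable = True

    def __call__(self, x: List[float]) -> List[float]:
        return [sum(w * xi for w, xi in zip(row, x)) for row in self.weight]


class LoRALinear:
    """y = W x + (alpha / r) * B (A x), with the base W frozen and B initialized to zero."""

    def __init__(self, base: Linear, r: int, alpha: float, rng: random.Random):
        in_features, out_features = len(base.weight[0]), len(base.weight)
        self.base = base
        self.base.trainable = False  # freeze the pretrained weights
        self.lora_a = [[rng.gauss(0.0, 0.02) for _ in range(in_features)] for _ in range(r)]
        self.lora_b = [[0.0] * r for _ in range(out_features)]
        self.scale = alpha / r

    def __call__(self, x: List[float]) -> List[float]:
        low_rank = [sum(a * xi for a, xi in zip(row, x)) for row in self.lora_a]
        delta = [sum(b * z for b, z in zip(row, low_rank)) for row in self.lora_b]
        return [y + self.scale * d for y, d in zip(self.base(x), delta)]


def apply_lora(modules: Dict[str, object], target_suffixes: List[str], r: int, alpha: float, seed: int = 0) -> List[str]:
    """Wrap every Linear whose dotted name ends with one of the target suffixes."""
    rng = random.Random(seed)
    swapped: List[str] = []
    for name, module in list(modules.items()):
        if isinstance(module, Linear) and any(name.endswith(s) for s in target_suffixes):
            modules[name] = LoRALinear(module, r, alpha, rng)
            swapped.append(name)
    return swapped


init_rng = random.Random(0)
modules: Dict[str, object] = {
    f"layers.{i}.{part}": Linear(4, 4, init_rng)
    for i in range(2)
    for part in ("attn.q_proj", "attn.v_proj", "mlp.up_proj")
}
x = [1.0, 2.0, 3.0, 4.0]
before = modules["layers.0.attn.q_proj"](x)
swapped = apply_lora(modules, ["attn.q_proj", "attn.v_proj"], r=2, alpha=4.0)
after = modules["layers.0.attn.q_proj"](x)
print(swapped)          # the four attention projections; MLP layers are untouched
print(after == before)  # True, because B starts at zero
```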

The trade-off is clear: supporting a new model family requires building a bridge between the Hugging Face reference implementation and our internal definition. To reduce that overhead, we use AI coding agents to automate much of the conversion work, with a strict logit verifier as the gate. Given random inputs, our internal model must match the Hugging Face logits within tolerance. Because the acceptance criterion is mechanically checkable, agents can iterate autonomously until the implementation is correct, dramatically shortening the time-to-support for new architectures.
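The acceptance gate can be sketched as a function that feeds identical random token IDs to both implementations. It then compares every logit using the same rule as `torch.allclose`, |a - b| <= atol + rtol * |b|. The helper name, toy models, and default tolerances are placeholders. Reduced-precision (e.g., bf16) comparisons typically need looser tolerances:

```python
import math
import random
from typing import Callable, List

Model = Callable[[List[int]], List[List[float]]]


def logits_match(
    reference: Model,
    candidate: Model,
    vocab_size: int,
    seq_len: int,
    trials: int = 8,
    atol: float = 1e-5,
    rtol: float = 1e-4,
    seed: int = 0,
) -> bool:
    """True if candidate logits match reference logits on random inputs."""
    rng = random.Random(seed)
    for _ in range(trials):
        tokens = [rng.randrange(vocab_size) for _ in range(seq_len)]
        ref, cand = reference(tokens), candidate(tokens)
        if len(ref) != len(cand):
            return False
        for ref_row, cand_row in zip(ref, cand):
            if len(ref_row) != len(cand_row):
                return False
            for b, a in zip(ref_row, cand_row):
                if abs(a - b) > atol + rtol * abs(b):  # torch.allclose rule
                    return False
    return True


VOCAB = 16


def reference_model(tokens: List[int]) -> List[List[float]]:
    return [[math.sin(t * (v + 1)) for v in range(VOCAB)] for t in tokens]


def faithful_port(tokens: List[int]) -> List[List[float]]:
    # Same math plus tiny numerical noise (e.g., a different op order).
    return [[x + 1e-7 for x in row] for row in reference_model(tokens)]


def buggy_port(tokens: List[int]) -> List[List[float]]:
    # Off-by-one bug in the token index.
    return [[math.sin((t + 1) * (v + 1)) for v in range(VOCAB)] for t in tokens]


print(logits_match(reference_model, faithful_port, VOCAB, seq_len=5))  # True
print(logits_match(reference_model, buggy_port, VOCAB, seq_len=5))     # False
```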

Today, this design means we can only train architectures we explicitly support. This is an intentional constraint shared by other high-performance systems like vLLM, SGLang, and torchtitan. To broaden coverage, we plan to add a fallback Hugging Face backend, similar to the compatibility patterns these projects use. Users will be able to run training directly on native transformers models for rapid exploration of novel architectures, with the understanding that some framework optimizations and features may not apply in that mode.

Providing Differential Value

A post-training framework is only worth owning if it delivers clear value beyond assembling OSS components. We build on open source for velocity, but we invest heavily where off-the-shelf tools tend to be weakest: performance tuned to our workload characteristics, and integration with Netflix-specific model and business requirements. Here are some concrete examples:

First, we optimize training efficiency for our real use cases. A representative example is high variance in sequence length. In FSDP-style training, long-tail sequences create stragglers: faster workers end up waiting at synchronization points for the slowest batch, reducing utilization. Standard bin-packing approaches help, but running them offline at our data scale can add substantial preprocessing latency and make it harder to keep datasets fresh. Instead, we built on-the-fly sequence packing that streams samples from storage and dynamically packs them in memory. Packing runs asynchronously, overlapping CPU work with GPU compute. Figure 5 shows the impact: for our most skewed dataset, on-the-fly packing improved the effective token throughput by up to 4.7x.
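Here is a stdlib-only sketch of asynchronous on-the-fly packing, assuming samples arrive as an iterator of token lists. A background thread packs greedily into fixed-length rows. The consumer (the training loop) pulls finished rows from a bounded queue, which caps memory and lets packing overlap with training work. Document IDs for the attention mask are omitted for brevity, and names are illustrative. A production pipeline may use processes rather than threads for CPU-heavy packing:

```python
import queue
import threading
from typing import Iterable, Iterator, List

_DONE = object()  # sentinel marking the end of the stream


def pack_stream(samples: Iterable[List[int]], seq_len: int, pad_id: int = 0) -> Iterator[List[int]]:
    """Greedy, in-order packing of a (possibly unbounded) stream into fixed-length rows."""
    row: List[int] = []
    for sample in samples:
        sample = sample[:seq_len]
        if row and len(row) + len(sample) > seq_len:
            yield row + [pad_id] * (seq_len - len(row))
            row = []
        row.extend(sample)
    if row:
        yield row + [pad_id] * (seq_len - len(row))


def async_packed_rows(
    samples: Iterable[List[int]], seq_len: int, pad_id: int = 0, prefetch: int = 4
) -> Iterator[List[int]]:
    """Pack on a background thread; the caller consumes rows as they become ready."""
    buf: "queue.Queue[object]" = queue.Queue(maxsize=prefetch)
    errors: List[BaseException] = []

    def producer() -> None:
        try:
            for row in pack_stream(samples, seq_len, pad_id):
                buf.put(row)  # blocks when the consumer falls behind (back-pressure)
        except BaseException as exc:  # surface producer failures to the consumer
            errors.append(exc)
        finally:
            buf.put(_DONE)

    threading.Thread(target=producer, daemon=True).start()
    while True:
        item = buf.get()
        if item is _DONE:
            if errors:
                raise errors[0]
            return
        yield item  # type: ignore[misc]


rows = list(async_packed_rows(iter([[1, 2, 3], [4, 5], [6, 7, 8, 9]]), seq_len=6))
print(rows)  # [[1, 2, 3, 4, 5, 0], [6, 7, 8, 9, 0, 0]]
```

The bounded queue provides back-pressure: if the training loop falls behind, the producer blocks instead of buffering the whole stream.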

We also encountered subtler performance cliffs around vocabulary expansion. Our workloads frequently add custom tokens and semantic IDs. We found that certain vocabulary sizes could cause the language model head to fall back from a highly optimized cuBLAS kernel to a much slower CUTLASS path, tripling that layer’s execution time. The framework now automatically pads vocabulary sizes to multiples of 64 so the fast kernel is selected. This preserves throughput without requiring developers to know these low-level constraints.
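The padding rule itself is simple ceiling arithmetic. In this sketch the extra IDs are assumed to be unused placeholder rows in the embedding and output head. A common practice is to keep them out of training labels and mask them out at sampling time. The function name is illustrative:

```python
def padded_vocab_size(vocab_size: int, multiple: int = 64) -> int:
    """Round vocab_size up to the next multiple; the extra rows are unused placeholders."""
    if vocab_size <= 0 or multiple <= 0:
        raise ValueError("vocab_size and multiple must be positive")
    return -(-vocab_size // multiple) * multiple  # ceiling division, then scale back up


# A 128,256-token vocabulary is already a multiple of 64; adding 3 custom tokens is not.
print(padded_vocab_size(128256))      # 128256
print(padded_vocab_size(128256 + 3))  # 128320
```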

Second, owning the framework lets us support “non-standard” transformer use cases that generic LLM tooling rarely targets. For example, some internal models are trained on member interaction event sequences rather than natural language. These models may require bespoke RL loops that integrate with highly customized inference engines and optimize business-defined metrics. Such workflows demand custom environments, reward computation, and orchestration patterns, while still needing the same underlying guarantees around performance, monitoring, and fault tolerance. The framework is built to accommodate these specialized requirements without fragmenting into one-off pipelines, which enables rapid iteration.

Wrap up

Building the Netflix Post-Training Framework has been a constant exercise in balancing standardization with specialization. By staying anchored to the open-source ecosystem, we’ve avoided drifting into a proprietary stack that diverges from where the community is moving. At the same time, by owning the core abstractions around Data, Model, Compute, and Workflow, we’ve preserved the freedom to optimize for Netflix-scale training and Netflix-specific requirements.

In the process, we’ve moved post-training from a loose collection of scripts to a managed, scalable system. Whether the goal is maximizing SFT throughput, orchestrating multi-stage on-policy RL, or training transformers over member interaction sequences, the framework provides a consistent set of primitives to do so reliably and efficiently. As the field shifts toward more agentic, reasoning-heavy, and multimodal architectures, this foundation will help us translate new ideas into scalable GenAI prototypes. The goal is for experimentation to be constrained by our imagination, not by operational complexity.

Acknowledgements

This work builds on the momentum of the broader open-source ML community. We’re especially grateful to the teams and contributors behind Torchtune, Torchtitan, and Verl. Their reference implementations and design patterns informed many of our training framework decisions, particularly around scalable training recipes, distributed execution, and RL-oriented orchestration. We also thank our partner teams in Netflix AI for Member Systems for close collaboration, feedback, and shared problem-solving throughout the development and rollout of the Post-Training Framework. Finally, we thank the Training Platform team for providing the robust infrastructure and operational foundation that makes large-scale post-training possible.