Authors: Harshad Sane, Andrew Halaney

Imagine this: you press play on Netflix on a Friday night, and behind the scenes hundreds of containers spring into action within a few seconds to answer your call. At Netflix, scaling containers efficiently is critical to delivering a seamless streaming experience to millions of members worldwide. To keep up with responsiveness at this scale, we modernized our container runtime, only to hit a surprising bottleneck: the CPU architecture itself.

Let us walk you through the story of how we diagnosed the problem and what we learned about scaling containers at the hardware level.

The Problem

When application demand requires that we scale up our servers, we get a new instance from AWS. To use this new capacity efficiently, pods are assigned to the node until its resources are considered fully allocated. A node can go from having no applications running to being maxed out within moments of being ready to receive those applications.

As we migrated more and more workloads from our old container platform to our new container platform, we started seeing some concerning trends. Some nodes were stalling for long periods of time, with a simple health check timing out after 30 seconds. An initial investigation showed that the mount table length was growing dramatically in these situations, and reading it alone could take upwards of 30 seconds. Looking at systemd’s stack, it was clear that it was busy processing these mount events as well, which could lead to complete system lockup. Kubelet also frequently timed out talking to containerd during this period. Examining the mount table made it clear that these mounts were related to container creation.

The affected nodes were almost all r5.metal instances, and were starting applications whose container image contained many layers (50+).
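As a rough illustration of how the mount table can be inspected, the sketch below counts mount entries by filesystem type from text in the Linux mountinfo format. The function name `summarize_mountinfo` and the sample lines are hypothetical and are not taken from our tooling; on a real node the input would be the contents of `/proc/self/mountinfo`.

```python
from collections import Counter


def summarize_mountinfo(text: str) -> Counter:
    """Count mount table entries by filesystem type.

    Each mountinfo line ends with ' - <fstype> <source> <super options>',
    so the filesystem type is the first field after the ' - ' separator.
    """
    counts = Counter()
    for line in text.splitlines():
        _, sep, tail = line.partition(" - ")
        fields = tail.split()
        if not sep or not fields:
            continue  # skip blank or malformed lines
        counts[fields[0]] += 1
    return counts


# Hypothetical sample in mountinfo format (not captured from a real node).
SAMPLE = """\
36 35 98:0 /mnt1 /mnt2 rw,noatime master:1 - ext3 /dev/root rw,errors=continue
90 36 0:52 / /run/demo/rootfs rw,relatime - overlay overlay rw
91 36 98:0 /layers/1 /run/demo/bind1 rw,relatime - ext3 /dev/root rw
"""

print(summarize_mountinfo(SAMPLE).most_common())
```

On a Linux host, `summarize_mountinfo(open("/proc/self/mountinfo").read())` gives the same breakdown for the live mount table; a total that keeps growing while containers start would match the symptom described above.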

Problem

Mount Lock Contention

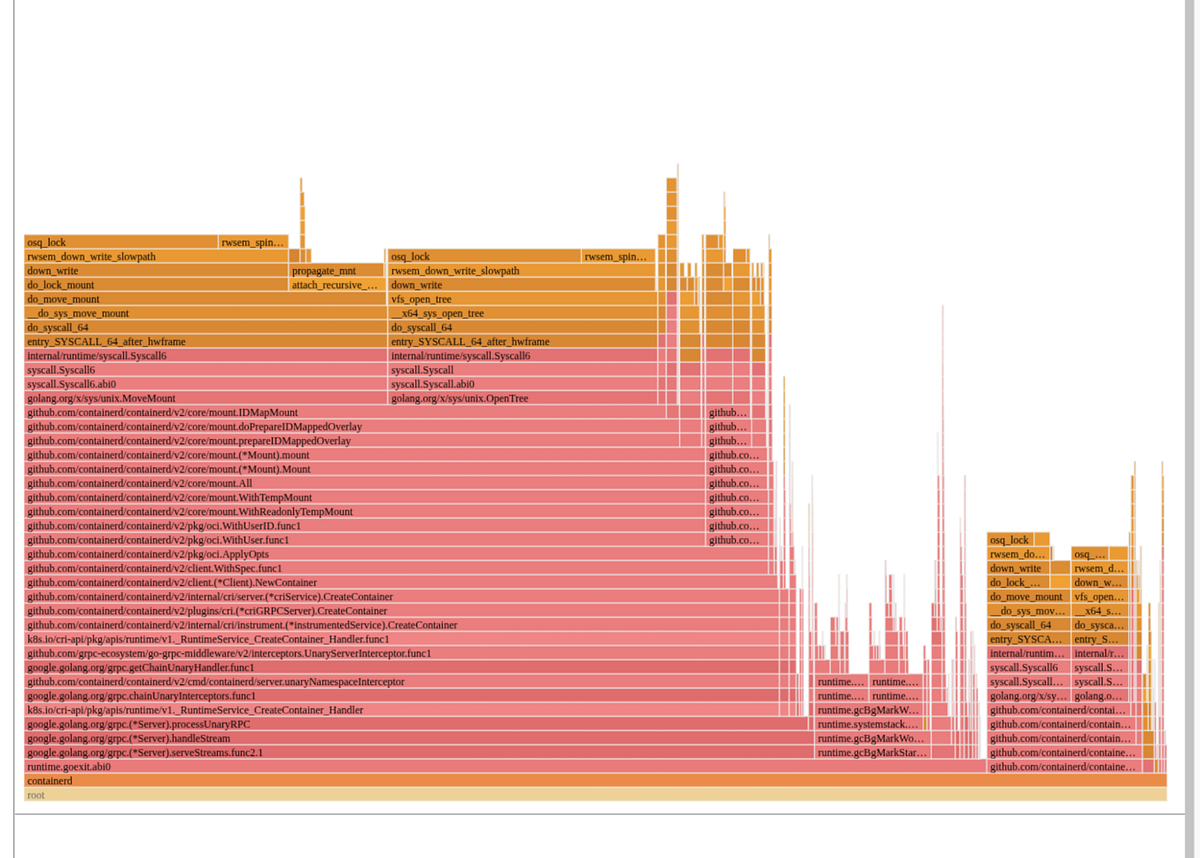

The flamegraph in Figure 1 clearly shows where containerd spent its time. Almost all of the time is spent trying to grab a kernel-level lock as part of the various mount-related activities when assembling the container’s root filesystem!

Looking closer, containerd executes the following calls for each layer if using user namespaces:

- open_tree() to get a reference to the layer / listing

- mount_setattr() to set the idmap to match the container’s user range, shifting the ownership so this container can access the files

- move_mount() to create a bind mount on the host with this new idmap utilized

These bind mounts are owned by the container’s user range and are then used as the lowerdirs to create the overlayfs-based rootfs for the container. Once the overlayfs rootfs is mounted, the bind mounts are unmounted, since they are no longer needed once the overlayfs is constructed.

If a node is starting many containers at once, every CPU ends up busy trying to execute these mounts and umounts. The kernel VFS has various global locks related to the mount table, and each of these mounts requires taking that lock, as we can see at the top of the flamegraph. Any system trying to quickly set up many containers is prone to this, and the severity is a function of the number of layers in the container image.

For example, assume a node is starting 100 containers, each with 50 layers in its image. Each container will need 50 bind mounts to do the idmap for each layer. The container’s overlayfs mount will be created using those bind mounts as the lower directories, and then all 50 bind mounts can be cleaned up via umount. Containerd actually goes through this process twice, once to determine some user information in the image and once to create the actual rootfs. This means the total number of mount operations on the startup path for our 100 containers is 100 * 2 * (1 + 50 + 50) = 20200 mounts, all of which require grabbing various global mount-related locks!
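The arithmetic above can be written as a small helper, which makes it easy to see how the total grows with the layer count. The function name `startup_mount_ops` is hypothetical and introduced only for illustration; it encodes the formula from the example, nothing more.

```python
def startup_mount_ops(containers: int, layers: int, passes: int = 2) -> int:
    """Mount-related operations on the startup path, per the example above.

    Each pass performs 1 overlayfs mount, `layers` idmapped bind mounts,
    and `layers` umounts; containerd makes two passes per container.
    """
    per_pass = 1 + layers + layers
    return containers * passes * per_pass


print(startup_mount_ops(100, 50))  # 20200
```

Halving the number of layers roughly halves the total, which is why images with many layers were hit hardest.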

Analysis

What’s Different In The New Runtime?

As alluded to in the introduction, Netflix has been modernizing its container runtime. In the past a virtual kubelet + docker solution was used, whereas now a kubelet + containerd solution is being used. Both the old runtime and the new runtime used user namespaces, so what’s the difference here?

- Old Runtime:

All containers shared a single host user range. UIDs in image layers were shifted at untar time, so file permissions matched when containers accessed files. This worked because all containers used the same host user.

- New Runtime:

Each container gets a unique host user range, improving security: if a container escapes, it can only affect its own files. To avoid the costly process of untarring and shifting UIDs for every container, the new runtime uses the kernel’s idmap feature. This allows efficient UID mapping per container without copying or changing file ownership, which is why containerd performs many mounts.
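To make the idea of a per-container user range concrete, here is a toy model of range-based ID translation. It does not call any kernel API; the `IdMap` class and the specific ranges are assumptions chosen for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IdMap:
    """One contiguous ID mapping: container IDs -> host IDs."""
    container_start: int  # first ID as seen inside the container
    host_start: int       # first ID of the host range backing it
    length: int           # number of IDs in the range

    def to_host(self, container_id: int) -> int:
        offset = container_id - self.container_start
        if not 0 <= offset < self.length:
            raise ValueError(f"ID {container_id} is outside the mapped range")
        return self.host_start + offset


# Hypothetical, non-overlapping host ranges for two containers.
container_a = IdMap(container_start=0, host_start=100_000, length=65_536)
container_b = IdMap(container_start=0, host_start=165_536, length=65_536)

# UID 1000 inside each container lands on a different host UID.
print(container_a.to_host(1000), container_b.to_host(1000))  # 101000 166536
```

In the old runtime every container would effectively share one such mapping, so shifting file ownership once at untar time was enough. With a distinct mapping per container, the shift has to be applied per container, which is the job the idmapped mounts take on.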

Figure 2 below is a simplified example of what this idmap feature looks like:

Why Does Instance Type Matter?

As noted earlier, the issue was predominantly occurring on r5.metal instances. Once we identified the root issue, we could easily reproduce it by creating a container image with many layers and sending hundreds of workloads using the image to a test node.

To better understand why this bottleneck was more pronounced on some instances than on others, we benchmarked container launches on different AWS instance types:

- r5.metal (5th gen Intel, dual-socket, multiple NUMA domains)

- m7i.metal-24xl (7th gen Intel, single-socket, single NUMA domain)

- m7a.24xlarge (7th gen AMD, single-socket, single NUMA domain)

Baseline Results

Figure 3 shows the baseline results from scaling containers on each instance type:

- At low concurrency (≤ ~20 containers), all platforms performed similarly

- As concurrency increased, r5.metal began to fail around 100 containers

- 7th generation AWS instances maintained lower launch times and higher success rates as concurrency grew

- m7a instances showed the most consistent scaling behavior with the lowest failure rates even at high concurrency

Deep Dive

Using perf record and custom microbenchmarks, we can see that the hottest code path was in the Linux kernel’s Virtual Filesystem (VFS) path lookup code: specifically, a tight spin loop waiting on a sequence lock in path_init(). The CPU spent most of its time executing the pause instruction, indicating that many threads were spinning, waiting for the global lock, as shown in the disassembly snippet below:

path_init():

…

mov mount_lock,%eax

test $0x1,%al

je 7c

pause

…

Using Intel’s Topdown Microarchitecture Analysis (TMA), we observed:

- 95.5% of pipeline slots were stalled on contested accesses (tma_contested_accesses).

- 57% of slots were due to false sharing (multiple cores accessing the same cache line).

- Cache line bouncing and lock contention were the primary culprits.
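To make the spin loop in path_init() more concrete, here is a toy, single-threaded model of the reader side of a sequence lock. It is an illustration only, not kernel code: `ToySeqLock` and `fake_pause` are hypothetical names, and the "writer" is simulated by a callback that finishes its update after a fixed number of spins.

```python
from typing import Callable, Tuple


class ToySeqLock:
    """Sequence counter: odd while a writer is active, even otherwise."""

    def __init__(self) -> None:
        self.seq = 0

    def write_begin(self) -> None:
        self.seq += 1  # now odd: readers must wait

    def write_end(self) -> None:
        self.seq += 1  # even again: readers may proceed

    def read_begin(self, relax: Callable[[], None]) -> Tuple[int, int]:
        """Spin while the counter is odd; return (sequence, spins)."""
        spins = 0
        while self.seq & 1:  # same low-bit check as `test $0x1,%al`
            relax()          # stand-in for the `pause` instruction
            spins += 1
        return self.seq, spins

    def read_retry(self, start: int) -> bool:
        """True if a writer ran since read_begin, so the read must be redone."""
        return self.seq != start


lock = ToySeqLock()
lock.write_begin()  # a simulated writer holds the lock

remaining = [3]     # the simulated writer finishes after 3 reader spins


def fake_pause() -> None:
    remaining[0] -= 1
    if remaining[0] == 0:
        lock.write_end()


seq, spins = lock.read_begin(fake_pause)
print(seq, spins)  # 2 3
```

Every mount or umount is a writer in this picture, so with thousands of them in flight, path lookups on every CPU spend their time in the `while` loop, all polling the same counter.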

Given the large amount of time being spent in contested accesses, thinking about the hardware differences between these instances naturally led us to investigate the impact of NUMA and hyperthreading.

NUMA Effects

Non-Uniform Memory Access (NUMA) is a system design where each processor has its own local memory for faster access but relies on an interconnect to access the memory attached to a remote processor. Introduced in the 1990s to improve scalability in multiprocessor systems, NUMA boosts performance but also introduces higher latency when a CPU needs to access memory attached to another processor. Figure 4 is a simple image describing local vs remote access patterns of a NUMA architecture.

AWS instances come in a variety of shapes and sizes. To obtain the largest core count, we tested the 2-socket 5th generation metal instances (r5.metal), on which containers were orchestrated by the Titus agent. Modern dual-socket architectures implement a NUMA design, leading to faster local but higher remote access latencies. Although container orchestration can maintain locality, global locks can easily run into high latency effects due to remote synchronization. To test the impact of NUMA, we compared an AWS 48xl sized instance with 2 NUMA nodes or sockets against an AWS 24xl sized instance, which represents a single NUMA node or socket. As seen in Figure 5, the extra hop introduces high latencies, and hence failures, very quickly.
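One way to approximate a single-socket run on a dual-socket Linux host is to restrict a process to the CPUs of one NUMA node. The sketch below is an assumption about how such an experiment could be set up, not a description of the tooling used for the figures; `parse_cpulist` and `pin_to_numa_node` are hypothetical helper names. It relies on the sysfs `cpulist` files and on `os.sched_setaffinity`, both of which are Linux-specific.

```python
import os


def parse_cpulist(text: str) -> set:
    """Parse a sysfs CPU list such as '0-23,48-71' into a set of CPU numbers."""
    cpus = set()
    for part in text.strip().split(","):
        if not part:
            continue
        lo, _, hi = part.partition("-")
        cpus.update(range(int(lo), int(hi or lo) + 1))
    return cpus


def pin_to_numa_node(node: int) -> set:
    """Restrict the calling process to the CPUs of one NUMA node (Linux only)."""
    with open(f"/sys/devices/system/node/node{node}/cpulist") as f:
        cpus = parse_cpulist(f.read())
    os.sched_setaffinity(0, cpus)  # pid 0 means the calling process
    return cpus


print(sorted(parse_cpulist("0-3,8-11")))  # [0, 1, 2, 3, 8, 9, 10, 11]
```

Note that this only constrains where threads run. Contended lock accesses then stay within one socket, but memory placement is not controlled by CPU affinity alone.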

Hyperthreading Effects

- Hyperthreading (HT): Disabling HT on m7i.metal-24xl (Intel) improved container launch latencies by 20–30%, as seen in Figure 6, since hyperthreads compete for shared execution resources, worsening the lock contention. When hyperthreading is enabled, each physical CPU core is split into two logical CPUs (hyperthreads) that share most of the core’s execution resources, such as caches, execution units, and memory bandwidth. While this can improve throughput for workloads that are not fully utilizing the core, it introduces significant challenges for workloads that rely heavily on global locks. With hyperthreading disabled, each thread runs on its own physical core, eliminating this competition for shared resources between hyperthreads. As a result, threads can acquire and release global locks more quickly, reducing overall contention and improving latency for operations that would otherwise share underlying resources.

Why Does Hardware Architecture Matter?

Centralized Cache Architectures

Some modern server CPUs use a mesh-style interconnect to link cores and cache slices, with each intersection managing cache coherence for a subset of memory addresses. In these designs, all communication passes through a central queueing structure, which can only handle one request for a given address at a time. When a global lock (like the mount lock) is under heavy contention, all atomic operations targeting that lock are funneled through this single queue, causing requests to pile up and resulting in memory stalls and latency spikes.

In some well-known mesh-based architectures, as shown in Figure 7 below, this central queue is called the “Table of Requests” (TOR), and it can become a surprising bottleneck when many threads are fighting for the same lock. If you’ve ever wondered why certain CPUs seem to “pause for breath” under heavy contention, this is often the culprit.

Distributed Cache Architectures

Some modern server CPUs use a distributed, chiplet-based architecture (Figure 8), where multiple core complexes, each with its own local last-level cache, are connected via a high-speed interconnect fabric. In these designs, cache coherence is managed within each core complex, and traffic between complexes is handled by a scalable control fabric. Unlike mesh-based architectures with centralized queueing structures, this distributed approach spreads contention across multiple domains, making severe stalls from global lock contention less likely. For those interested in the technical details, public documentation from major CPU vendors provides deeper insight into these distributed cache and chiplet designs.

Here is a comparison of the same workload run on m7i (centralized cache architecture) vs m7a (distributed cache architecture). Note that, to make the two closely comparable, Hyperthreading (HT) was disabled on m7i, given the earlier regression seen in Figure 6, and experiments were run using identical core counts. The result shows a fairly consistent difference in performance of approximately 20%, as shown in Figure 9.

Microbenchmark Results

To test the above theory related to NUMA, HT and microarchitecture, we developed a small microbenchmark which spawns a given number of threads that then spin on a globally contended lock. Running the benchmark at increasing thread counts reveals the latency characteristics of the system under different scenarios. For example, Figure 10 below shows the microbenchmark results with NUMA, HT and different microarchitectures.

Results from this custom synthetic benchmark (pause_bench) showed:

- On r5.metal, eliminating NUMA by only using a single socket significantly drops latency at high thread counts

- On m7i.metal-24xl, disabling hyperthreading further improves scaling

- On m7a.24xlarge, performance scales the best, demonstrating that a distributed cache architecture handles cache-line contention in this case of global locks more gracefully.

Improving Software Architecture

While understanding the impacts of the hardware architecture is important for assessing possible mitigations, the root cause here is contention over a global lock. Working with containerd upstream, we came to two possible solutions:

- Use the newer kernel mount API’s fsconfig() lowerdir+ support to supply the idmap’ed lowerdirs as fds instead of filesystem paths. This avoids the move_mount() syscall mentioned earlier, which requires global locks to attach each layer to the mount table

- Map the common parent directory of all the layers. This makes the number of mount operations go from O(n) to O(1) per container, where n is the number of layers in the image
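The effect of the second option can be sketched with a simple count of mount operations per container setup pass. The per-layer count follows the earlier example (1 overlayfs mount, n bind mounts, n umounts). The parent-directory count of three operations (one overlayfs mount, one idmapped bind mount of the parent, one umount) is an assumption made for illustration and is not taken from the containerd change itself; both function names are hypothetical.

```python
def ops_per_layer_idmap(layers: int) -> int:
    """Per pass: 1 overlayfs mount + n idmapped bind mounts + n umounts."""
    return 1 + layers + layers


def ops_parent_dir_idmap(layers: int) -> int:
    """Per pass (assumed): 1 overlayfs mount + 1 idmapped bind mount + 1 umount.

    The layer count no longer appears in the result, which is the O(1) claim.
    """
    return 1 + 1 + 1


for n in (10, 50, 100):
    print(n, ops_per_layer_idmap(n), ops_parent_dir_idmap(n))
```

Whatever the exact constant turns out to be, the important property is that it stays fixed as images gain layers, so deep images no longer multiply the pressure on the global mount locks.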

Since using the newer API requires a newer kernel, we opted to make the latter change so that more of the community could benefit. With that in place, we no longer see containerd’s flamegraph being dominated by mount-related operations. In fact, as seen in Figure 11 below, we had to highlight them in purple to see them at all!

Conclusion

Our journey migrating to a modern kubelet + containerd runtime at Netflix revealed just how deeply intertwined software and hardware architecture can be when operating at scale. While kubelet/containerd’s use of unique container users brought significant security gains, it also surfaced new bottlenecks rooted in kernel and CPU architecture, particularly when launching hundreds of many-layered container images in parallel. Our investigation highlighted that not all hardware is created equal for this workload: centralized cache management amplified cache contention, while distributed cache designs scaled smoothly under load.

Ultimately, the best solution combined hardware awareness with software improvements. As an immediate mitigation, we chose to route these workloads to CPU architectures that scaled better under these conditions. By changing the software design to minimize per-layer mount operations, we eliminated the global lock as a launch-time bottleneck, unlocking faster, more reliable scaling regardless of the underlying CPU architecture. This experience underscores the importance of holistic performance engineering: understanding and optimizing both the software stack and the hardware it runs on is critical to delivering seamless user experiences at Netflix scale.

We hope these insights will help others navigate the evolving container ecosystem, transforming potential challenges into opportunities for building robust, high-performance platforms.