Searching for the best AI models often turns up a mix of chatbots, image generators, and general AI tools. But if your goal is creating videos, that definition changes fast. The strongest options are no longer just about generating text or images. They’re about generating motion, understanding prompts deeply, and fitting into a real creative workflow.

That’s where most lists fall short. They treat all artificial intelligence apps as interchangeable, even though video generation requires a very different level of control. Things like motion realism, scene consistency, image-to-video flexibility, and iteration speed matter far more than generic output quality.

In 2026, the landscape has shifted. New video generation models are not just experimental tools. They’re becoming core parts of how creators, marketers, and teams produce content at scale. Recent data from the Stanford AI Index Report highlights the rapid rise of multimodal AI models, signaling a clear shift from text-based systems toward video and image generation. From short-form vertical clips to cinematic sequences, the models that matter most are the ones that can actually move ideas forward, not just generate assets in isolation.

The best AI models for most creators in 2026 are the ones built for video generation, not just text. Models like Veo 3, Sora 2, Kling, Hailuo, and Seedance stand out because they handle motion realistically, follow prompts more closely, and support image-to-video AI workflows that match how modern AI apps for creators are actually used.

This guide focuses specifically on those models. Not the most popular AI tools overall, but the ones that are genuinely useful for video creation today.

What does “best AI models” mean if your goal is video generation?

The best video generation models are not the same as the strongest AI systems overall. While many AI tools focus on text or images, video models are evaluated based on motion realism, prompt accuracy, scene consistency, and how easily they fit into a real editing workflow.

When people ask what the best AI is, they’re usually thinking of general-purpose tools like chatbots or image generators. But those models are not built to handle time-based content. Video introduces a different layer of complexity. Frames need to connect smoothly, movement needs to feel natural, and outputs need to stay consistent across sequences.

That’s why not all artificial intelligence apps are useful for creators working with video. A model that generates strong images might still struggle with motion or break continuity between frames. Similarly, a text-focused AI tool might produce great prompts but fail to translate them into usable video outputs.

For video generation, the definition of “best” becomes much more specific. It comes down to a combination of factors:

• How realistic the motion looks

• How closely the model follows prompts

• How well it handles text-to-video AI and image-to-video AI workflows

• How consistent scenes are across clips

• How fast you can iterate and refine outputs

• How well it fits into a broader workflow with other AI tools

This is also why many creators don’t rely on a single tool anymore. They combine different AI video tools for social media depending on the type of content they’re producing, from short-form clips to longer narrative videos.

When you evaluate video models through this lens, the landscape becomes much clearer. Instead of comparing everything under the same category, you start identifying which models are actually built for video creation and which ones are not. That shift is what makes it easier to choose the right tools and avoid wasting time on models that look impressive but don’t translate into usable results.

How we evaluated the best AI models for video generation

The strongest video generation models are not defined by popularity or hype. To identify which AI tools and artificial intelligence apps are actually useful for creators, we evaluated them based on how they perform in real video workflows, not isolated demos.

We focused on a set of practical criteria that reflect how creators actually use these models:

• Output quality: how detailed, sharp, and visually coherent the generated video looks

• Prompt adherence: how accurately the model follows instructions, including style, movement, and scene composition

• Realism and motion: how natural and consistent movement appears across frames

• Image-to-video flexibility: the ability to turn reference images into usable video sequences

• Speed and iteration: how quickly you can generate, test, and refine outputs

• Workflow readiness: how easily the model fits into a broader creation process alongside other AI tools

These criteria matter because video generation is not just about producing a single clip. It’s about creating something you can use, refine, and integrate into a larger content pipeline.

By evaluating models through this lens, the focus shifts away from novelty and toward usability. The best AI models are the ones that consistently deliver results that creators can build on.
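One way to make the six criteria above comparable across models is a simple weighted score. The sketch below is only an illustration: the weights, the 1 to 5 scale, and the example scores are assumptions chosen for the demonstration, not measurements from this guide, and the function name `weighted_score` is hypothetical.

```python
# Illustrative sketch: the weights and scores below are placeholder assumptions,
# not results from any real evaluation.
CRITERIA_WEIGHTS = {
    "output_quality": 0.20,
    "prompt_adherence": 0.20,
    "realism_and_motion": 0.20,
    "image_to_video": 0.15,
    "speed_and_iteration": 0.15,
    "workflow_readiness": 0.10,
}


def weighted_score(scores, weights=CRITERIA_WEIGHTS):
    """Return the weighted average of per-criterion scores (assumed 1-5 scale)."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(scores[name] * w for name, w in weights.items()) / total_weight


# Hypothetical scores for a single, unnamed model.
example_scores = {
    "output_quality": 4,
    "prompt_adherence": 5,
    "realism_and_motion": 4,
    "image_to_video": 3,
    "speed_and_iteration": 2,
    "workflow_readiness": 3,
}

if __name__ == "__main__":
    print(round(weighted_score(example_scores), 2))
```

Adjusting the weights is the point of the exercise: a team testing social clips might raise `speed_and_iteration`, while a team producing polished campaigns might raise `realism_and_motion`.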

Best AI models for video generation in 2026

If you’re looking for the best AI models for video generation in 2026, these are the names worth paying attention to right now. The current landscape of AI tools and artificial intelligence apps is evolving quickly, but a small group of AI video generation tools consistently stands out for its ability to produce usable video, not just impressive demos.

The leading models in this space are built to handle motion, follow prompts accurately, and support workflows like text-to-video and image-to-video, which is what defines the best AI for video generation today. They are not just generating clips. They help creators move from idea to output faster and with more control.

In practice, creators rarely rely on a single model. Instead, they combine multiple AI tools depending on the type of video they’re creating. Different models excel at different tasks, from cinematic generation to fast iteration to avatar-based content. That’s why understanding each model’s strengths matters more than trying to find a single “best” option.

Below is a breakdown of the most relevant video generation models today, including what they’re best at, where they fall short, and who they’re actually useful for.

Veo 3

Use case: Best for high realism and cinematic video generation

Why include: Google positions Veo as its most advanced video generation model, and Veo 3 reflects that with strong motion realism, better prompt interpretation, and improved scene consistency across frames. It supports both text-to-video and image-to-video workflows, including vertical formats and higher-quality outputs that make it suitable for production-level content.

What creators like:

• Very strong motion realism compared to most models

• Better consistency across frames, especially in longer clips

• Handles cinematic prompts and camera directions more accurately

• Produces outputs that feel closer to finished content

Where it falls short:

• Access is still limited compared to more open tools

• Slower generation times, especially for high-quality outputs

• Requires more deliberate prompting to get the best results

• Not ideal for fast iteration or quick social content testing

Who it’s for: Creators and teams focused on high-quality visual output, storytelling, and polished content where realism and control matter more than speed.

Sora 2

Use case: Best for cinematic storytelling and prompt-driven video generation

Why include: Sora 2 is designed to turn detailed prompts into structured video sequences with strong scene composition and timing. It stands out for how well it handles narrative flow, camera movement, and multi-scene generation, making it one of the most advanced models for concept-driven video creation.

What creators like:

• Strong ability to translate detailed prompts into structured scenes

• Handles camera angles and transitions more intentionally than most models

• Better at generating multi-scene or narrative sequences

• Outputs feel directed rather than random

Where it falls short:

• Less suited to fast testing or quick iterations

• Requires well-structured prompts to get consistent results

• Limited availability depending on access

• Not ideal for short-form social content workflows

Who it’s for: Creators focused on storytelling, concept videos, and cinematic sequences where structure and direction matter more than speed.

Kling

Use case: Best for smooth motion and flexible generation modes

Why include: Kling stands out for how it handles movement across frames, making it one of the strongest models for dynamic scenes. It supports both text-to-video and image-to-video workflows and gives creators more flexibility when experimenting with different styles and formats.

What creators like:

• Smooth and natural motion compared to many other models

• Works well for action-heavy or movement-focused scenes

• Supports multiple input types, including text and images

• More flexible when testing different styles and ideas

Where it falls short:

• Output consistency can vary depending on prompt clarity

• Typically requires multiple generations to refine results

• Less control over narrative structure compared to cinematic-focused models

• Visual quality can be less stable in complex scenes

Who it’s for: Creators who prioritize movement, experimentation, and flexibility across different types of video content.

Hailuo 2.3 Pro

Use case: Best for fast iteration and rapid content testing

Why include: Hailuo 2.3 Pro is designed for speed and flexibility, making it one of the most practical models for creators who need to generate and test multiple ideas quickly. It supports both text-to-video and image-to-video workflows, with faster turnaround times that make it easier to refine outputs without long delays.

What creators like:

• Faster generation compared to most high-quality models

• Easy to test multiple prompts and variations quickly

• Supports both text-to-video and image-to-video inputs

• Useful for early-stage ideation and content testing

Where it falls short:

• Output quality is less consistent compared to realism-focused models

• Motion and detail can vary across generations

• Less control over complex scenes or structured narratives

• Outputs often require refinement before final use

Who it’s for: Creators who prioritize speed, experimentation, and rapid iteration over polished final output.

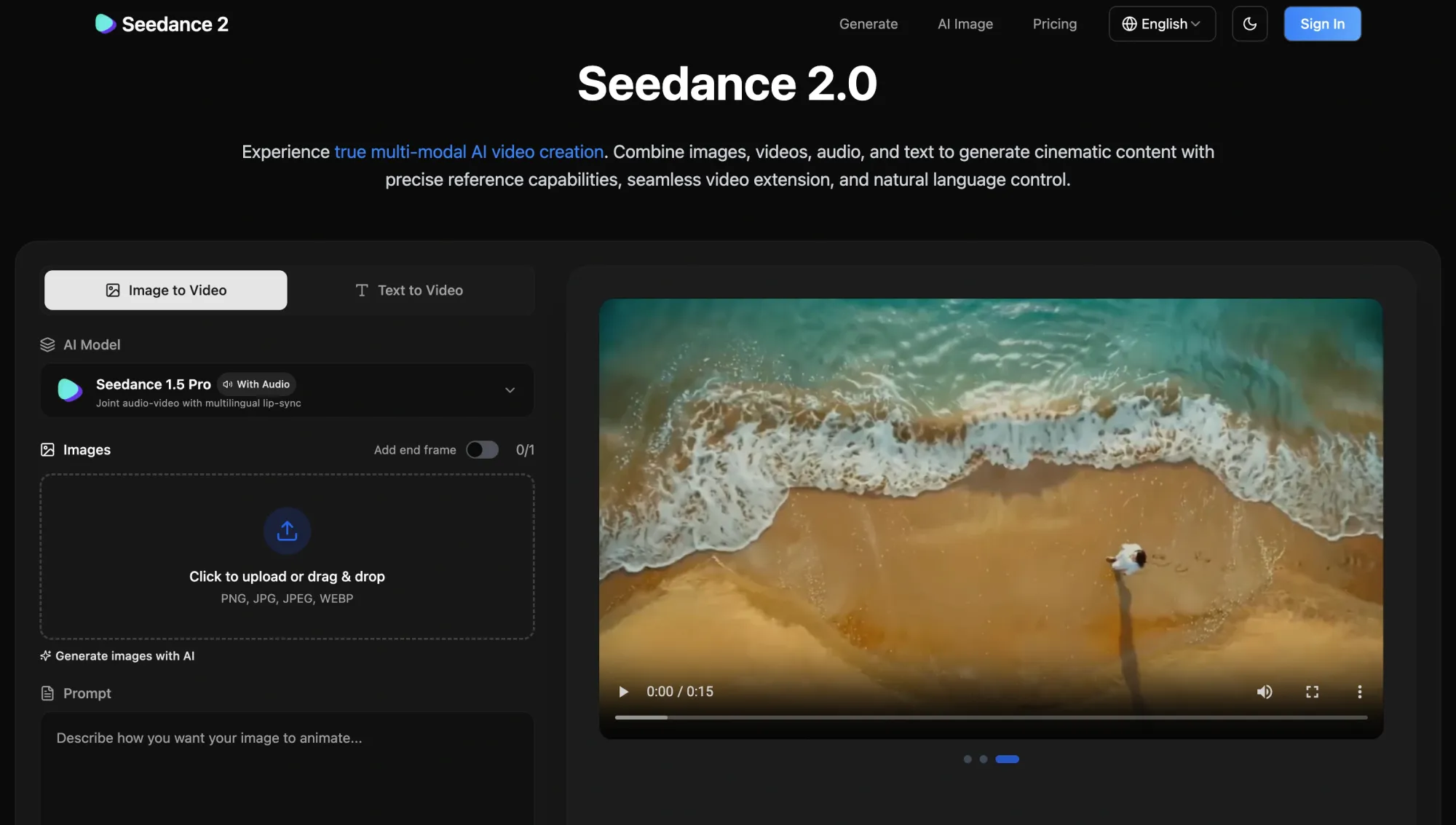

Seedance 1.5 Pro / Seedance 2.0

Use case: Best for balanced text-to-video and image-to-video workflows

Why include: Seedance models offer a flexible middle ground between speed and quality, making them useful across different types of video generation tasks. They support both text-to-video and image-to-video workflows and are often used when creators want consistent results without committing to a single specialized model.

What creators like:

• Balanced performance across quality, speed, and flexibility

• Works well for both text-to-video and image-to-video inputs

• More predictable outputs compared to highly experimental models

• Useful for testing ideas without switching tools constantly

Where it falls short:

• Doesn’t specialize in one area, like realism or storytelling

• Output quality can feel average compared to top-tier models

• Less advanced control over cinematic scenes

• Not the fastest option for rapid iteration

Who it’s for: Creators who want a reliable, versatile model that works across multiple use cases without needing constant switching.

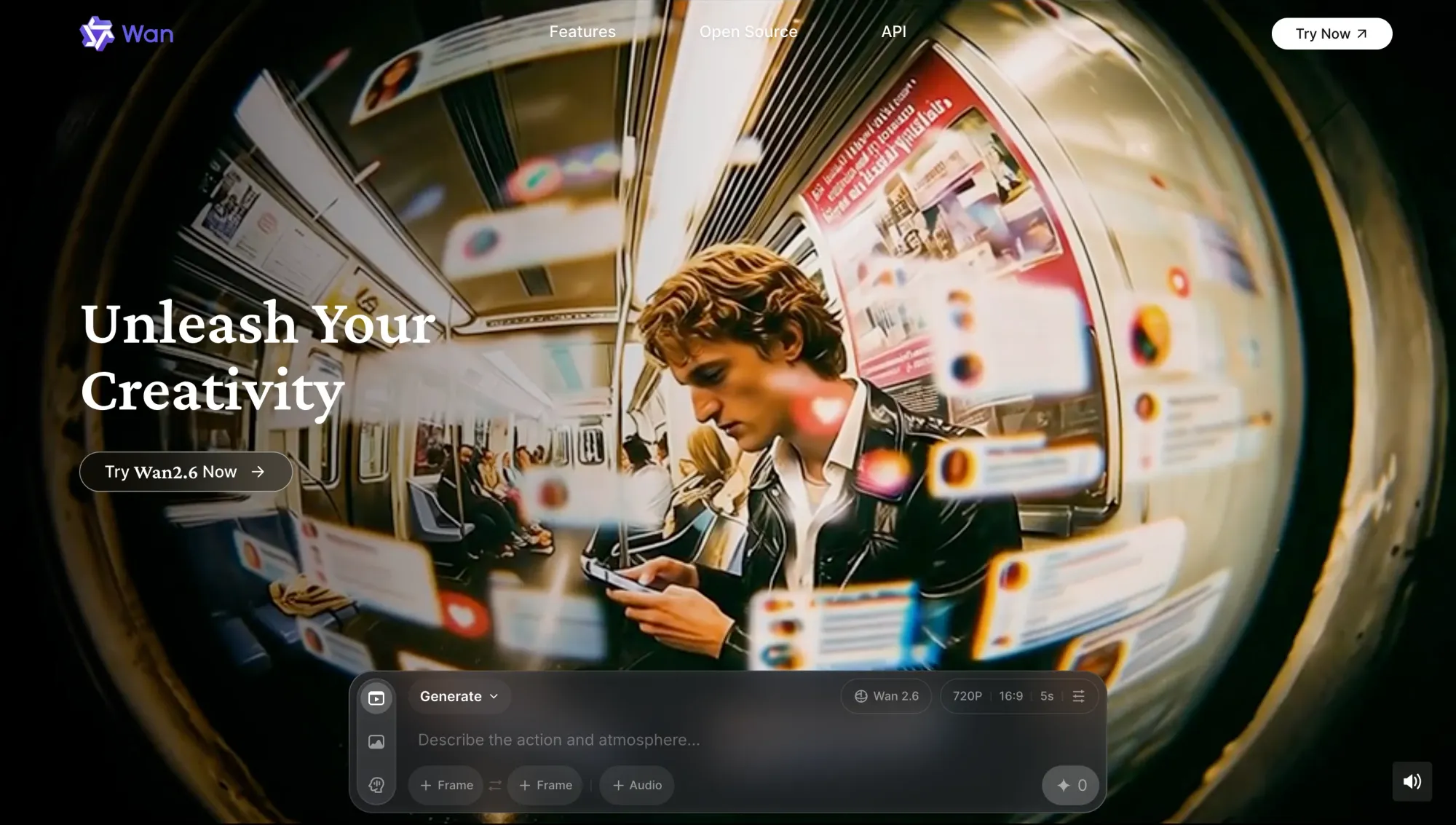

Wan 2.6

Use case: Best for reference-based video generation and multi-input control

Why include: Wan 2.6 stands out for its ability to generate video based on reference inputs, including images and structured prompts. It gives creators more control over how scenes evolve, making it useful for projects where visual consistency and direction matter across multiple clips.

What creators like:

• Strong support for reference-based generation using images

• More control over how scenes evolve across clips

• Useful for maintaining visual consistency in sequences

• Works well for structured and repeatable workflows

Where it falls short:

• Requires more setup compared to simpler prompt-based models

• Slower to use when testing quick ideas

• The interface and workflow can feel less intuitive

• Output quality depends heavily on input quality

Who it’s for: Creators who want more control over inputs and consistency, especially when working with references or structured visual concepts.

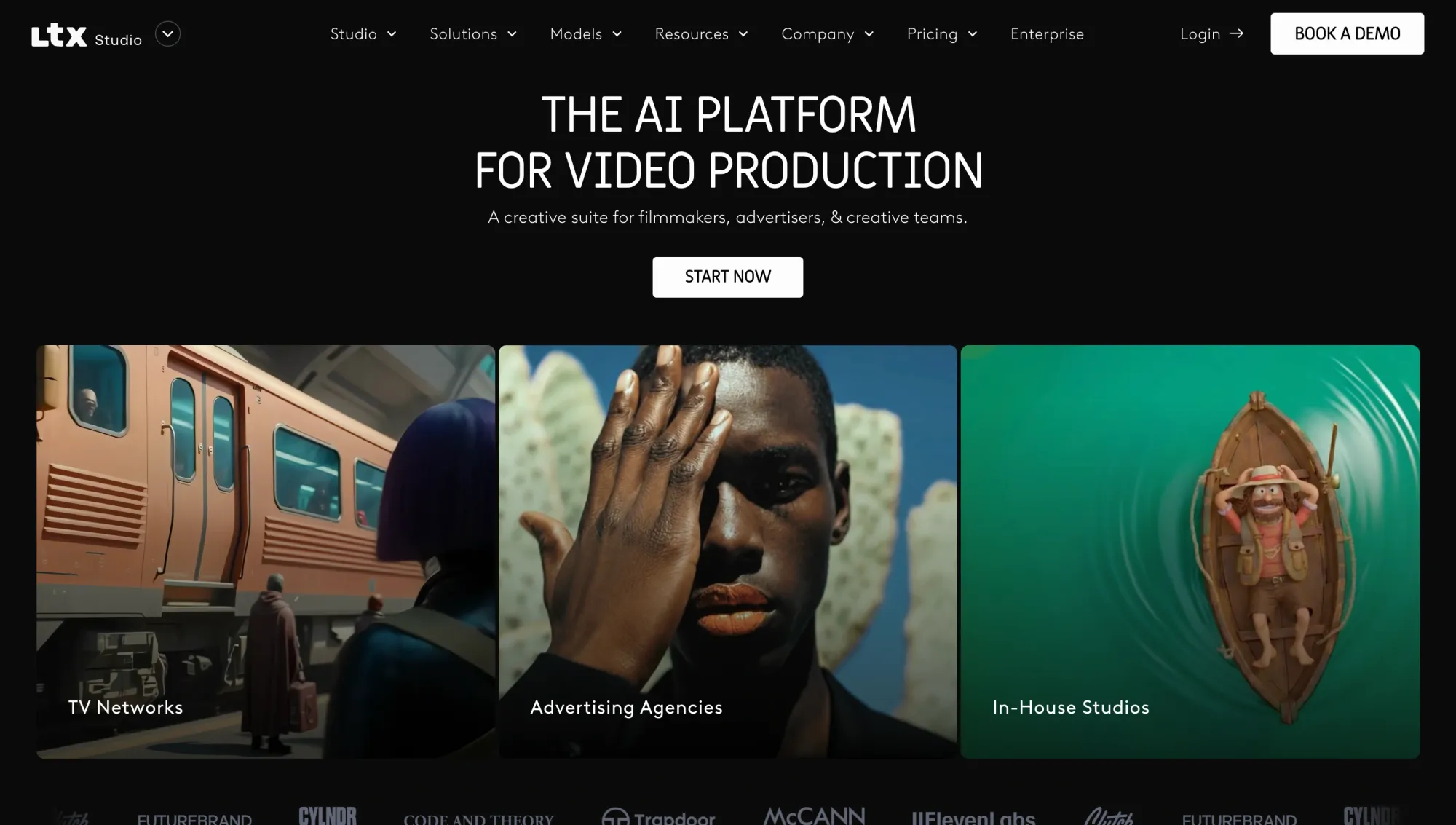

LTX 2.3

Use case: Best for editing, extending, and refining generated video

Why include: LTX 2.3 is built around post-generation workflows, giving creators the ability to extend clips, refine outputs, and iterate on existing video instead of starting from scratch. It focuses more on control and continuity, which makes it valuable once you already have a base result.

What creators like:

• Ability to extend and continue existing video clips

• Useful for refining outputs instead of regenerating everything

• Helps maintain continuity across iterations

• More control over adjustments and small changes

Where it falls short:

• Not designed for initial video generation

• Requires a base output before it becomes useful

• Less relevant for quick ideation workflows

• Can feel slower compared to generation-first models

Who it’s for: Creators who want to refine, extend, and improve existing video outputs instead of continually regenerating new ones.

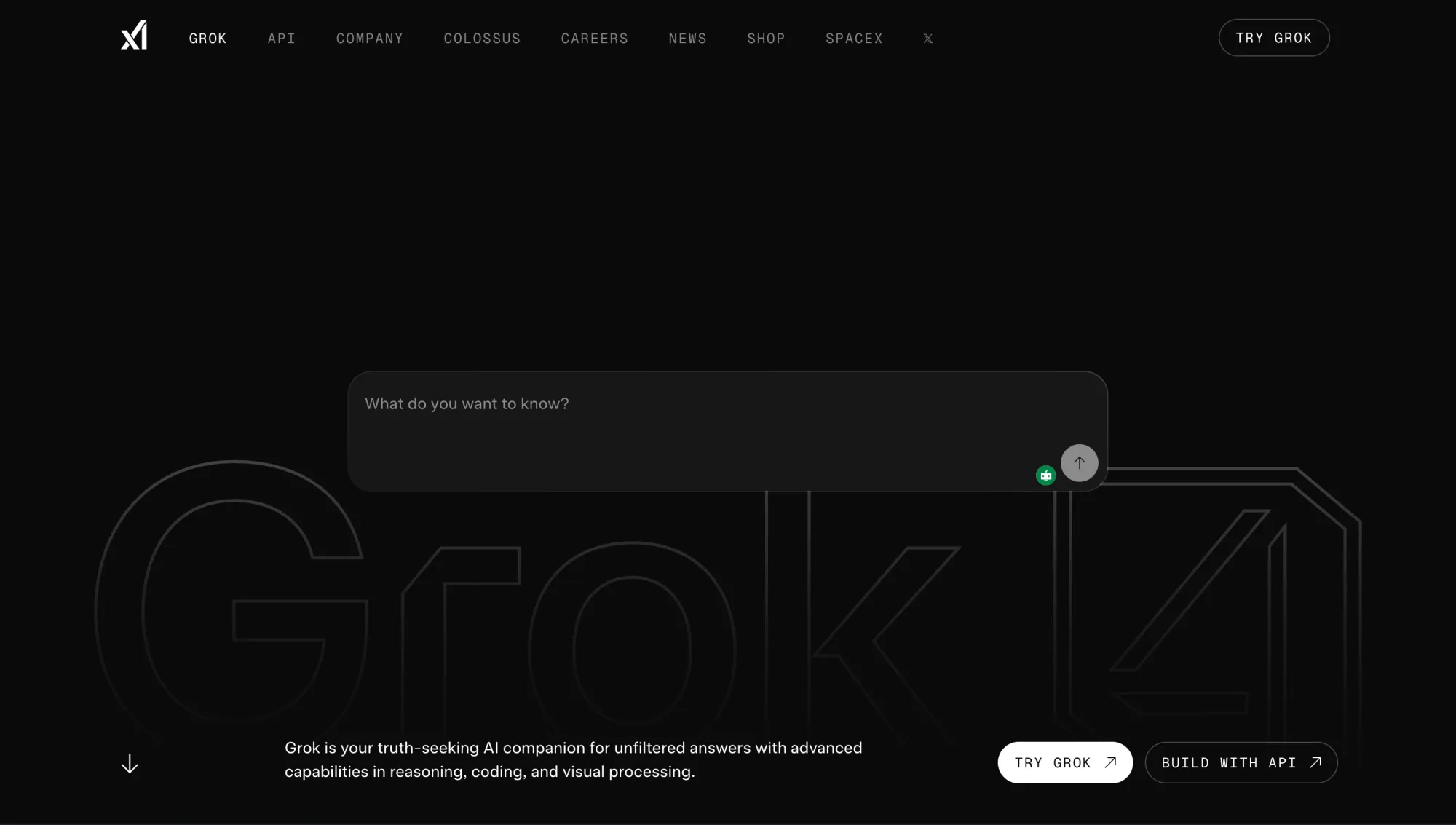

Grok Imagine Video

Use case: Best for experimental video generation and creative exploration

Why include: Grok Imagine Video focuses on open-ended generation, allowing creators to experiment with ideas without a rigid structure. It’s designed for exploration rather than precision, making it useful when testing concepts, styles, or unexpected directions.

What creators like:

• More freedom to explore unusual or creative prompts

• Less rigid compared to highly structured models

• Useful for brainstorming visual concepts

• Can generate unexpected and interesting results

Where it falls short:

• Lower consistency compared to more controlled models

• Outputs can feel unpredictable

• Limited control over structure and continuity

• Not ideal for production-ready content

Who it’s for: Creators who want to experiment, explore ideas, and push creative boundaries without strict constraints.

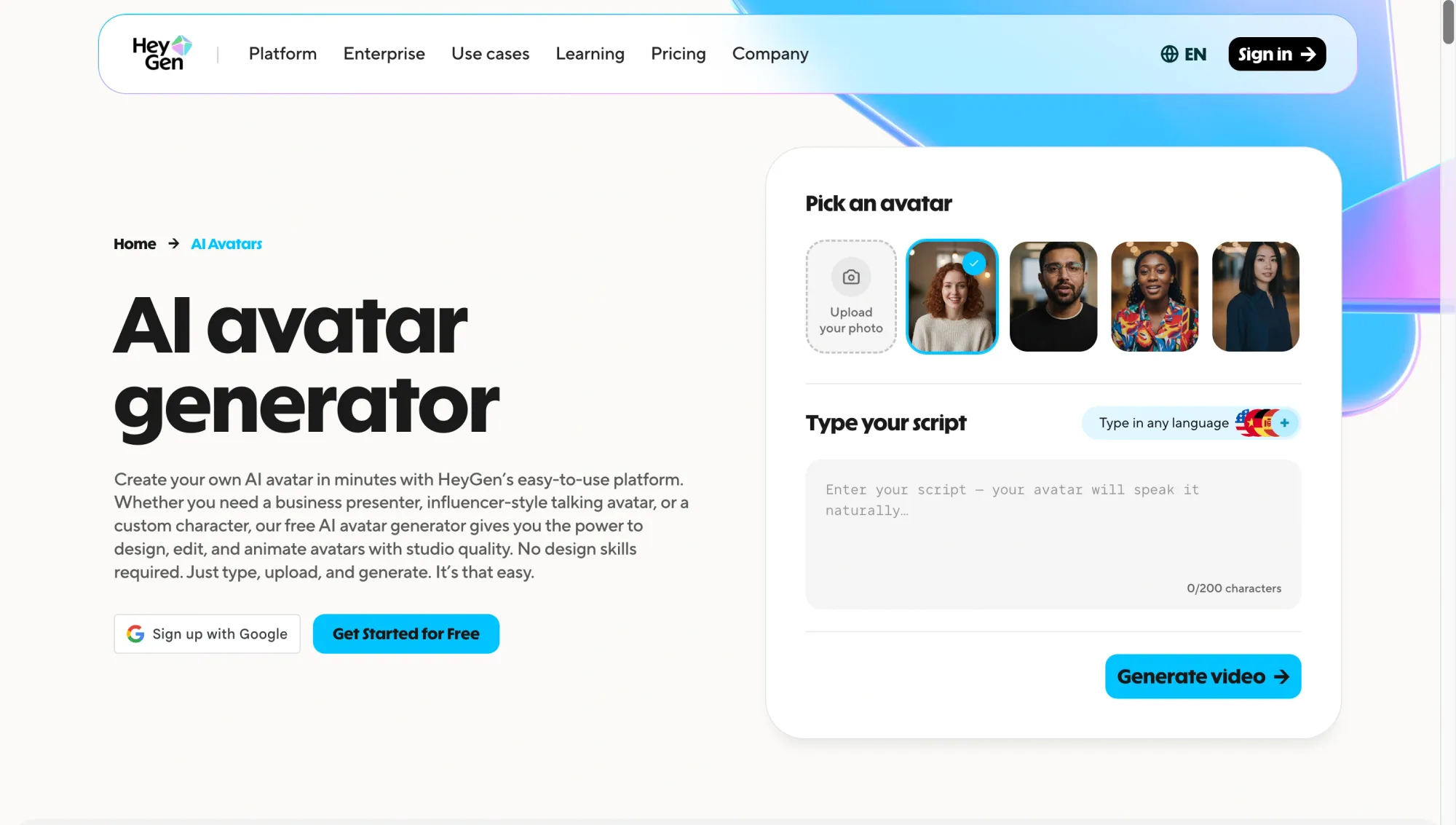

HeyGen Avatar 4

Use case: Best for avatar-based video creation and talking-head content

Why include: HeyGen Avatar 4 focuses on generating videos with realistic digital avatars that can speak, present, and deliver scripted content. It’s built for communication-driven use cases rather than cinematic generation, making it one of the most practical tools for scalable video production.

What creators like:

• Realistic avatars that can deliver scripts naturally

• A fast way to produce talking-head videos without filming

• Strong support for multilingual content and voice syncing

• Consistent output across multiple videos

Where it falls short:

• Limited flexibility for cinematic or scene-based generation

• Outputs can feel repetitive if overused

• Less control over dynamic environments and motion

• Not suited to creative or narrative video formats

Who it’s for: Creators, marketers, and teams producing educational, promotional, or communication-driven videos at scale.

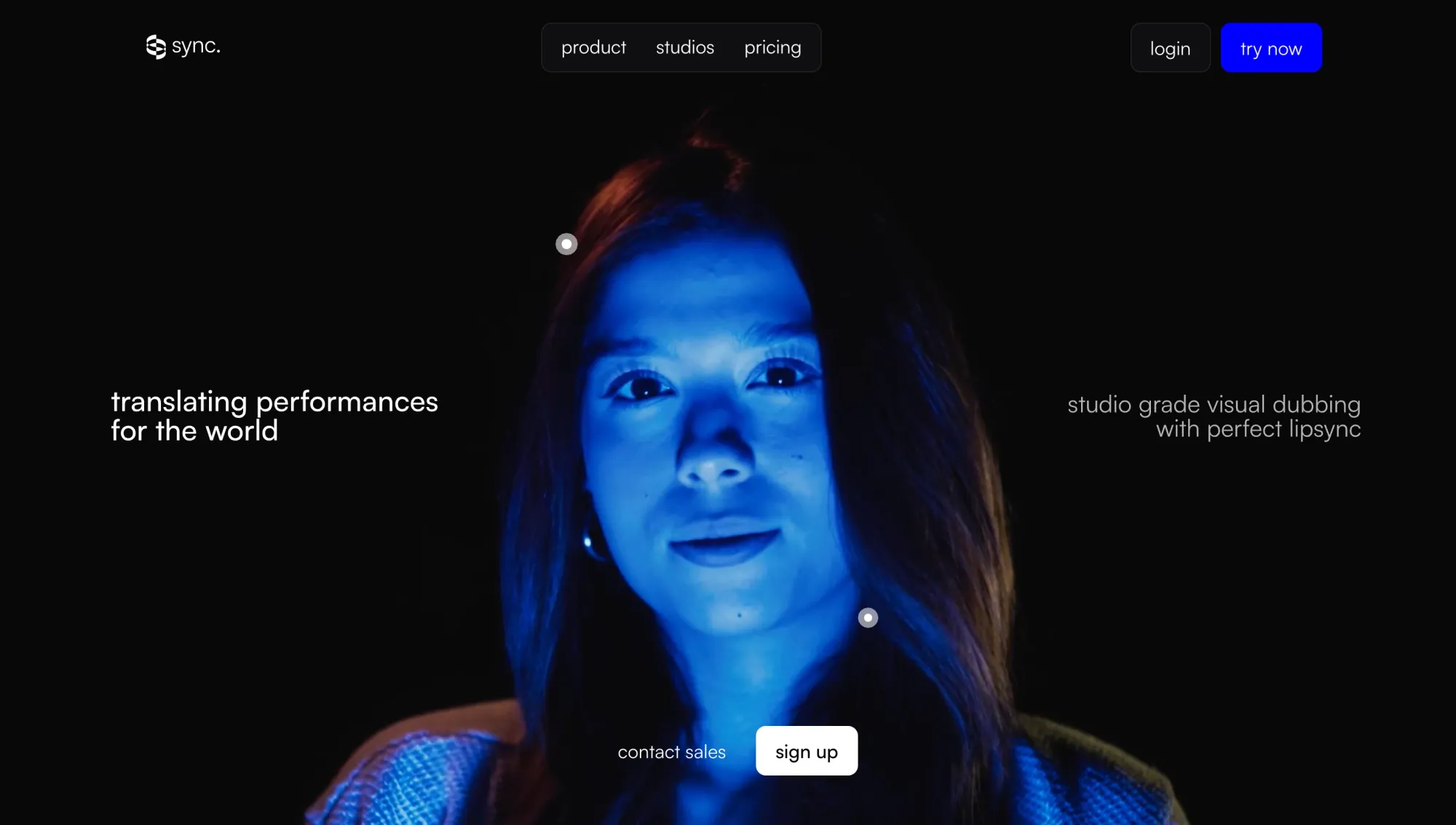

Sync LipSync v2

Use case: Best for lip sync, dubbing, and localized video workflows

Why include: Sync LipSync v2 focuses on aligning speech with video, making it easier to adapt content across languages and formats. Instead of generating video from scratch, it enhances existing footage by syncing dialogue accurately, which is essential for localization and voice-driven content.

What creators like:

• Accurate lip sync that matches speech timing closely

• Useful for dubbing and multilingual content workflows

• Helps repurpose existing videos instead of recreating them

• Works well alongside other generation and editing tools

Where it falls short:

• Doesn’t generate video content on its own

• Requires existing footage to be useful

• Output quality depends on the input video and audio

• Limited use outside of voice and dialogue workflows

Who it’s for: Creators and teams working on dubbing, localization, and dialogue-driven video content across multiple languages.

Which AI video model is best for each use case?

If you’re still asking what the best AI is, the answer depends entirely on what you’re trying to create. These models are not interchangeable. Each one is built for a different type of output, and understanding where each one performs best saves a lot of time.

The same applies when choosing the best AI video generator. The right choice comes down to the kind of video you want to make, not which tool is the most popular. Here’s a quick breakdown of the most useful AI tools based on real use cases.

Best AI model for cinematic video quality

If your priority is realism, storytelling, and structured scenes, Veo 3 and Sora 2 are the best AI models to start with. Veo 3 is stronger on visual realism and motion consistency, while Sora 2 stands out for narrative flow and prompt-driven direction.

Best AI model for image-to-video

For turning images into dynamic video, Kling, Veo 3, and Hailuo offer the most flexibility. Kling handles motion especially well, Veo 3 offers higher visual fidelity, and Hailuo is useful when you want faster results across multiple variations.

Best AI model for speed and iteration

When speed matters more than perfection, Hailuo, Seedance, and LTX 2.3 are the most practical AI tools. Hailuo and Seedance help you test ideas quickly, while LTX 2.3 becomes valuable when refining and extending existing clips without restarting from scratch.

Best AI model for avatar videos

For talking-head content, training videos, or scalable communication, HeyGen is one of the most reliable artificial intelligence apps available today. It allows you to generate consistent avatar-led videos without filming, which is ideal for teams producing content at scale.

Best AI model for lip sync and localization

If your focus is dubbing, translation, or adapting videos across languages, Sync LipSync v2 fills a critical gap. It’s not a generator, but it complements other AI tools by making dialogue feel natural and aligned across different versions of the same video.
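The use-case recommendations in this section can also be written down as a small lookup table, which is handy if you keep a shared checklist for a team. The sketch below only restates the pairings named in this guide; the key names and the `recommend` helper are hypothetical and are not part of any product API.

```python
# Pairings restated from this guide; key names and the helper are illustrative.
RECOMMENDATIONS = {
    "cinematic": ["Veo 3", "Sora 2"],
    "image_to_video": ["Kling", "Veo 3", "Hailuo"],
    "speed_and_iteration": ["Hailuo", "Seedance", "LTX 2.3"],
    "avatar": ["HeyGen"],
    "lip_sync_and_localization": ["Sync LipSync v2"],
}


def recommend(use_case):
    """Return the models this guide suggests for a use case.

    The lookup is forgiving about case, spaces, and hyphens, so
    "Image-to-video" and "image to video" resolve to the same key.
    """
    key = use_case.strip().lower().replace("-", "_").replace(" ", "_")
    if key not in RECOMMENDATIONS:
        known = ", ".join(sorted(RECOMMENDATIONS))
        raise KeyError(f"unknown use case {use_case!r}; known: {known}")
    # Return a copy so callers cannot mutate the shared table.
    return list(RECOMMENDATIONS[key])


if __name__ == "__main__":
    for case in ("cinematic", "Image-to-video", "avatar"):
        print(case, "->", ", ".join(recommend(case)))
```

Because several models appear under more than one use case, the table also makes overlaps easy to spot when you are deciding which tools to trial first.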

Best AI model for creators who want one workspace

If you’re trying to combine multiple AI tools into one workflow, this is where things shift. Instead of choosing a single model, many creators now work across multiple leading models depending on the task.

Async brings these models into one place, so you can move between text-to-video, image-to-video, avatars, editing, and more without switching platforms. If you want to understand how this works in practice, this breakdown of a chat-based AI model in workflows explains how creators are starting to use multiple models together.

Free AI apps and free AI programs worth trying for video creation

Free AI apps for video creation can be useful, but only within the right context. Most of the top video models are not fully available for free, especially at the level of quality needed for consistent output.

Many artificial intelligence apps offer limited access through free tiers, credits, or trial-based usage. That’s especially true for the best artificial intelligence apps for video, which often reserve stronger quality, longer generations, or better export options for paid plans.

In practice, free AI programs are most useful for:

• Testing different prompts and styles

• Experimenting with text-to-video or image-to-video workflows

• Understanding how different models behave before scaling production

Where they fall short is in consistency, output quality, and usage limits. Free tiers often restrict resolution, generation time, or the number of exports, which makes them harder to rely on for ongoing content creation.

Another important factor is access. Some of the best AI models are only available through waitlists, credits, or bundled platforms rather than fully open tools. That means the “best” option is not always the one with the strongest model but the one you can actually use consistently.

The best approach is to treat free access as a testing layer. Use it to explore different AI tools, compare outputs, and identify which models fit your workflow. Then move into a setup that supports faster iteration and more reliable results.

Why the best AI models are even more useful inside one workflow

These models are powerful on their own, but most creators don’t rely on a single model from start to finish. Different AI tools solve different parts of the process, and switching between them is often where friction starts to build.

One model might be better for realism. Another might be better for image-to-video. A different one might handle avatars, lip sync, audio, or even upscaling and enhancement tasks. Trying to force one model to handle everything usually leads to slower workflows and less consistent results.

That is exactly why more creators are moving toward multi-model workflows. Instead of asking which AI is best, the focus shifts to how different models can work together to produce better outputs. McKinsey estimates that generative AI could add trillions of dollars in annual value, with productivity gains depending heavily on how organizations actually integrate these systems into real work.

In practice, a typical workflow might look like this:

• Generate a base scene using one of the leading video models

• Refine or extend the clip using another model

• Add voice, lip sync, or localization using a separate tool

• Adjust format, timing, or structure before final output
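The four steps above can be sketched as a simple pipeline in which each stage takes a clip and hands it to the next. This is a structural illustration only: the `Clip` class and the stage functions are hypothetical placeholders that record what happened, and they do not call any real model or vendor API.

```python
from dataclasses import dataclass, field


@dataclass
class Clip:
    """Hypothetical stand-in for a video asset moving through the workflow."""
    prompt: str
    history: list = field(default_factory=list)


# Each stage is a placeholder: a real setup would call a model or tool here.
def generate_base(clip):
    clip.history.append("generate base scene")
    return clip


def refine_or_extend(clip):
    clip.history.append("refine or extend clip")
    return clip


def add_voice_and_lip_sync(clip):
    clip.history.append("add voice, lip sync, or localization")
    return clip


def adjust_format(clip):
    clip.history.append("adjust format, timing, or structure")
    return clip


PIPELINE = [generate_base, refine_or_extend, add_voice_and_lip_sync, adjust_format]


def run_pipeline(prompt, stages=PIPELINE):
    """Run every stage in order and return the final clip."""
    clip = Clip(prompt)
    for stage in stages:
        clip = stage(clip)
    return clip


if __name__ == "__main__":
    final = run_pipeline("a short product teaser")
    for step_number, step in enumerate(final.history, start=1):
        print(step_number, step)
```

Keeping the stages in a plain list makes it easy to swap one step for another tool, or to skip a step such as lip sync, without touching the rest of the workflow.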

The challenge is not access to models anymore. It’s how easily these models can be used together. Jumping between disconnected artificial intelligence apps creates delays, breaks momentum, and makes iteration harder than it needs to be.

That’s why workflow design is becoming just as important as model quality. The real advantage comes from being able to move between models quickly, test variations, and refine outputs without constantly restarting or switching platforms.

Use Async to explore 100+ AI models for video generation in a single workspace

Finding the best AI models is one thing. Actually using them in a fast, consistent workflow is another.

You’ll probably end up combining multiple AI tools to get the result you want. One model for generation, another for refinement, and another for avatars or voice. That’s usually how it plays out in practice, and it works, but switching between platforms can quickly slow you down.

Async solves that by bringing video generation tools and supporting models into one workspace. Instead of constantly moving back and forth between AI apps, you can generate, edit, refine, and finalize your content in a single flow.

That means you can move through different stages of creation without breaking your rhythm. You can generate clips from text or images, refine outputs, add avatars or voice, sync dialogue, and improve quality through enhancement and upscaling, all without restarting your process.

Instead of locking you into one model, Async lets you explore how different models behave in real scenarios. You can test outputs across systems like Veo, Sora, Kling, Hailuo, Seedance, Wan, and LTX while also working with tools for avatars, voice, and enhancement like HeyGen, ElevenLabs, and Topaz. This makes it easier to compare results, iterate faster, and build a workflow that actually fits how you create.

If you want to see how this kind of setup comes together, this guide on building a content creation workflow breaks down how creators structure multi-model systems in practice.

The advantage is not just accessing more models. It’s what that access lets you do. You can move from idea to output faster, test variations without friction, and stay focused on the creative side instead of managing tools.

FAQ

What are the best AI models for video generation in 2026?

The top video generation models in 2026 include Veo 3, Sora 2, Kling, Hailuo, and Seedance. Each one stands out for a different reason. Veo and Sora are stronger for realism and storytelling, Kling excels at motion, Hailuo is better for speed and testing, and Seedance offers a balanced approach across different workflows. The right choice depends on what you want to create, not just which model is the most advanced overall.

What’s the best AI for making videos?

There is no single answer to what the best AI for making videos is. It depends on your use case. If you want cinematic quality, Veo or Sora are strong options. For faster iteration, Hailuo or Seedance works better. For avatar-based content, HeyGen is more suitable. And for localization or dubbing, tools like Sync LipSync are essential. In practice, most creators use a combination of AI tools instead of relying on just one.

Are there any free AI apps for video generation?

Yes, there are free AI apps and free AI programs available, but they usually come with limitations. The best artificial intelligence apps for video usually have free tiers with limited usage, lower output quality, or restricted export options. These are useful for testing ideas or learning how different models work, but they’re rarely enough for consistent production. If you’re planning to create videos regularly, you’ll likely need access to more advanced features or multiple models.

What’s the difference between AI tools and AI models?

AI models are the underlying systems that generate content, such as text, images, or video. AI tools are the platforms or interfaces that let you use those models. For example, a video generation model creates the output, while an AI video editor helps you refine, structure, or improve that output as part of your workflow.

Which AI model is best for image-to-video?

The best AI models for image-to-video include Kling, Veo 3, and Hailuo. Kling is strong for motion and flexibility, Veo delivers higher-quality visuals and consistency, and Hailuo is useful for generating variations quickly. The best option depends on how much control, speed, and quality you need for your workflow.

Do I need one AI model or multiple AI tools?

In most cases, you’ll need multiple AI tools. Different models are built for different tasks. One might handle generation, another refinement, and another voice or lip sync. Trying to rely on a single model usually limits what you can create. The best workflows combine multiple leading models so you can move faster, test ideas, and improve outputs without starting over each time.