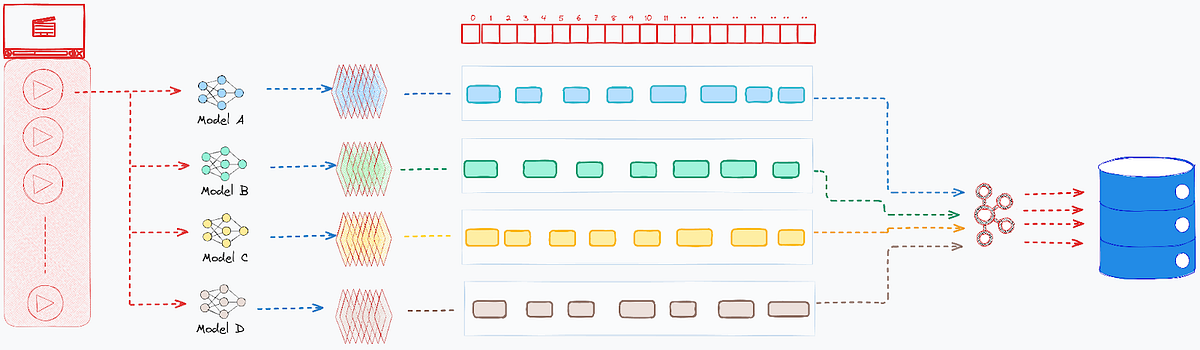

The Ingestion and Fusion Pipeline

To ensure system resilience and scalability, the transition from raw model output to searchable intelligence follows a decoupled, three-stage process:

1. Transactional Persistence

Raw annotations are ingested via high-availability pipelines and stored in our annotation service, which uses Apache Cassandra for distributed storage. This stage strictly prioritizes data integrity and high-speed write throughput, ensuring that every piece of model output is safely captured.

    {
      "type": "SCENE_SEARCH",
      "time_range": {
        "start_time_ns": 4000000000,
        "end_time_ns": 9000000000
      },
      "embedding_vector": [
        -0.036, -0.33, -0.29 ...
      ],
      "label": "kitchen",
      "confidence_score": 0.72
    }

Figure 2: Sample Scene Search Model Annotation Output
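
As a rough illustration of what "data integrity" could mean at this stage, the sample annotation can be modeled as a small typed record that is validated before it is written. This is only a sketch: the `Annotation` class, its field names, and the two checks are assumptions made for illustration, and the annotation service's actual schema and validation rules are not described here.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class Annotation:
    """One raw model annotation, mirroring the fields of the sample record."""
    annotation_type: str            # e.g. "SCENE_SEARCH"
    start_time_ns: int
    end_time_ns: int
    label: str
    confidence_score: float
    embedding_vector: List[float] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Hypothetical integrity checks; the real service's rules are not given.
        if self.start_time_ns < 0 or self.end_time_ns <= self.start_time_ns:
            raise ValueError("time range must be non-negative and non-empty")
        if not 0.0 <= self.confidence_score <= 1.0:
            raise ValueError("confidence_score must be within [0, 1]")


# Only the three vector values visible in the sample are used here;
# the sample itself is truncated.
scene = Annotation(
    annotation_type="SCENE_SEARCH",
    start_time_ns=4_000_000_000,
    end_time_ns=9_000_000_000,
    label="kitchen",
    confidence_score=0.72,
    embedding_vector=[-0.036, -0.33, -0.29],
)
```

Rejecting malformed records at construction time keeps bad time ranges out of the later bucketing stage, where they would be harder to trace back to a model run.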

2. Offline Data Fusion

Once the annotation service securely persists the raw data, the system publishes an event via Apache Kafka to trigger an asynchronous processing job. Serving as the architecture's central logic layer, this offline pipeline handles the heavy computational lifting out-of-band. It performs precise temporal intersections, fusing overlapping annotations from disparate models into cohesive, unified records that enable complex, multi-dimensional queries.

Cleanly decoupling these intensive processing tasks from the ingestion pipeline ensures that complex data intersections never bottleneck real-time ingestion. As a result, ingestion stays available and responsive even when processing the massive scale of the Netflix media catalog.

To achieve this intersection at scale, the offline pipeline normalizes disparate model outputs by mapping them into fixed-size temporal buckets (one-second intervals). This discretization process unfolds in three steps:

- Bucket Mapping: Continuous detections are segmented into discrete intervals. For example, if a model detects a character “Joey” from seconds 2 through 8, the pipeline maps this continuous span of frames into seven distinct one-second buckets.

- Annotation Intersection: When multiple models generate annotations for the exact same temporal bucket, such as character recognition “Joey” and scene detection “kitchen” overlapping in second 4, the system fuses them into a single, comprehensive record.

- Optimized Persistence: These newly enriched records are written back to Cassandra as distinct entities. This creates a second-by-second index of multi-modal intersections that associates every fused annotation with its source asset.
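
The first two steps can be sketched in a few lines of Python. This is a minimal illustration rather than the production pipeline: the `Span` tuple layout, the function names `bucket_starts` and `fuse`, and the choice to keep only buckets hit by more than one annotation type are all assumptions. The end timestamp is treated as inclusive, which is what makes seconds 2 through 8 come out as seven buckets; a half-open convention would give six.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

NS_PER_SECOND = 1_000_000_000  # one-second buckets, as described above

# Hypothetical layout: (annotation_type, label, start_time_ns, end_time_ns)
Span = Tuple[str, str, int, int]


def bucket_starts(start_ns: int, end_ns: int) -> List[int]:
    """Start timestamps of every one-second bucket the span touches.

    Assumption: the end timestamp is inclusive.
    """
    first = start_ns // NS_PER_SECOND
    last = end_ns // NS_PER_SECOND
    return [second * NS_PER_SECOND for second in range(first, last + 1)]


def fuse(spans: List[Span]) -> Dict[int, List[Span]]:
    """Group spans by bucket; keep buckets covered by more than one annotation type."""
    by_bucket: Dict[int, List[Span]] = defaultdict(list)
    for span in spans:
        for start in bucket_starts(span[2], span[3]):
            by_bucket[start].append(span)
    return {
        start: members
        for start, members in sorted(by_bucket.items())
        if len({member[0] for member in members}) > 1
    }


joey = ("CHARACTER_SEARCH", "Joey", 2 * NS_PER_SECOND, 8 * NS_PER_SECOND)
kitchen = ("SCENE_SEARCH", "kitchen", 4 * NS_PER_SECOND, 9 * NS_PER_SECOND)
fused = fuse([joey, kitchen])
```

Because every annotation is reduced to the same integer bucket keys, finding an intersection becomes a dictionary lookup instead of a pairwise interval comparison across models.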

The following record shows the overlap of the character “Joey” and scene “kitchen” annotations during the 4 to 5 second window of a video asset:

    {
      "associated_ids": {
        "MOVIE_ID": "81686010",
        "ASSET_ID": "01325120-7482-11ef-b66f-0eb58bc8a0ad"
      },
      "time_bucket_start_ns": 4000000000,
      "time_bucket_end_ns": 5000000000,
      "source_annotations": [
        {
          "annotation_id": "7f5959b4-5ec7-11f0-b475-122953903c43",
          "annotation_type": "CHARACTER_SEARCH",
          "label": "Joey",
          "time_range": {
            "start_time_ns": 2000000000,
            "end_time_ns": 8000000000
          }
        },
        {
          "annotation_id": "c9d59338-842c-11f0-91de-12433798cf4d",
          "annotation_type": "SCENE_SEARCH",
          "time_range": {
            "start_time_ns": 4000000000,
            "end_time_ns": 9000000000
          },
          "label": "kitchen",
          "embedding_vector": [
            0.9001, 0.00123 ....
          ]
        }
      ]
    }

Figure 4: Sample Intersection Record For Character + Scene Search

3. Indexing for Real-Time Search

Once the enriched temporal buckets are securely persisted in Cassandra, a subsequent event triggers their ingestion into Elasticsearch.

To guarantee data consistency, the pipeline executes upsert operations using a composite key (asset ID + time bucket) as the unique document identifier. If a temporal bucket already exists for a specific second of video, perhaps populated by an earlier model run, the system updates the existing record rather than creating a duplicate. This mechanism establishes a single, unified source of truth for every second of footage.
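
The upsert semantics can be illustrated with an in-memory stand-in for the index. This sketch does not use the Elasticsearch client: a plain dictionary plays the role of the index, the `:` separator in the composite key is an assumption, and merging by `annotation_id` is one plausible reading of "updates the existing record", not a confirmed detail.

```python
from typing import Dict, List

NS_PER_SECOND = 1_000_000_000


def document_id(asset_id: str, bucket_start_ns: int) -> str:
    """Composite key: asset ID plus time bucket (separator is an assumption)."""
    return f"{asset_id}:{bucket_start_ns}"


def upsert(index: Dict[str, dict], asset_id: str, bucket_start_ns: int,
           annotations: List[dict]) -> str:
    """Create the bucket document, or merge into the one already stored under its ID."""
    doc_id = document_id(asset_id, bucket_start_ns)
    doc = index.setdefault(doc_id, {
        "asset_id": asset_id,
        "time_bucket_start_ns": bucket_start_ns,
        "time_bucket_end_ns": bucket_start_ns + NS_PER_SECOND,
        "source_annotations": [],
    })
    # Skip annotations that an earlier run already contributed.
    known = {ann["annotation_id"] for ann in doc["source_annotations"]}
    for ann in annotations:
        if ann["annotation_id"] not in known:
            doc["source_annotations"].append(ann)
            known.add(ann["annotation_id"])
    return doc_id


index: Dict[str, dict] = {}
asset = "asset-1"  # placeholder asset ID
upsert(index, asset, 4 * NS_PER_SECOND, [{"annotation_id": "a1", "label": "Joey"}])
upsert(index, asset, 4 * NS_PER_SECOND, [{"annotation_id": "a2", "label": "kitchen"}])
```

Two model runs that touch the same second land in the same document because they compute the same identifier, so replaying an event leaves the index unchanged.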

Architecturally, the pipeline structures each temporal bucket as a nested document. The root level captures the overarching asset context, while associated child documents hold the specific, multi-modal annotation data. This hierarchical data model is what enables users to execute efficient cross-annotation queries at scale.
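
A nested layout of this kind might look like the following in Elasticsearch terms, written here as Python dictionaries. The `nested` field type and the `nested` query are standard Elasticsearch features, but the field names, the flat `asset_id` field, and the shape of the query are assumptions based on the sample record, not the actual index definition.

```python
from typing import Dict

# Hypothetical mapping: asset context at the root, annotations as nested children.
MAPPING: Dict = {
    "mappings": {
        "properties": {
            "asset_id": {"type": "keyword"},
            "time_bucket_start_ns": {"type": "long"},
            "time_bucket_end_ns": {"type": "long"},
            "source_annotations": {
                "type": "nested",
                "properties": {
                    "annotation_type": {"type": "keyword"},
                    "label": {"type": "keyword"},
                },
            },
        }
    }
}


def nested_match(annotation_type: str, label: str) -> Dict:
    """Nested clause: both terms must match within the same child document."""
    return {
        "nested": {
            "path": "source_annotations",
            "query": {
                "bool": {
                    "filter": [
                        {"term": {"source_annotations.annotation_type": annotation_type}},
                        {"term": {"source_annotations.label": label}},
                    ]
                }
            },
        }
    }


def cross_annotation_query(asset_id: str) -> Dict:
    """Buckets of one asset where the character 'Joey' and the scene 'kitchen' co-occur."""
    return {
        "query": {
            "bool": {
                "filter": [
                    {"term": {"asset_id": asset_id}},
                    nested_match("CHARACTER_SEARCH", "Joey"),
                    nested_match("SCENE_SEARCH", "kitchen"),
                ]
            }
        }
    }


query = cross_annotation_query("asset-1")
```

Using one nested clause per annotation keeps the type and label paired within a single child document, so a bucket containing a scene labeled "Joey" would not be mistaken for a character match.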