Shyam Gala, Javier Fernandez-Ivern, Anup Rokkam Pratap, Devang Shah

Hundreds of millions of customers tune into Netflix every day, expecting an uninterrupted and immersive streaming experience. Behind the scenes, a myriad of systems and services are involved in orchestrating the product experience. These backend systems are constantly being evolved and optimized to meet and exceed customer and product expectations.

When undertaking system migrations, one of the main challenges is establishing confidence and seamlessly transitioning the traffic to the upgraded architecture without adversely impacting the customer experience. This blog series will examine the tools, techniques, and strategies we have used to achieve this goal.

The backend for the streaming product uses a highly distributed microservices architecture, so these migrations also happen at different points of the service call graph. A migration can happen on an edge API system servicing customer devices, between the edge and mid-tier services, or from mid-tiers to data stores. Another relevant factor is that the migration could be happening on APIs that are stateless and idempotent, or on stateful APIs.

We have categorized the tools and techniques we have used to facilitate these migrations into two high-level phases. The first phase involves validating functional correctness, scalability, and performance concerns, and ensuring the new systems' resilience before the migration. The second phase involves migrating the traffic over to the new systems in a manner that mitigates the risk of incidents while continually monitoring and confirming that we are meeting crucial metrics tracked at multiple levels. These include Quality-of-Experience (QoE) measurements at the customer device level, Service-Level Agreements (SLAs), and business-level Key Performance Indicators (KPIs).

This blog post provides a detailed analysis of replay traffic testing, a versatile technique we have applied in the preliminary validation phase for multiple migration initiatives. In a follow-up blog post, we will focus on the second phase and look more closely at some of the tactical steps we use to migrate the traffic over in a controlled manner.

Replay traffic refers to production traffic that is cloned and forked over to a different path in the service call graph, allowing us to exercise new or updated systems in a manner that simulates actual production conditions. In this testing strategy, we execute a copy (replay) of production traffic against a system's existing and new versions to perform relevant validations. This approach has a handful of benefits.

- Replay traffic testing enables sandboxed testing at scale without significantly impacting production traffic or customer experience.

- Using cloned real traffic, we can exercise the diversity of inputs from a wide range of devices and device application software versions in production. This is particularly important for complex APIs that have many high-cardinality inputs. Replay traffic provides the reach and coverage required to test the system's ability to handle infrequently used input combinations and edge cases.

- This technique facilitates validation on multiple fronts. It allows us to assert functional correctness and provides a mechanism to load test the system and tune the system and scaling parameters for optimal functioning.

- By simulating a real production environment, we can characterize system performance over an extended period while accounting for expected and unexpected shifts in traffic patterns. It provides a good read on the availability and latency ranges under different production conditions.

- It provides a platform to ensure that relevant operational insights, metrics, logging, and alerting are in place before migration.

Replay Solution

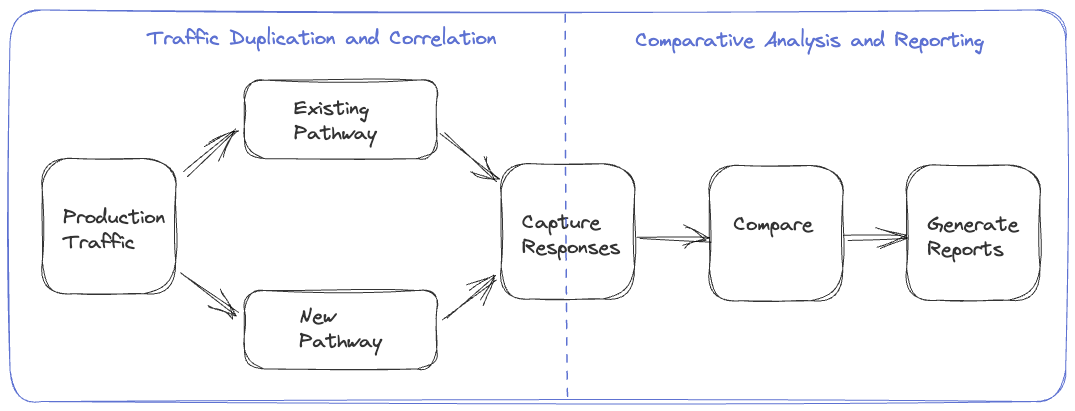

The replay traffic testing solution comprises two essential components.

- Traffic Duplication and Correlation: The initial step requires implementing a mechanism to clone and fork production traffic to the newly established pathway, along with a process to record and correlate responses from the original and alternative routes.

- Comparative Analysis and Reporting: Following traffic duplication and correlation, we need a framework to compare and analyze the responses recorded from the two paths and produce a comprehensive report of the analysis.

We have tried different approaches to the traffic duplication and recording step across various migrations, making improvements along the way. These include options where replay traffic generation is orchestrated on the device, on the server, and via a dedicated service. We will examine these alternatives in the upcoming sections.

Device Driven

In this option, the device makes a request on both the production path and the replay path, then discards the response on the replay path. These requests are executed in parallel to minimize any potential delay on the production path. The selection of the replay path on the backend can be driven by the URL the device uses when making the request or by specific request parameters used in routing logic at the appropriate layer of the service call graph. The device also includes a unique identifier with identical values on both paths, which is used to correlate the production and replay responses. The responses can be recorded at the most suitable location in the service call graph or by the device itself, depending on the particular migration.
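A minimal Python sketch of the device-side fork, under stated assumptions: `backend` is a hypothetical in-process stand-in for the network, a hypothetical `replay=true` request parameter selects the replay path, and a UUID serves as the shared correlation identifier. The replay request runs as a background task whose result, and any failure, is discarded, so the production response never waits on it.

```python
import asyncio
import uuid

# Hypothetical record of what the backend received, to show the shared identifier.
SEEN: list = []

async def backend(url: str, params: dict) -> dict:
    """Hypothetical backend stand-in: a request parameter selects the path."""
    path = "replay" if params.get("replay") == "true" else "production"
    SEEN.append((path, params["correlation_id"]))
    await asyncio.sleep(0)  # simulate network I/O
    return {"path": path, "url": url, "correlation_id": params["correlation_id"]}

# Hold references so in-flight replay tasks are not garbage-collected.
_in_flight: set = set()

def _discard(task: asyncio.Task) -> None:
    """Done callback: forget the task and swallow any replay failure."""
    _in_flight.discard(task)
    if not task.cancelled():
        task.exception()  # retrieve (and ignore) any exception

async def device_fetch(url: str) -> dict:
    correlation_id = str(uuid.uuid4())  # identical value on both paths
    replay = asyncio.create_task(
        backend(url, {"correlation_id": correlation_id, "replay": "true"}))
    _in_flight.add(replay)
    replay.add_done_callback(_discard)
    # The production request does not wait for the replay request, and the
    # replay response is never read.
    return await backend(url, {"correlation_id": correlation_id})

if __name__ == "__main__":
    print(asyncio.run(device_fetch("/playback/manifest")))
```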

The device-driven approach's obvious downside is that we are wasting device resources. There is also a risk of impacting device QoE, especially on low-resource devices. Adding forking logic and complexity to the device code can create dependencies on device application release cycles, which generally run at a slower cadence than service release cycles, leading to bottlenecks in the migration. Moreover, allowing the device to execute untested server-side code paths can inadvertently expose an attack surface for potential misuse.

Server Driven

To address the concerns of the device-driven approach, the other option we have used is to handle the replay concerns entirely on the backend. The replay traffic is cloned and forked in the appropriate service upstream of the migrated service. The upstream service calls the existing and new replacement services concurrently to minimize any latency increase on the production path. The upstream service records the responses on the two paths along with an identifier with a common value that is used to correlate the responses. This recording operation is also done asynchronously to minimize any impact on latency on the production path.
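A minimal asyncio sketch of the server-driven fork, assuming hypothetical `existing_service` and `replacement_service` coroutines and an in-memory `asyncio.Queue` standing in for the asynchronous recording pipeline. The upstream handler starts the replacement call concurrently, returns only the production response, and enqueues both responses under a shared correlation identifier so that recording stays off the request path.

```python
import asyncio
import uuid
from dataclasses import dataclass

@dataclass
class ReplayRecord:
    correlation_id: str
    path: str  # "production" or "replay"
    response: dict

async def existing_service(request: dict) -> dict:
    """Hypothetical stand-in for the service being replaced."""
    await asyncio.sleep(0)
    return {"title": request["title"], "streams": ["a", "b"]}

async def replacement_service(request: dict) -> dict:
    """Hypothetical stand-in for the new replacement service."""
    await asyncio.sleep(0)
    return {"title": request["title"], "streams": ["a", "b"]}

# Keeps references to in-flight replay calls so they are not garbage-collected.
pending_replays: set = set()

async def handle(request: dict, records: asyncio.Queue) -> dict:
    cid = str(uuid.uuid4())  # common correlation identifier for both paths
    replay = asyncio.create_task(replacement_service(request))
    pending_replays.add(replay)

    def on_replay_done(task: asyncio.Task) -> None:
        pending_replays.discard(task)
        # Replay failures are dropped so they never affect the production path.
        if not task.cancelled() and task.exception() is None:
            records.put_nowait(ReplayRecord(cid, "replay", task.result()))

    replay.add_done_callback(on_replay_done)
    response = await existing_service(request)
    # Enqueue instead of writing inline; a separate task does the recording.
    records.put_nowait(ReplayRecord(cid, "production", response))
    return response  # the caller only ever sees the production response

async def recorder(records: asyncio.Queue, sink: list) -> None:
    """Drains records asynchronously; a real system would write to a log or stream."""
    while True:
        sink.append(await records.get())
        records.task_done()

async def demo() -> tuple:
    records: asyncio.Queue = asyncio.Queue()
    sink: list = []
    writer = asyncio.create_task(recorder(records, sink))
    response = await handle({"title": 42}, records)
    await asyncio.gather(*pending_replays)  # demo only: let the replay call finish
    await records.join()
    writer.cancel()
    return response, sink
```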

The server-driven approach's benefit is that the entire complexity of the replay logic is encapsulated on the backend, and no device resources are wasted. Also, since this logic resides on the server side, we can iterate on any required changes faster. However, we are still inserting the replay-related logic alongside the production code that handles business logic, which can result in unnecessary coupling and complexity. There is also an increased risk that bugs in the replay logic will impact production code and metrics.

Dedicated Service

The latest approach we have used is to completely isolate all components of replay traffic into a separate dedicated service. In this approach, we asynchronously record the requests and responses for the service that needs to be updated or replaced to an offline event stream. Quite often, this logging of requests and responses is already happening for operational insights. We then use Mantis, a distributed stream processor, to capture these requests and responses and replay the requests against the new service or cluster, making any required adjustments to the requests. After replaying the requests, this dedicated service also records the responses from the production and replay paths for offline analysis.
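The sketch below shows the shape of the dedicated replay step in plain Python. It does not use Mantis's API: the logged request/response events are modeled as any iterable of a hypothetical `LoggedExchange` record, `new_service` is any callable that calls the new cluster, and `adjust_request` is a hypothetical example of the request adjustments mentioned above. Each replayed request yields a `ReplayPair` ready to be recorded for offline analysis, and a failed replay is recorded rather than raised.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator, Optional

@dataclass(frozen=True)
class LoggedExchange:
    """Hypothetical shape of one request/response pair logged by production."""
    correlation_id: str
    request: dict
    response: dict

@dataclass(frozen=True)
class ReplayPair:
    """What the dedicated service records for offline analysis."""
    correlation_id: str
    production: dict
    replay: Optional[dict]
    error: Optional[str] = None

def adjust_request(request: dict) -> dict:
    """Hypothetical adjustment: copy the request and mark it as replay traffic."""
    adjusted = dict(request)
    adjusted["is_replay"] = True
    return adjusted

def replay_stream(events: Iterable[LoggedExchange],
                  new_service: Callable[[dict], dict]) -> Iterator[ReplayPair]:
    """Replay each logged request against the new service and pair the responses."""
    for event in events:
        try:
            replayed = new_service(adjust_request(event.request))
        except Exception as exc:  # record the failure instead of stopping the stream
            yield ReplayPair(event.correlation_id, event.response, None, repr(exc))
        else:
            yield ReplayPair(event.correlation_id, event.response, replayed)
```

Because the production service only has to log what it already handles, none of this code runs in the production request path.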

This approach centralizes the replay logic in an isolated, dedicated code base. Apart from not consuming device resources and not impacting device QoE, this approach also reduces coupling between production business logic and replay traffic logic on the backend. It also decouples updates to the replay framework from the device and service release cycles.

Analyzing Replay Traffic

Once we have run replay traffic and recorded a statistically significant volume of responses, we are ready for the comparative analysis and reporting component of replay traffic testing. Given the scale of the data generated by replay traffic, we record the responses from the two sides to a cost-effective cold storage facility using technology like Apache Iceberg. We can then create offline distributed batch processing jobs to correlate and compare the responses across the production and replay paths and generate detailed reports on the analysis.

Normalization

Depending on the nature of the system being migrated, the responses might need some preprocessing before being compared. For example, if some fields in the responses are timestamps, those will differ. Similarly, if there are unsorted lists in the responses, it might be best to sort them before comparing. In certain migration scenarios, there may be intentional alterations to the response generated by the updated service or component. For instance, a field that was a list in the original path may be represented as key-value pairs in the new path. In such cases, we can apply specific transformations to the response on the replay path to account for the expected changes. Depending on the system and the associated responses, there might be other specific normalizations that we apply before comparing the responses.
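A minimal sketch of these normalizations, assuming JSON-like responses. The field names in `VOLATILE_FIELDS` and `UNORDERED_LISTS`, and the `audioTracks` shape change handled by `adapt_replay`, are hypothetical examples; the real rules depend on the system being migrated. Lists are sorted by a canonical JSON encoding so that lists of objects also sort deterministically.

```python
import json
from typing import Any

# Hypothetical field names; the real sets depend on the service being migrated.
VOLATILE_FIELDS = {"timestamp", "requestTime"}  # expected to differ on every call
UNORDERED_LISTS = {"streams", "audioTracks"}    # order carries no meaning

def _canonical(value: Any) -> str:
    """Stable sort key that also works for lists of objects."""
    return json.dumps(value, sort_keys=True)

def normalize(value: Any) -> Any:
    """Recursively drop volatile fields and sort lists whose order is not meaningful."""
    if isinstance(value, dict):
        result = {}
        for key, item in value.items():
            if key in VOLATILE_FIELDS:
                continue
            item = normalize(item)
            if key in UNORDERED_LISTS and isinstance(item, list):
                item = sorted(item, key=_canonical)
            result[key] = item
        return result
    if isinstance(value, list):
        return [normalize(item) for item in value]
    return value

def adapt_replay(response: dict) -> dict:
    """Undo an intentional shape change on the replay path (hypothetical example).

    The old service returns audioTracks as [{"language": ..., "codec": ...}];
    the new one returns {language: {"codec": ...}}. Rebuild the list form.
    """
    adapted = dict(response)
    tracks = adapted.get("audioTracks")
    if isinstance(tracks, dict):
        adapted["audioTracks"] = [{"language": lang, **attrs}
                                  for lang, attrs in tracks.items()]
    return adapted
```

A comparison then checks `normalize(production) == normalize(adapt_replay(replay))`.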

Comparison

After normalizing, we diff the responses on the two sides and check whether they match. The batch job creates a high-level summary that captures some key comparison metrics. These include the total number of responses on each side, the count of responses joined by the correlation identifier, and the number of matches and mismatches. The summary also records the number of passing and failing responses on each path. This summary provides an excellent high-level view of the analysis and the overall match rate across the production and replay paths. Additionally, for mismatches, we record the normalized and unnormalized responses from both sides to another big data table along with other relevant parameters, such as the diff. We use this additional logging to debug and identify the root cause of the issues driving the mismatches. Once we discover and address those issues, we can use the replay testing process iteratively to bring the mismatch percentage down to an acceptable level.
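The summary metrics above can be sketched as a single pass over the two sides, assuming each side has already been loaded as a mapping from correlation identifier to response, and assuming (hypothetically) that a failed response carries an `error` key. In practice this runs as a distributed batch job, but the per-pair logic is the same. `top_level_diff` is a deliberately shallow, hypothetical diff.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

@dataclass
class ComparisonSummary:
    production_total: int
    replay_total: int
    joined: int = 0
    matches: int = 0
    mismatches: int = 0
    production_failures: int = 0
    replay_failures: int = 0
    mismatch_rows: List[dict] = field(default_factory=list)

    @property
    def match_rate(self) -> float:
        return self.matches / self.joined if self.joined else 0.0

def top_level_diff(a: dict, b: dict) -> Dict[str, Tuple[object, object]]:
    """Hypothetical shallow diff: top-level keys whose values differ."""
    return {k: (a.get(k), b.get(k))
            for k in sorted(a.keys() | b.keys()) if a.get(k) != b.get(k)}

def compare(production: Dict[str, dict], replay: Dict[str, dict],
            normalize: Callable[[dict], dict] = lambda r: r) -> ComparisonSummary:
    summary = ComparisonSummary(len(production), len(replay))
    # Passing/failing counts per path (hypothetical: an "error" key marks a failure).
    summary.production_failures = sum("error" in r for r in production.values())
    summary.replay_failures = sum("error" in r for r in replay.values())
    # Inner join on the correlation identifier.
    for cid in sorted(production.keys() & replay.keys()):
        summary.joined += 1
        raw_p, raw_r = production[cid], replay[cid]
        norm_p, norm_r = normalize(raw_p), normalize(raw_r)
        if norm_p == norm_r:
            summary.matches += 1
            continue
        summary.mismatches += 1
        # Keep raw and normalized payloads plus the diff for root-cause analysis.
        summary.mismatch_rows.append({
            "correlation_id": cid,
            "production_raw": raw_p, "replay_raw": raw_r,
            "production_normalized": norm_p, "replay_normalized": norm_r,
            "diff": top_level_diff(norm_p, norm_r),
        })
    return summary
```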

Lineage

When comparing responses, a common source of noise is the use of non-deterministic or non-idempotent dependency data to generate responses on the production and replay pathways. For instance, consider a response payload that delivers media streams for a playback session. The service responsible for generating this payload consults a metadata service that provides all available streams for the given title. Various factors can lead to the addition or removal of streams, such as identifying issues with a specific stream, adding support for a new language, or introducing a new encode. Consequently, the sets of streams used to build payloads on the production and replay paths may differ, resulting in divergent responses.

To address this challenge, we compile a comprehensive summary of data versions or checksums for all dependencies involved in generating a response, referred to as its lineage. By comparing the lineage of the production and replay responses in the automated jobs that analyze them, such discrepancies can be identified and discarded. This approach mitigates the impact of noise and ensures accurate and reliable comparisons between production and replay responses.
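A minimal sketch of lineage-based filtering, assuming each recorded response carries a hypothetical `lineage` field that maps dependency names to a SHA-256 checksum of the dependency data used to build it. Pairs whose lineages differ are set aside instead of being counted as mismatches.

```python
import hashlib
import json
from typing import Dict, List, Mapping, Tuple

def checksum(data: object) -> str:
    """SHA-256 of a canonical JSON encoding, so equal data gives equal checksums."""
    return hashlib.sha256(json.dumps(data, sort_keys=True).encode("utf-8")).hexdigest()

def build_lineage(dependencies: Mapping[str, object]) -> Dict[str, str]:
    """Map each dependency used to build a response to a checksum of its data."""
    return {name: checksum(data) for name, data in dependencies.items()}

# Hypothetical record shape: {"response": {...}, "lineage": {dependency: checksum}}
Record = Dict[str, object]

def partition_by_lineage(pairs: List[Tuple[Record, Record]]):
    """Split (production, replay) pairs into comparable pairs and discarded ones."""
    comparable, discarded = [], []
    for prod, replay in pairs:
        if prod["lineage"] == replay["lineage"]:
            comparable.append((prod, replay))
        else:
            # Built from different dependency data, so any difference is noise.
            discarded.append((prod, replay))
    return comparable, discarded
```

In the stream example above, a stream added to the title's metadata between the two calls changes the `metadata` checksum, and that pair is discarded.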

Comparing Live Traffic

An alternative to recording responses and performing the comparison offline is to perform a live comparison. In this approach, we fork the replay traffic in the upstream service as described in the Server Driven section. The service that clones and forks the replay traffic directly compares the responses on the production and replay paths and records relevant metrics. This option is feasible if the response payload is not very complex, so that the comparison does not significantly increase latencies, or if the services being migrated are not on the critical path. Logging is limited to cases where the old and new responses do not match.
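A sketch of a live comparison in the forking service, assuming synchronous callables for the existing and replacement services, a thread pool for concurrency, a `collections.Counter` standing in for a real metrics client, and a hypothetical 0.5-second budget for the replay call. Only mismatches are logged, and replay errors or timeouts are counted without affecting the production response.

```python
import logging
import uuid
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

log = logging.getLogger("live_compare")
metrics: Counter = Counter()       # stand-in for a real metrics client
_pool = ThreadPoolExecutor(max_workers=8)
REPLAY_TIMEOUT_S = 0.5             # hypothetical budget for the replay call

Service = Callable[[dict], dict]

def compare_live(correlation_id: str, production: dict, replay: dict,
                 normalize: Callable[[dict], dict] = lambda r: r) -> bool:
    matched = normalize(production) == normalize(replay)
    metrics["replay.match" if matched else "replay.mismatch"] += 1
    if not matched:
        # Selective logging: only mismatching pairs are written out.
        log.warning("replay mismatch cid=%s production=%r replay=%r",
                    correlation_id, production, replay)
    return matched

def serve(request: dict, existing: Service, replacement: Service) -> dict:
    correlation_id = str(uuid.uuid4())
    prod_future = _pool.submit(existing, request)
    replay_future = _pool.submit(replacement, request)  # runs concurrently
    production = prod_future.result()
    try:
        replay = replay_future.result(timeout=REPLAY_TIMEOUT_S)
    except Exception:  # replay errors and timeouts never reach the caller
        metrics["replay.error"] += 1
    else:
        compare_live(correlation_id, production, replay)
    return production
```

Because the comparison runs before `serve` returns, its cost lands on the request path, which is why this option suits simple payloads or services that are not on the critical path.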

Load Testing

Besides functional testing, replay traffic allows us to stress test the updated system components. We can regulate the load on the replay path by controlling the amount of traffic being replayed and the new service's horizontal and vertical scale factors. This approach allows us to evaluate the performance of the new services under different traffic conditions. We can see how availability, latency, and other system performance metrics, such as CPU consumption, memory consumption, and garbage collection rate, change as the load factor changes. Load testing the system using this technique allows us to identify performance hotspots using actual production traffic profiles. It helps expose memory leaks, deadlocks, caching issues, and other system issues. It enables tuning of thread pools, connection pools, connection timeouts, and other configuration parameters. Further, it helps determine reasonable scaling policies and estimate the associated cost and the broader cost/risk tradeoff.
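The post does not specify how the amount of replayed traffic is controlled; the sketch below is one hypothetical approach. It hashes the correlation identifier into a stable bucket and applies a load factor per request, so a load factor of 0.25 replays roughly a quarter of requests, 2.5 replays each request two or three times (about 2.5 on average), and the same request always receives the same decision.

```python
import hashlib

def _bucket(correlation_id: str) -> float:
    """Map an identifier to a stable pseudo-random value in [0, 1)."""
    digest = hashlib.sha256(correlation_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") / 2**64

def replay_copies(correlation_id: str, load_factor: float) -> int:
    """Number of replay requests to send for one production request.

    The whole part of load_factor is replayed for every request and the
    fractional part is applied by sampling, so the average matches load_factor.
    """
    if load_factor < 0:
        raise ValueError("load_factor must be non-negative")
    whole = int(load_factor)
    fraction = load_factor - whole
    return whole + (1 if _bucket(correlation_id) < fraction else 0)
```

Stepping `load_factor` up while watching availability, latency, CPU, memory, and garbage collection on the replay cluster gives the load curve described above.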

Stateful Systems

We have used replay testing to build confidence in migrations involving stateless and idempotent systems. Replay testing can also validate migrations involving stateful systems, although additional measures must be taken. The production and replay paths must have distinct and isolated data stores that are in identical states before the replay of traffic is enabled. Additionally, all of the different request types that drive the state machine must be replayed. In the recording step, apart from the responses, we also want to capture the state associated with each specific response. Correspondingly, in the analysis phase, we want to compare both the response and the related state in the state machine. Given the overall complexity of using replay testing with stateful systems, we have employed other techniques in such scenarios. We will look at one of them in the follow-up blog post in this series.
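A minimal sketch of the stateful checks described above, assuming a toy in-memory key-value state machine: `initial` seeds two distinct, isolated stores in identical states, every request is replayed in order against both, and each comparison covers the response together with the state of the key that request touched. The request shape (`op`, `key`, `value`) and the handler signature are hypothetical.

```python
import copy
from typing import Callable, Dict, List

Store = Dict[str, dict]
# Hypothetical handler signature: applies a request to a store, returns a response.
Handler = Callable[[Store, dict], dict]

def replay_stateful(initial: Store, requests: List[dict],
                    production: Handler, replay: Handler) -> List[dict]:
    """Replay every request on both paths and compare response plus resulting state."""
    # Distinct, isolated stores that start in identical states.
    prod_store = copy.deepcopy(initial)
    replay_store = copy.deepcopy(initial)
    mismatches = []
    for index, request in enumerate(requests):  # all request types, in order
        prod_response = production(prod_store, request)
        replay_response = replay(replay_store, request)
        # Capture the state associated with this specific response.
        prod_state = copy.deepcopy(prod_store.get(request["key"]))
        replay_state = copy.deepcopy(replay_store.get(request["key"]))
        if (prod_response, prod_state) != (replay_response, replay_state):
            mismatches.append({"index": index, "request": request,
                               "production": (prod_response, prod_state),
                               "replay": (replay_response, replay_state)})
    return mismatches
```

Comparing responses alone would miss a new implementation that returns the right acknowledgement but leaves the store in a different state; including the state in each comparison catches it.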

We have adopted replay traffic testing at Netflix for numerous migration projects. A recent example involved using replay testing to validate an extensive re-architecture of the edge APIs that drive the playback component of our product. Another involved migrating a mid-tier service from REST to gRPC. In both cases, replay testing facilitated comprehensive functional testing, load testing, and system tuning at scale using real production traffic. This approach enabled us to identify elusive issues and rapidly build confidence in these substantial redesigns.

After concluding replay testing, we are ready to start introducing these changes in production. In an upcoming blog post, we will look at some of the techniques we use to roll out significant system changes to production in a gradual, risk-controlled way while building confidence through metrics at different levels.