Shyam Gala, Javier Fernandez-Ivern, Anup Rokkam Pratap, Devang Shah

Picture yourself enthralled by the latest episode of your favorite Netflix series, delighting in an uninterrupted, high-definition streaming experience. Behind these perfect moments of entertainment is a complex mechanism, with numerous gears and cogs working in harmony. But what happens when this machinery needs a transformation? This is where large-scale system migrations come into play. Our previous blog post presented replay traffic testing, a crucial instrument in our toolkit that allows us to implement these transformations with precision and reliability.

Replay traffic testing gives us the initial foundation of validation, but as the migration unfolds, we also need a carefully controlled rollout: a process that not only minimizes risk but also facilitates continuous evaluation of the rollout’s impact. This blog post delves into the techniques leveraged at Netflix to introduce these changes to production.

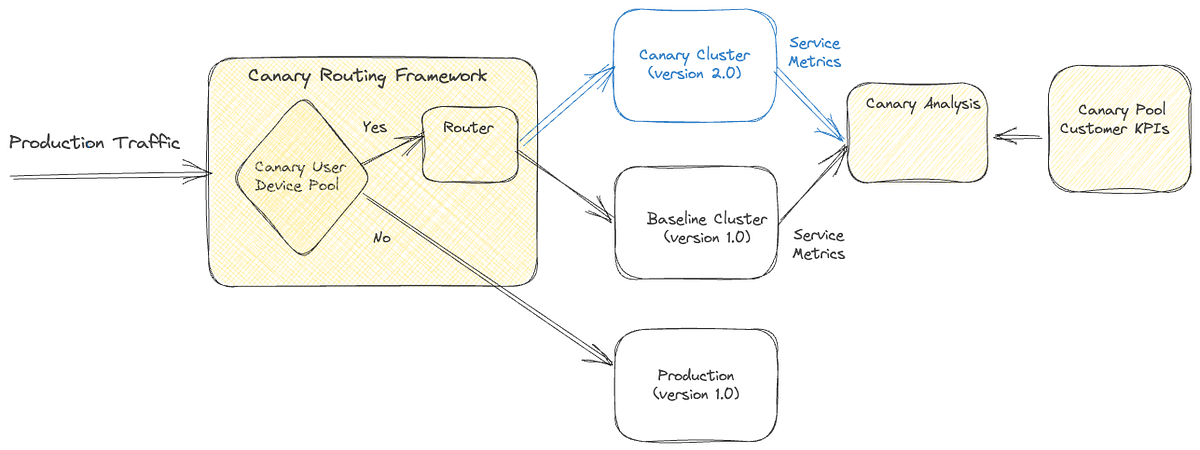

Canary deployments are an effective mechanism for validating changes to a production backend service in a controlled and limited manner, thus mitigating the risk of unforeseen consequences that may arise due to the change. This process involves creating two new clusters for the updated service: a baseline cluster containing the current version running in production and a canary cluster containing the new version of the service. A small percentage of production traffic is redirected to the two new clusters, allowing us to monitor the new version’s performance and compare it against the current version. By collecting and analyzing key performance metrics of the service over time, we can assess the impact of the new changes and determine whether they meet the availability, latency, and performance requirements.

Some product features require a lifecycle of requests between the customer device and a set of backend services to drive the feature. For instance, video playback functionality on Netflix involves requesting URLs for the streams from a service, calling the CDN to download the bits from the streams, requesting a license to decrypt the streams from a separate service, and sending telemetry indicating the successful start of playback to yet another service. By tracking metrics only at the level of the service being updated, we might miss deviations in broader end-to-end system functionality.

Sticky Canary is an improvement to the traditional canary process that addresses this limitation. In this variation, the canary framework creates a pool of unique customer devices and then routes traffic for this pool consistently to the canary and baseline clusters for the duration of the experiment. Apart from measuring service-level metrics, the canary framework is able to keep track of broader system operational and customer metrics across the canary pool and thereby detect regressions across the entire request lifecycle flow.
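To make the idea of consistent routing concrete, here is a minimal sketch of how a device could be pinned to a cluster for the length of an experiment. It assumes a hash-based allocation; the names `bucket_for`, `assign_cluster`, `experiment_id`, and `pool_fraction` are hypothetical and are not taken from the Netflix canary framework, whose actual allocation logic is not described here.

```python
import hashlib

BUCKETS = 10_000  # allocation resolution: each bucket is 0.01% of devices


def bucket_for(device_id: str, experiment_id: str) -> int:
    """Map a device to a stable bucket for one experiment (illustrative)."""
    key = f"{experiment_id}:{device_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    return int.from_bytes(digest[:8], "big") % BUCKETS


def assign_cluster(device_id: str, experiment_id: str, pool_fraction: float) -> str:
    """Return 'canary', 'baseline', or 'production' for a device.

    The result depends only on the inputs, so a device keeps the same
    assignment for every request while the experiment runs.
    """
    bucket = bucket_for(device_id, experiment_id)
    pool_size = round(pool_fraction * BUCKETS)
    if bucket >= pool_size:
        return "production"  # device is outside the canary pool
    # Split the pool evenly between the two new clusters.
    return "canary" if bucket % 2 == 0 else "baseline"
```

Because the experiment identifier is mixed into the hash, a different experiment would draw a different pool of devices, which avoids repeatedly exposing the same devices to canaries.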

It is important to note that with sticky canaries, devices in the canary pool continue to be routed to the canary throughout the experiment, potentially resulting in undesirable behavior persisting through retries on customer devices. Therefore, the canary framework is designed to monitor operational and customer KPI metrics to detect persistent deviations and terminate the canary experiment if necessary.

Canaries and sticky canaries are valuable tools in the system migration process. Compared to replay testing, canaries allow us to extend the validation scope beyond the service level. They enable verification of the broader end-to-end system functionality across the request lifecycle for that functionality, giving us confidence that the migration will not cause any disruptions to the customer experience. Canaries also provide an opportunity to measure system performance under different load conditions, allowing us to identify and resolve any performance bottlenecks. They enable us to further fine-tune and configure the system, ensuring the new changes are integrated smoothly.

A/B testing is a widely recognized method for verifying hypotheses through a controlled experiment. It involves dividing a portion of the population into two or more groups, each receiving a different treatment. The results are then evaluated using specific metrics to determine whether the hypothesis is valid. The industry frequently employs the technique to assess hypotheses related to product evolution and user interaction. It is also widely used at Netflix to test changes to product behavior and customer experience.

A/B testing is also a valuable tool for assessing significant changes to backend systems. We can determine A/B test membership in either device application or backend code and selectively invoke new code paths and services. Within the context of migrations, A/B testing enables us to limit exposure to the migrated system by enabling the new path for a smaller percentage of the member base, thereby controlling the risk of unexpected behavior resulting from the new changes. A/B testing is also a key technique in migrations where the updates to the architecture involve changing device contracts as well.

Canary experiments are typically conducted over periods ranging from hours to days. However, in certain instances, migration-related experiments may need to span weeks or months to obtain a more accurate understanding of the impact on specific Quality of Experience (QoE) metrics. Additionally, in-depth analyses of particular business Key Performance Indicators (KPIs) may require longer experiments. For instance, envision a migration scenario where we enhance the playback quality, anticipating that this improvement will lead to more customers engaging with the play button. Assessing relevant metrics across a considerable sample size is crucial for obtaining a reliable and confident evaluation of the hypothesis. A/B frameworks are effective tools for this next step in the confidence-building process.

In addition to supporting extended durations, A/B testing frameworks offer other supplementary capabilities. This approach enables test allocation restrictions based on factors such as geography, device platforms, and device versions, while also allowing for analysis of migration metrics across similar dimensions. This ensures that the changes do not disproportionately impact specific customer segments. A/B testing also provides adaptability, permitting adjustments to allocation size throughout the experiment.

We may not use A/B testing for every backend migration. Instead, we use it for migrations in which changes are expected to impact device QoE or business KPIs significantly. For example, as discussed earlier, if the planned changes are expected to improve client QoE metrics, we would test the hypothesis via A/B testing.

After completing the various stages of validation, such as replay testing, sticky canaries, and A/B tests, we can confidently assert that the planned changes will not significantly impact SLAs (service-level agreements), device-level QoE, or business KPIs. However, it is imperative that the final rollout is regulated to ensure that any unnoticed and unexpected problems do not disrupt the customer experience. To this end, we have implemented traffic dialing as the last step in mitigating the risk associated with enabling the changes in production.

A dial is a software construct that enables the controlled flow of traffic within a system. This construct samples inbound requests using a distribution function and determines whether they should be routed to the new path or kept on the existing path. The decision-making process involves assessing whether the distribution function’s output falls within the range of the predefined target percentage. The sampling is done consistently using a fixed parameter associated with the request. The target percentage is controlled via a globally scoped dynamic property that can be updated in real time. By increasing or decreasing the target percentage, traffic flow to the new path can be regulated instantaneously.

The selection of the actual sampling parameter depends on the specific migration requirements. A dial can be used to randomly sample all requests, which is achieved by selecting a variable parameter like a timestamp or a random number. Alternatively, in scenarios where the system path must remain constant with respect to customer devices, a constant device attribute such as deviceId is selected as the sampling parameter. Dials can be applied in several places, such as device application code, the relevant server component, or even at the API gateway for edge API systems, making them a versatile tool for managing migrations in complex systems.
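The dial described above can be sketched in a few lines. This is an illustration under stated assumptions, not the Netflix implementation: the `Dial` class, the SHA-256 based distribution function, and the helper `random_key` are hypothetical, and the target percentage is a plain attribute standing in for the globally scoped dynamic property.

```python
import hashlib
import random


class Dial:
    """Routes a target percentage of requests to the new path (sketch)."""

    def __init__(self, target_percentage: float = 0.0) -> None:
        # Assumption: in production this value would come from a dynamic
        # property that can be changed at runtime; here it is an attribute.
        self.target_percentage = target_percentage

    @staticmethod
    def _sample(sampling_key: str) -> float:
        """Distribution function: map a key to a stable value in [0, 100)."""
        digest = hashlib.sha256(sampling_key.encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big") % 10_000 / 100.0

    def use_new_path(self, sampling_key: str) -> bool:
        """True when the sampled value falls inside the target percentage."""
        return self._sample(sampling_key) < self.target_percentage


def random_key() -> str:
    """Variable sampling parameter: every request is sampled independently."""
    return str(random.random())
```

Passing a constant attribute such as `deviceId` as the sampling key keeps a device on the same path across requests, and a device that is on the new path at a given percentage stays on it as the percentage increases. Passing `random_key()` instead samples every request independently.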

Traffic is dialed over to the new system in measured discrete steps. At every step, relevant stakeholders are informed, and key metrics are monitored, including service, device, operational, and business metrics. If we discover an unexpected issue or notice metrics trending in an undesired direction during the migration, the dial gives us the capability to quickly roll back the traffic to the old path and address the issue.
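The step-wise rollout with rollback can be sketched as a small control loop. The function `ramp_dial`, its callbacks, and the step values are hypothetical and chosen only for illustration; a real rollout would hold at each step for an agreed duration and involve people in the decision to proceed.

```python
from typing import Callable, Sequence


def ramp_dial(
    set_percentage: Callable[[float], None],
    metrics_healthy: Callable[[float], bool],
    steps: Sequence[float] = (1.0, 5.0, 25.0, 50.0, 100.0),
) -> float:
    """Advance the dial step by step; roll back to 0% on a regression.

    Returns the percentage the dial is left at.
    """
    current = 0.0
    for pct in steps:
        set_percentage(pct)
        current = pct
        # A real rollout would pause here while stakeholders are informed
        # and metrics accumulate before the health check below.
        if not metrics_healthy(pct):
            set_percentage(0.0)  # send all traffic back to the old path
            return 0.0
    return current
```

For example, `ramp_dial(history.append, lambda pct: pct < 25.0)` with an empty list `history` records the steps 1, 5, and 25, then a rollback to 0.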

The dialing steps can also be scoped at the data center level if traffic is served from multiple data centers. We can start by dialing traffic in a single data center to allow for an easier side-by-side comparison of key metrics across data centers, thereby making it easier to observe any deviations in the metrics. The duration of each discrete dialing step can also be adjusted. Running the dialing steps for longer periods increases the probability of surfacing issues that may only affect a small group of members or devices and that might have occurred too infrequently to be captured during shadow traffic analysis. We can complete the final step of migrating all the production traffic to the new system using the combination of gradual step-wise dialing and monitoring.

Stateful APIs pose unique challenges that require different strategies. While the replay testing technique discussed in the previous part of this blog series can be employed, the additional measures outlined earlier are also necessary.

This alternate migration strategy has proven effective for our systems that meet certain criteria. Specifically, our data model is simple, self-contained, and immutable, with no relational aspects. Our system doesn’t require strict consistency guarantees and does not use database transactions. We adopt an ETL-based dual-write strategy that roughly follows this sequence of steps:

- Initial load through an ETL process: Data is extracted from the source data store, transformed into the new model, and written to the newer data store through an offline job. We use custom queries to verify the completeness of the migrated records.

- Continuous migration via dual-writes: We utilize an active-active/dual-writes strategy to migrate the bulk of the data. As a safety mechanism, we use dials (discussed previously) to control the proportion of writes that go to the new data store. To maintain state parity across both stores, we write all state-altering requests of an entity to both stores. This is achieved by selecting a sampling parameter that makes the dial sticky to the entity’s lifecycle. We incrementally turn the dial up as we gain confidence in the system while carefully monitoring its overall health. The dial also acts as a switch to turn off all writes to the new data store if necessary.

- Continuous verification of records: When a record is read, the service reads from both data stores and verifies the functional correctness of the new record if it is found in both stores. This comparison can be performed live on the request path or offline, depending on the latency requirements of the particular use case. In the case of a live comparison, we can return records from the new data store when the records match. This process gives us an idea of the functional correctness of the migration.

- Evaluation of migration completeness: To verify the completeness of the records, cold storage services are used to take periodic data dumps from the two data stores, which are then compared for completeness. Gaps in the data are filled back with an ETL process.

- Cut-over and clean-up: Once the data is verified for correctness and completeness, dual writes and reads are disabled, any client code is cleaned up, and reads and writes occur only against the new data store.
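The dual-write, verification, and completeness steps above can be sketched with two in-memory dictionaries standing in for the old and new data stores. Everything here is an assumption made for illustration: the `DualWriteStore` class, the hash-based dial keyed on the entity ID, and the `missing_from_new` helper are hypothetical, and the transformation into the new data model is reduced to a copy.

```python
import hashlib
from typing import Any, Dict, List, Optional, Set

Record = Dict[str, Any]


class DualWriteStore:
    """Writes to the old store always and to the new store when dialed in."""

    def __init__(self, old: Dict[str, Record], new: Dict[str, Record],
                 write_percentage: float = 0.0) -> None:
        self.old = old
        self.new = new
        self.write_percentage = write_percentage  # the dial; 0 turns it off
        self.mismatches: List[str] = []

    def _dialed_in(self, entity_id: str) -> bool:
        # Sampling on the entity ID keeps the dial sticky to the entity,
        # so all of its state-altering writes reach both stores.
        digest = hashlib.sha256(entity_id.encode("utf-8")).digest()
        sample = int.from_bytes(digest[:8], "big") % 10_000 / 100.0
        return sample < self.write_percentage

    def write(self, entity_id: str, record: Record) -> None:
        self.old[entity_id] = record
        if self._dialed_in(entity_id):
            # Placeholder for the transformation into the new model.
            self.new[entity_id] = dict(record)

    def read(self, entity_id: str) -> Optional[Record]:
        """Live comparison: serve the new record only when both stores agree."""
        old_record = self.old.get(entity_id)
        new_record = self.new.get(entity_id)
        if old_record is not None and new_record is not None:
            if new_record == old_record:
                return new_record
            self.mismatches.append(entity_id)  # surfaced for investigation
        return old_record


def missing_from_new(old: Dict[str, Record], new: Dict[str, Record]) -> Set[str]:
    """Completeness check over two dumps: IDs that still need a backfill."""
    return set(old) - set(new)
```

Records written before the dial was turned up appear in the result of `missing_from_new`, which corresponds to the gaps that the ETL backfill is meant to close.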

Clean-up of any migration-related code and configuration after the migration is crucial to ensure the system runs smoothly and efficiently and that we don’t build up tech debt and complexity. Once the migration is complete and validated, all migration-related code, such as traffic dials, A/B tests, and replay traffic integrations, can be safely removed from the system. This includes cleaning up configuration changes, reverting to the original settings, and disabling any temporary components added during the migration. In addition, it is important to document the entire migration process and keep records of any issues encountered and their resolution. By performing a thorough clean-up and documentation process, future migrations can be executed more efficiently and effectively, building on the lessons learned from the previous migrations.

We have utilized a range of techniques outlined in our blog posts to conduct numerous large, medium, and small-scale migrations on the Netflix platform. Our efforts have been largely successful, with minimal to no downtime or significant issues encountered. Throughout the process, we have gained valuable insights and refined our techniques. It should be noted that not all of the techniques presented are universally applicable, as each migration presents its own unique set of circumstances. Determining the appropriate level of validation, testing, and risk mitigation requires careful consideration of several factors, including the nature of the changes, potential impacts on customer experience, engineering effort, and product priorities. Ultimately, we aim to achieve seamless migrations without disruptions or downtime.

In a series of forthcoming blog posts, we will explore a selection of specific use cases where the techniques highlighted in this blog series were utilized effectively. They will focus on a comprehensive analysis of the Ads Tier Launch and an extensive GraphQL migration for various product APIs. These posts will offer readers invaluable insights into the practical application of these methodologies in real-world situations.