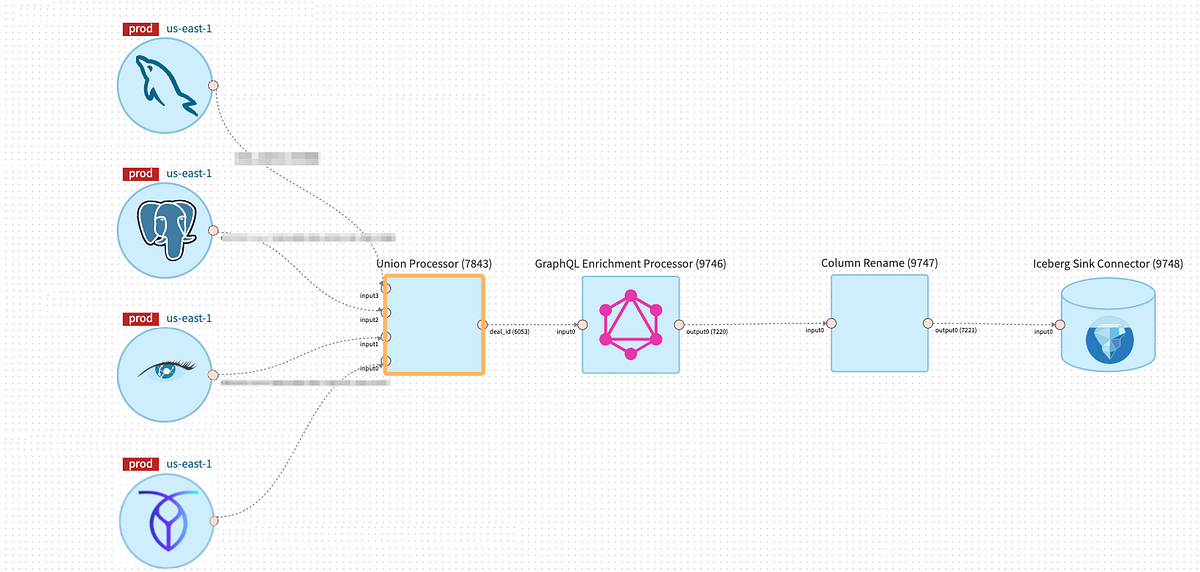

Keeping the logic of individual Processors simple made them reusable, so we could centrally manage and operate them at scale. It also made them composable, so users could combine different Processors to express the logic they needed.

However, this design decision led to a different set of challenges.

Some teams found the provided building blocks weren’t expressive enough. For use cases that weren’t solvable with existing Processors, users had to express their business logic by building a custom Processor. To do this, they had to use the low-level DataStream API from Flink and the Data Mesh SDK, which came with a steep learning curve. After a custom Processor was built, they also had to operate it themselves.

Furthermore, many pipelines needed to be composed of multiple Processors. Since each Processor was implemented as a Flink job connected to the next by Kafka topics, many pipelines carried a relatively high runtime overhead.

We explored various options to solve these challenges and eventually landed on building the Data Mesh SQL Processor, which would provide more flexibility for expressing users’ business logic.

The existing Data Mesh Processors have a lot of overlap with SQL. For example, filtering and projection can be expressed in SQL through SELECT and WHERE clauses. Additionally, instead of implementing business logic by composing multiple individual Processors, users can express their logic in a single SQL query, avoiding the additional resource and latency overhead that comes from multiple Flink jobs and Kafka topics. Finally, SQL can support User Defined Functions (UDFs) and custom connectors for lookup joins, which can be used to extend expressiveness.
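To make the overlap concrete, here is a minimal sketch rather than actual Data Mesh code. It chains two hypothetical Processors (`filter_processor` and `projection_processor`) and compares the result with a single SQL query. Python’s built-in `sqlite3` module stands in for a SQL engine here, and the `events` table and its fields are invented for illustration.

```python
import sqlite3

# Hypothetical sample events; the field names are illustrative only.
EVENTS = [
    {"user_id": 1, "country": "US", "event_type": "play", "duration_ms": 1200},
    {"user_id": 2, "country": "BR", "event_type": "pause", "duration_ms": 300},
    {"user_id": 3, "country": "US", "event_type": "play", "duration_ms": 4500},
]


def filter_processor(records, predicate):
    """Stand-in for a filter Processor: keep records matching the predicate."""
    return [r for r in records if predicate(r)]


def projection_processor(records, fields):
    """Stand-in for a projection Processor: keep only the named fields."""
    return [{f: r[f] for f in fields} for r in records]


def run_composed(records):
    # Two separate stages, analogous to two Processors chained in a pipeline.
    filtered = filter_processor(records, lambda r: r["event_type"] == "play")
    return projection_processor(filtered, ["user_id", "duration_ms"])


def run_sql(records):
    # The same logic as one query: WHERE does the filtering, SELECT the projection.
    conn = sqlite3.connect(":memory:")
    conn.row_factory = sqlite3.Row
    conn.execute(
        "CREATE TABLE events "
        "(user_id INTEGER, country TEXT, event_type TEXT, duration_ms INTEGER)"
    )
    conn.executemany(
        "INSERT INTO events VALUES (:user_id, :country, :event_type, :duration_ms)",
        records,
    )
    rows = conn.execute(
        "SELECT user_id, duration_ms FROM events "
        "WHERE event_type = 'play' ORDER BY user_id"
    ).fetchall()
    result = [dict(row) for row in rows]
    conn.close()
    return result
```

Both functions return the same records. The difference the post cares about is operational: in the composed version each stage would be its own job with a topic in between, while the SQL version is a single unit of work.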

Since Data Mesh Processors are built on top of Flink, it made sense to consider using Flink SQL instead of continuing to build additional Processors for every transform operation we needed to support.

The Data Mesh SQL Processor is a platform-managed, parameterized Flink job that takes schematized sources and a Flink SQL query to execute against those sources. By leveraging Flink SQL within a Data Mesh Processor, we were able to support streaming SQL functionality without changing the architecture of Data Mesh.

Under the hood, the Data Mesh SQL Processor is implemented using Flink’s Table API, which provides a powerful abstraction for converting between DataStreams and Dynamic Tables. Based on the sources the Processor is connected to, the SQL Processor automatically registers the upstream sources as tables within Flink’s SQL engine. The user’s query is then registered with the SQL engine and translated into a Flink job graph consisting of physical operators that can be executed on a Flink cluster. Unlike with the low-level DataStream API, users do not have to manually build a job graph from low-level operators, as this is all managed by Flink’s SQL engine.
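The flow described here (register each connected source as a table, then hand the user’s query to the SQL engine) can be sketched in a few lines. This is a rough illustration under stated assumptions, not the Data Mesh implementation: it uses Python’s built-in `sqlite3` over small in-memory batches rather than Flink’s Table API over unbounded streams, and `Source` and `run_sql_processor` are hypothetical names.

```python
import sqlite3
from dataclasses import dataclass


@dataclass
class Source:
    """Hypothetical schematized source: a name, column types, and sample rows."""
    name: str
    schema: dict  # column name -> SQL type, e.g. {"user_id": "INTEGER"}
    rows: list    # tuples whose order matches the schema's column order


def run_sql_processor(sources, query):
    """Register every source as a table, then execute the user's query."""
    conn = sqlite3.connect(":memory:")
    try:
        for src in sources:
            columns = ", ".join(
                f'"{col}" {sql_type}' for col, sql_type in src.schema.items()
            )
            conn.execute(f'CREATE TABLE "{src.name}" ({columns})')
            placeholders = ", ".join("?" for _ in src.schema)
            conn.executemany(
                f'INSERT INTO "{src.name}" VALUES ({placeholders})', src.rows
            )
        # The user only supplies the query; table setup is handled for them.
        return conn.execute(query).fetchall()
    finally:
        conn.close()


plays = Source(
    "plays",
    {"user_id": "INTEGER", "title_id": "INTEGER"},
    [(1, 10), (2, 20), (1, 20)],
)
titles = Source(
    "titles",
    {"title_id": "INTEGER", "name": "TEXT"},
    [(10, "Alpha"), (20, "Beta")],
)

result = run_sql_processor(
    [plays, titles],
    "SELECT p.user_id, t.name FROM plays AS p "
    "JOIN titles AS t ON p.title_id = t.title_id "
    "ORDER BY p.user_id, t.name",
)
```

The point of the parameterization is that the job itself stays generic: only the list of sources and the query text change from one pipeline to the next.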

The SQL Processor enables users to fully leverage the capabilities of the Data Mesh platform. These include features such as autoscaling, the ability to manage pipelines declaratively via Infrastructure as Code, and a rich connector ecosystem.

To ensure a seamless user experience, we’ve enhanced the Data Mesh platform with SQL-centric features. These enhancements include an Interactive Query Mode, real-time query validation, and automated schema inference.

To understand how these features help users be more productive, let’s take a look at a typical user workflow when using the Data Mesh SQL Processor.

- Users start their journey by live sampling their upstream data sources using the Interactive Query Mode.

- As the user iterates on their SQL query, the query validation service provides real-time feedback about the query.

- With a valid query, users can leverage the Interactive Query Mode again to execute the query and get the live results streamed back to the UI within seconds.

- For more efficient schema management and evolution, the platform automatically infers the output schema based on the fields selected by the SQL query.

- Once the user is done editing their query, it is saved to the Data Mesh Pipeline, which is then deployed as a long-running, streaming SQL job.
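The validation and schema inference steps in this workflow can be illustrated with a small sketch. It is an assumption-laden stand-in, not the platform’s validation service: it uses Python’s built-in `sqlite3`, and `make_engine`, `validate_query`, `infer_output_schema`, and the `plays` table are all hypothetical. Validation compiles the query with `EXPLAIN` so errors surface without running it, and the output schema is read from the result’s column metadata plus one sample row.

```python
import sqlite3


def make_engine():
    """Create an in-memory engine with one hypothetical source table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE plays (user_id INTEGER, title TEXT, duration_ms INTEGER)"
    )
    conn.executemany(
        "INSERT INTO plays VALUES (?, ?, ?)",
        [(1, "Alpha", 1200), (2, "Beta", 4500)],
    )
    return conn


def validate_query(conn, query):
    """Compile the query without running it; return (ok, error message)."""
    try:
        conn.execute(f"EXPLAIN {query}")
        return True, None
    except sqlite3.Error as exc:
        return False, str(exc)


def infer_output_schema(conn, query):
    """Take field names from the query's result columns and types from one sample row."""
    cursor = conn.execute(query)
    names = [col[0] for col in cursor.description]
    sample = cursor.fetchone()
    if sample is None:
        # No rows to sample from, so only the field names are known.
        return {name: None for name in names}
    return {name: type(value).__name__ for name, value in zip(names, sample)}


conn = make_engine()
good = (
    "SELECT user_id, duration_ms / 1000.0 AS watch_seconds "
    "FROM plays WHERE duration_ms > 1000"
)
bad = "SELECT missing_field FROM plays"

ok, error = validate_query(conn, good)
bad_ok, bad_error = validate_query(conn, bad)
schema = infer_output_schema(conn, good)
```

A production service would derive types from the query plan rather than from sampled values, but the idea is the same: the user writes only the query, and the output schema follows from the selected fields.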