by Binbing Hou, Stephanie Vezich Tamayo, Xiao Chen, Liang Tian, Troy Ristow, Haoyuan Wang, Snehal Chennuru, Pawan Dixit

This is the first in a series on our work at Netflix on leveraging data insights and Machine Learning (ML) to improve operational automation around the performance and cost efficiency of big data jobs. Operational automation (including, but not limited to, auto diagnosis, auto remediation, auto configuration, auto tuning, auto scaling, auto debugging, and auto testing) is key to the success of modern data platforms. In this blog post, we present our project on Auto Remediation, which integrates the currently used rule-based classifier with an ML service and aims to automatically remediate failed jobs without human intervention. We have deployed Auto Remediation in production for handling memory configuration errors and unclassified errors of Spark jobs and observed its efficiency and effectiveness (e.g., automatically remediating 56% of memory configuration errors and saving 50% of the monetary costs caused by all errors), as well as great potential for further improvements.

At Netflix, hundreds of thousands of workflows and millions of jobs run per day across multiple layers of the big data platform. Given the extensive scope and intricate complexity inherent to such a distributed, large-scale system, even if failed jobs account for a tiny portion of the total workload, diagnosing and remediating job failures can cause considerable operational burdens.

For efficient error handling, Netflix developed an error classification service, called Pensive, which leverages a rule-based classifier for error classification. The rule-based classifier classifies job errors based on a set of predefined rules and provides insights for schedulers to decide whether to retry the job and for engineers to diagnose and remediate the job failure.

However, as the system has increased in scale and complexity, the rule-based classifier has been facing challenges due to its limited support for operational automation, especially for handling memory configuration errors and unclassified errors. As a result, the operational cost increases linearly with the number of failed jobs. In some cases (for example, diagnosing and remediating job failures caused by Out-Of-Memory (OOM) errors), joint effort across teams is required, involving not only the users themselves but also support engineers and domain experts.

To address these challenges, we have developed a new feature, called Auto Remediation, which integrates the rule-based classifier with an ML service. Based on the classification from the rule-based classifier, it uses an ML service to predict retry success probability and retry cost and to select the best candidate configuration as a recommendation, and it uses a configuration service to automatically apply the recommendation. Its major advantages are as follows:

- Integrated intelligence. Instead of completely deprecating the current rule-based classifier, Auto Remediation integrates the classifier with an ML service so that it can leverage the merits of both: the rule-based classifier provides static, deterministic classification results per error class, based on the context of domain experts; the ML service provides performance- and cost-aware recommendations per job, leveraging the power of ML. With this integrated intelligence, we can properly meet the requirements of remediating different errors.

- Fully automated. The pipeline of classifying errors, getting recommendations, and applying recommendations is fully automated. It provides the recommendations together with the retry decision to the scheduler, and notably uses an online configuration service to store and apply recommended configurations. In this way, no human intervention is required in the remediation process.

- Multi-objective optimizations. Auto Remediation generates recommendations by considering both performance (i.e., the retry success probability) and compute cost efficiency (i.e., the monetary costs of running the job) to avoid blindly recommending configurations with excessive resource consumption. For example, for memory configuration errors, it searches multiple parameters related to the memory usage of job execution and recommends the combination that minimizes a linear combination of failure probability and compute cost.

These advantages have been verified by the production deployment for remediating Spark job failures. Our observations indicate that Auto Remediation can successfully remediate about 56% of all memory configuration errors by applying the recommended memory configurations online without human intervention, while reducing cost by about 50% due to its ability to recommend new configurations that make retries after memory configuration errors succeed and to disable unnecessary retries for unclassified errors. We have also noted great potential for further improvement through model tuning (see the Rollout in Production section).

Basics

Figure 1 illustrates the error classification service, i.e., Pensive, in the data platform. It leverages the rule-based classifier and is composed of three components:

- Log Collector is responsible for pulling logs from different platform layers for error classification (e.g., the scheduler, job orchestrator, and compute clusters).

- Rule Execution Engine is responsible for matching the collected logs against a set of predefined rules. A rule includes (1) the name, source, log, and summary of the error and whether the error is restartable; and (2) the regex to identify the error from the log. For example, the rule with the name SparkDriverOOM includes the information indicating that if the stdout log of a Spark job matches the regex SparkOutOfMemoryError:, then this error is classified as a user error that is not restartable.

- Result Finalizer is responsible for finalizing the error classification result based on the matched rules. If one or multiple rules are matched, then the classification of the first matched rule determines the final classification result (rule priority is determined by rule ordering, and the first rule has the highest priority). On the other hand, if no rules are matched, the error is considered unclassified.
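The matching and finalizing steps above can be sketched as a first-match-wins scan over an ordered rule list. This is a minimal illustration, not Pensive's implementation: the `Rule` fields, the category strings, and the second rule are assumptions made up for the example; only the SparkDriverOOM name, its stdout source, and its regex come from the text.

```python
import re
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Rule:
    name: str
    source: str        # which collected log the regex is applied to
    pattern: str       # regex that identifies the error
    category: str      # hypothetical label for the error class
    restartable: bool


# Ordered list: earlier rules have higher priority.
RULES: List[Rule] = [
    Rule("SparkDriverOOM", "stdout", r"SparkOutOfMemoryError:", "user_error", False),
    # Hypothetical second rule, only here to show ordering.
    Rule("ExampleTransientError", "stderr", r"Connection reset by peer", "platform_error", True),
]


def classify(logs: Dict[str, str], rules: List[Rule] = RULES) -> Optional[Rule]:
    """Return the first rule whose regex matches its log, or None if unclassified."""
    for rule in rules:
        if re.search(rule.pattern, logs.get(rule.source, "")):
            return rule
    return None
```

A `None` result corresponds to an unclassified error, which is the case the ML service later handles.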

Challenges

While the rule-based classifier is simple and has been effective, it is facing challenges due to its limited ability to handle errors caused by misconfigurations and to classify new errors:

- Memory configuration errors. The rule-based classifier provides error classification results indicating whether to restart the job; however, for non-transient errors, it still relies on engineers to manually remediate the job. The most notable example is memory configuration errors. Such errors are generally caused by the misconfiguration of job memory. Setting an excessively small memory can result in Out-Of-Memory (OOM) errors, while setting an excessively large memory can waste cluster memory resources. What is more challenging is that some memory configuration errors require changing the configurations of multiple parameters. Thus, setting a proper memory configuration requires not only manual operation but also expertise in Spark job execution. In addition, even if a job's memory configuration is initially well tuned, changes such as data size and job definition can cause performance to degrade. Given that about 600 memory configuration errors per month are observed in the data platform, timely remediation of memory configuration errors alone requires non-trivial engineering effort.

- Unclassified errors. The rule-based classifier relies on data platform engineers to manually add rules for recognizing errors based on the known context; otherwise, the errors will be unclassified. Due to migrations of different layers of the data platform and the diversity of applications, existing rules can become invalid, and adding new rules requires engineering effort and also depends on the deployment cycle. More than 300 rules have been added to the classifier, yet about 50% of all failures remain unclassified. For unclassified errors, the job may be retried multiple times with the default retry policy. If the error is non-transient, these failed retries incur unnecessary job running costs.

Methodology

To address the above-mentioned challenges, our basic methodology is to integrate the rule-based classifier with an ML service to generate recommendations, and to use a configuration service to apply the recommendations automatically:

- Generating recommendations. We use the rule-based classifier as the first pass to classify all errors based on predefined rules, and the ML service as the second pass to provide recommendations for memory configuration errors and unclassified errors.

- Applying recommendations. We use an online configuration service to store and apply the recommended configurations. The pipeline is fully automated, and the services used to generate and apply recommendations are decoupled.

Service Integrations

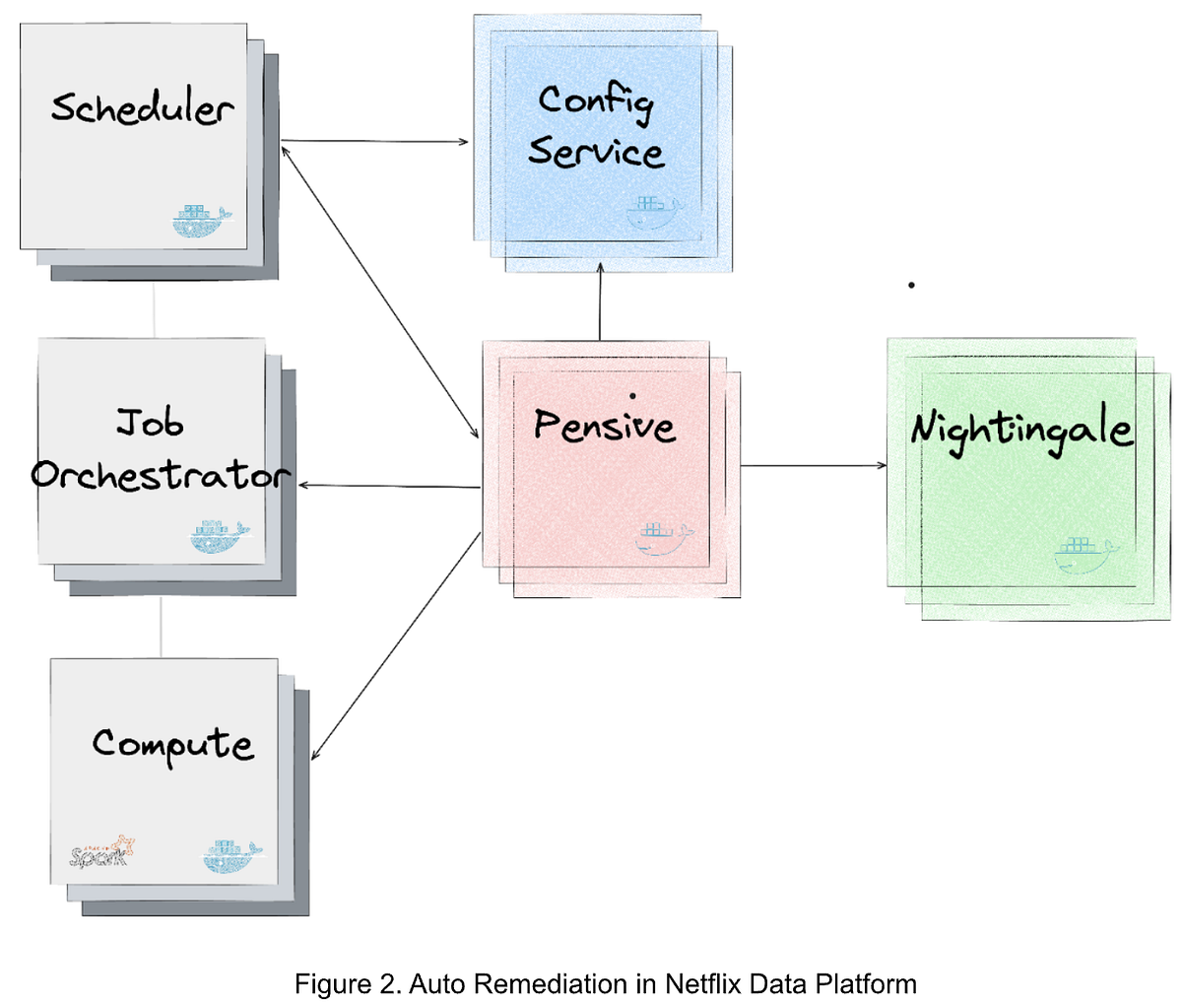

Figure 2 illustrates the integration of the services generating and applying the recommendations in the data platform. The major services are as follows:

- Nightingale is a service running the ML model trained using Metaflow and is responsible for generating a retry recommendation. The recommendation includes (1) whether the error is restartable; and (2) if so, the recommended configurations to restart the job.

- ConfigService is an online configuration service. The recommended configurations are saved in ConfigService as a JSON patch with a scope defined to specify the jobs that can use the recommended configurations. When Scheduler calls ConfigService to get recommended configurations, Scheduler passes the original configurations to ConfigService, and ConfigService returns the mutated configurations by applying the JSON patch to the original configurations. Scheduler can then restart the job with the mutated configurations (including the recommended configurations).

- Pensive is an error classification service that leverages the rule-based classifier. It calls Nightingale to get recommendations and stores the recommendations in ConfigService so that they can be picked up by Scheduler to restart the job.

- Scheduler is the service scheduling jobs (our current implementation is with Netflix Maestro). Each time a job fails, it calls Pensive to get the error classification to decide whether to restart the job, and calls ConfigService to get the recommended configurations for restarting the job.
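To make the ConfigService step concrete, here is a minimal sketch of applying a JSON patch to a flat job configuration. It is an illustration under stated assumptions, not ConfigService's code: it handles only the `add`, `replace`, and `remove` operations on top-level keys, the function names are hypothetical, and scope matching (deciding which jobs a patch applies to) is left out.

```python
import copy
from typing import Any, Dict, List


def _top_level_key(path: str) -> str:
    """Decode a JSON Pointer that addresses a single top-level key."""
    if not path.startswith("/") or "/" in path[1:]:
        raise ValueError(f"only top-level paths are supported in this sketch: {path!r}")
    # JSON Pointer escapes: "~1" means "/", "~0" means "~" (decode in this order).
    return path[1:].replace("~1", "/").replace("~0", "~")


def apply_patch(original: Dict[str, Any], patch: List[Dict[str, Any]]) -> Dict[str, Any]:
    """Return a mutated copy of `original`; the input is left untouched."""
    conf = copy.deepcopy(original)
    for op in patch:
        key = _top_level_key(op["path"])
        if op["op"] == "add":
            conf[key] = op["value"]
        elif op["op"] == "replace":
            if key not in conf:
                raise KeyError(f"cannot replace missing key: {key}")
            conf[key] = op["value"]
        elif op["op"] == "remove":
            del conf[key]
        else:
            raise ValueError(f"unsupported op in this sketch: {op['op']}")
    return conf


# Example: the values below are illustrative, not recommendations from the post.
original = {"spark.executor.memory": "4g", "spark.executor.cores": 4}
patch = [
    {"op": "replace", "path": "/spark.executor.memory", "value": "8g"},
    {"op": "add", "path": "/spark.executor.memoryOverhead", "value": "1g"},
]
mutated = apply_patch(original, patch)
```

Storing a patch rather than a full configuration means the recommendation only touches the parameters it cares about, and everything else in the original configuration passes through unchanged.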

Figure 3 illustrates the sequence of service calls with Auto Remediation:

- Upon a job failure, Scheduler calls Pensive to get the error classification.

- Pensive classifies the error based on the rule-based classifier. If the error is identified as a memory configuration error or an unclassified error, it calls Nightingale to get recommendations.

- With the obtained recommendations, Pensive updates the error classification result and saves the recommended configurations to ConfigService, and then returns the error classification result to Scheduler.

- Based on the error classification result received from Pensive, Scheduler determines whether to restart the job.

- Before restarting the job, Scheduler calls ConfigService to get the recommended configuration and retries the job with the new configuration.
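The call sequence above can be sketched in-process with plain functions standing in for the services. Everything here is a simplification for illustration: the function names, the dictionary shapes, the category strings, and the use of a dict as the configuration store are assumptions, and the real services communicate over the network rather than through function calls. The sketch also assumes an unclassified error is restartable by default, reflecting the default retry policy mentioned earlier.

```python
from typing import Any, Callable, Dict, Optional

Conf = Dict[str, Any]


def pensive_classify(
    job_id: str,
    rule_classifier: Callable[[str], Optional[dict]],
    nightingale: Callable[[str], dict],
    config_store: Dict[str, Conf],
) -> dict:
    """Rule-based first pass, then the ML second pass where it applies."""
    result = rule_classifier(job_id) or {"category": "unclassified", "restartable": True}
    if result["category"] in ("memory_configuration", "unclassified"):
        rec = nightingale(job_id)
        # The ML recommendation updates the retry decision.
        result = dict(result, restartable=rec["restartable"])
        if rec["restartable"] and rec.get("conf"):
            config_store[job_id] = rec["conf"]  # saved for Scheduler to pick up
    return result


def scheduler_on_failure(
    job_id: str,
    original_conf: Conf,
    classify: Callable[[str], dict],
    config_store: Dict[str, Conf],
) -> Optional[Conf]:
    """Return the configuration to retry with, or None when no retry should happen."""
    result = classify(job_id)
    if not result["restartable"]:
        return None
    # Overlay the recommended values on the original configuration.
    return {**original_conf, **config_store.get(job_id, {})}
```

Keeping the classification call and the configuration lookup as separate steps mirrors the decoupling described earlier: Scheduler does not need to know how a recommendation was produced.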

Overview

The ML service, i.e., Nightingale, aims to generate a retry policy for a failed job that trades off retry success probability against job running costs. It consists of two major components:

- A prediction model that jointly estimates a) the probability of retry success, and b) the retry cost in dollars, conditional on properties of the retry.

- An optimizer that explores the Spark configuration parameter space to recommend a configuration that minimizes a linear combination of retry failure probability and cost.

The prediction model is retrained offline daily, and is called by the optimizer to evaluate each candidate set of configuration parameter values. The optimizer runs in a RESTful service which is called upon job failure. If the optimization yields a feasible configuration solution, the response includes this recommendation, which ConfigService uses to mutate the configuration for the retry. If there is no feasible solution (in other words, the retry is unlikely to succeed by changing Spark configuration parameters alone), the response includes a flag to disable retries and thus eliminate wasted compute cost.
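One way to write the optimizer's objective, as a sketch: the symbols below are notation introduced here rather than taken from the post, and the weight $\lambda$ and the exact feasibility criterion are not specified in the text.

$$
c^{*} = \arg\min_{c \in \mathcal{C}} \; \hat{p}_{\text{fail}}(x, c) + \lambda \, \widehat{\text{cost}}(x, c)
$$

Here $x$ stands for the job's fixed features, $c$ for a candidate Spark configuration drawn from the search space $\mathcal{C}$, $\hat{p}_{\text{fail}}$ and $\widehat{\text{cost}}$ for the two outputs of the prediction model, and $\lambda \ge 0$ for the weight that trades failure probability against cost. Raising $\lambda$ favors cheaper configurations; lowering it favors configurations more likely to succeed.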

Prediction Model

Given that we want to explore how retry success and retry cost might change under different configuration scenarios, we need some way to predict these two values using the information we have about the job. Data Platform logs both the retry success outcome and the execution cost, giving us reliable labels to work with. Since we use a shared feature set to predict both targets, have good labels, and need to run inference quickly online to meet SLOs, we decided to formulate the problem as a multi-output supervised learning task. In particular, we use a simple Feedforward Multilayer Perceptron (MLP) with two heads, one to predict each outcome.

Training: Each record in the training set represents a potential retry which previously failed due to memory configuration errors or unclassified errors. The labels are: a) did the retry fail, b) retry cost. The raw feature inputs are largely unstructured metadata about the job, such as the Spark execution plan, the user who ran it, and the Spark configuration parameters and other job properties. We split these features into those that can be parsed into numeric values (e.g., the Spark executor memory parameter) and those that cannot (e.g., user name). We use feature hashing to process the non-numeric values because they come from a high-cardinality and dynamic set of values. We then create a lower-dimensionality embedding which is concatenated with the normalized numeric values and passed through several more layers.

Inference: Upon passing validation audits, each new model version is stored in Metaflow Hosting, a service provided by our internal ML Platform. The optimizer makes several calls to the model prediction function for each incoming configuration recommendation request, as described in more detail below.
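The feature preparation described under Training can be illustrated with a small sketch of the hashing trick. This is not the production pipeline: the bucket count, the choice of MD5 as the hash, the signed-hash variant, and the function names are all assumptions, and the learned embedding layer that the model applies to the hashed values is skipped, so the hashed vector is concatenated directly with the normalized numeric values.

```python
import hashlib
from typing import Dict, List, Tuple


def hash_features(categorical: Dict[str, str], n_buckets: int = 16) -> List[float]:
    """Map arbitrary name=value pairs into a fixed-length vector (signed hashing trick)."""
    vec = [0.0] * n_buckets
    for name, value in categorical.items():
        digest = hashlib.md5(f"{name}={value}".encode("utf-8")).digest()
        h = int.from_bytes(digest[:8], "big")
        index = h % n_buckets                      # bucket for this feature
        sign = 1.0 if (h >> 63) == 0 else -1.0     # top bit decides the sign
        vec[index] += sign
    return vec


def normalize(numeric: Dict[str, float], stats: Dict[str, Tuple[float, float]]) -> List[float]:
    """Standardize numeric features with precomputed (mean, std) per feature."""
    return [(numeric[k] - stats[k][0]) / stats[k][1] for k in sorted(stats)]


def build_input(categorical: Dict[str, str],
                numeric: Dict[str, float],
                stats: Dict[str, Tuple[float, float]],
                n_buckets: int = 16) -> List[float]:
    """Concatenate hashed non-numeric features with normalized numeric ones."""
    return hash_features(categorical, n_buckets) + normalize(numeric, stats)
```

Because the output length depends only on `n_buckets`, a user name never seen during training still produces a valid input vector, which is the property that makes hashing attractive for a high-cardinality, changing set of values.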

Optimizer

When a job attempt fails, it sends a request to Nightingale with a job identifier. From this identifier, the service constructs the feature vector to be used in inference calls. As described previously, some of these features are Spark configuration parameters which are candidates to be mutated (e.g., spark.executor.memory, spark.executor.cores). The set of Spark configuration parameters was based on the distilled knowledge of domain experts who work extensively on Spark performance tuning. We use Bayesian Optimization (implemented via Meta's Ax library) to explore the configuration space and generate a recommendation. At each iteration, the optimizer generates a candidate parameter value combination (e.g., spark.executor.memory=7192 mb, spark.executor.cores=8), then evaluates that candidate by calling the prediction model to estimate retry failure probability and cost using the candidate configuration (i.e., mutating their values in the feature vector). After a fixed number of iterations is exhausted, the optimizer returns the "best" configuration solution (i.e., the one that minimized the combined retry failure and cost objective) for ConfigService to use if it is feasible. If no feasible solution is found, we disable retries.
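The shape of this propose-evaluate-keep-best loop can be sketched as follows. Note the substitution: this sketch uses plain random search instead of Bayesian Optimization and does not call the Ax library, so it shows the loop structure only, not how candidates are chosen in production. The search bounds, the cost weight, the feasibility threshold, and the toy predictor are all hypothetical.

```python
import random
from typing import Callable, Dict, Optional, Tuple

Conf = Dict[str, int]
Predictor = Callable[[Conf], Tuple[float, float]]  # returns (p_fail, cost)

# Hypothetical search bounds; memory is in MB.
SEARCH_SPACE: Dict[str, Tuple[int, int]] = {
    "spark.executor.memory": (2048, 16384),
    "spark.executor.cores": (1, 8),
}


def recommend(predict: Predictor,
              n_iter: int = 50,
              cost_weight: float = 0.1,
              max_p_fail: float = 0.5,
              seed: int = 0) -> Optional[Conf]:
    """Return the best feasible candidate, or None to signal 'disable retries'."""
    rng = random.Random(seed)
    best_conf: Optional[Conf] = None
    best_obj = float("inf")
    for _ in range(n_iter):
        # Random search stands in for the Bayesian candidate-generation step.
        cand = {k: rng.randint(lo, hi) for k, (lo, hi) in SEARCH_SPACE.items()}
        p_fail, cost = predict(cand)
        if p_fail > max_p_fail:
            continue  # treated as infeasible in this sketch
        obj = p_fail + cost_weight * cost  # linear combination of the two outputs
        if obj < best_obj:
            best_conf, best_obj = cand, obj
    return best_conf


def toy_predict(conf: Conf) -> Tuple[float, float]:
    """Stand-in for the prediction model: fails below 6000 MB, cost grows with size."""
    p_fail = 1.0 if conf["spark.executor.memory"] < 6000 else 0.1
    cost = conf["spark.executor.memory"] / 1024 * conf["spark.executor.cores"] * 0.01
    return p_fail, cost
```

With `toy_predict`, the loop settles on a configuration at or above the 6000 MB threshold while the cost term pushes it away from needlessly large values; with a predictor that always reports a high failure probability, it returns `None`, which corresponds to disabling retries.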

One downside of the iterative design of the optimizer is that any bottleneck can block completion and cause a timeout, which we initially observed in a non-trivial number of cases. Upon further profiling, we found that most of the latency came from the candidate generation step (i.e., figuring out which directions to step in the configuration space after the previous iteration's evaluation results). We found that this issue had been raised with the Ax library owners, who added GPU acceleration options to their API. Leveraging this option decreased our timeout rate substantially.

Rollout in Production

We have deployed Auto Remediation in production to handle memory configuration errors and unclassified errors for Spark jobs. Besides the retry success probability and cost efficiency, the impact on user experience is the major concern:

- For memory configuration errors: Auto Remediation improves user experience because, for memory configuration errors, a job retry is not successful without a new configuration. This means that a successful retry with the recommended configurations can reduce the operational load and save job running costs, while a failed retry does not make the user experience worse.

- For unclassified errors: Auto Remediation recommends whether to restart the job if the error cannot be classified by existing rules in the rule-based classifier. In particular, if the ML model predicts that the retry is very likely to fail, it will recommend disabling the retry, which can save the job running costs of unnecessary retries. For cases in which the job is business-critical and the user prefers always retrying the job even if the retry success probability is low, we can add a new rule to the rule-based classifier so that the same error will be classified by the rule-based classifier next time, skipping the recommendations of the ML service. This illustrates the advantages of the integrated intelligence of the rule-based classifier and the ML service.

The deployment in production has demonstrated that Auto Remediation can provide effective configurations for memory configuration errors, successfully remediating about 56% of all memory configuration errors without human intervention. It also decreases the compute cost of these jobs by about 50% because it can either recommend new configurations to make the retry successful or disable unnecessary retries. As the tradeoffs between performance and cost efficiency are tunable, we can choose to achieve a higher success rate or more cost savings by tuning the ML service.

It is worth noting that the ML service currently adopts a conservative policy for disabling retries. As discussed above, this is to avoid affecting the cases in which users prefer always retrying the job upon failure. Although these cases are expected and can be addressed by adding new rules to the rule-based classifier, we consider it helpful to tune the objective function incrementally, gradually disabling more retries, in order to provide a desirable user experience. Given that the current policy for disabling retries is conservative, Auto Remediation has great potential to eventually bring much more cost savings without affecting the user experience.

Auto Remediation is our first step in leveraging data insights and Machine Learning (ML) for improving user experience, reducing the operational burden, and improving the cost efficiency of the data platform. It focuses on automating the remediation of failed jobs, but also paves the path to automating operations other than error handling.

One of the initiatives we are taking, called Right Sizing, is to reconfigure scheduled big data jobs to request the proper resources for job execution. For example, we have noted that the average requested executor memory of Spark jobs is about four times their max used memory, indicating significant overprovisioning. In addition to the configurations of the job itself, the resource overprovisioning of the container that is requested to execute the job can also be reduced for cost savings. With heuristic- and ML-based methods, we can infer the proper configurations of job execution to minimize resource overprovisioning and save millions of dollars per year without affecting performance. Similar to Auto Remediation, these configurations can be automatically applied via ConfigService without human intervention. Right Sizing is in progress and will be covered in more detail in a dedicated technical blog post later. Stay tuned.

Auto Remediation is the joint work of engineers from different teams and organizations. This work would not have been possible without solid, in-depth collaboration. We would like to thank everyone involved, including Spark experts, data scientists, ML engineers, scheduler and job orchestrator engineers, data engineers, and support engineers, for sharing context and providing constructive suggestions and valuable feedback (e.g., John Zhuge, Jun He, Holden Karau, Samarth Jain, Julian Jaffe, Batul Shajapurwala, Michael Sachs, Faisal Siddiqi).